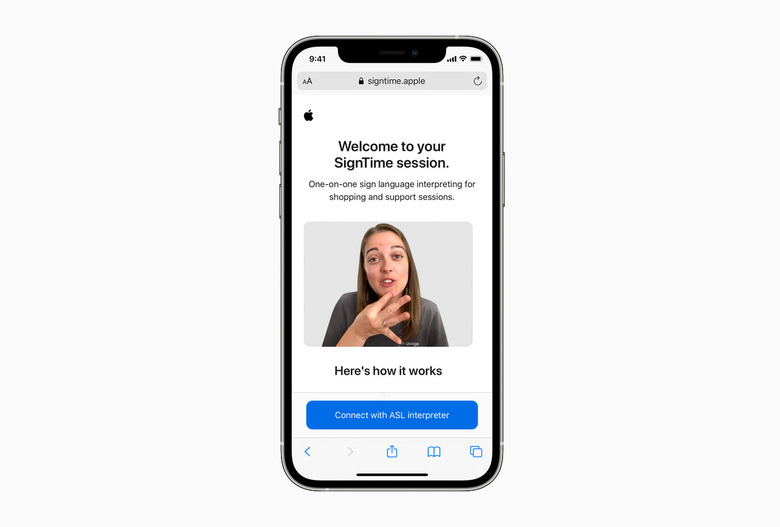

Apple's SignTime brings up a sign language interpreter on demand

Eye-tracking for iPads, bi-directional hearing aids and AssistiveTouch for watchOS are coming too.

Apple has long taken accessibility seriously. Spend five minutes in the Accessibility section of your iPhone's settings and you'll find a veritable trove of helpful options, many of them useful to everyone. Today, the company is announcing a host of new tools across its ecosystem of products.

Perhaps the most impactful is SignTime. Initially, SignTime will be available for the most obvious way of contacting the company: customer service. With SignTime, you will be able to connect with a sign language interpreter in real time via your phone or browser. Customers have previously been able to arrange an in-store visit with a sign language interpreter, but with SignTime you will also be able to walk into a store at any time and use the service to make purchases or seek technical assistance. SignTime launches tomorrow and will be available in American, British and French sign languages, with more languages to be added in the future.

If you own an Apple Watch (or want one but worry about your ability to use it), the arrival of AssistiveTouch on the wearable will be welcome news. AssistiveTouch has been available on the iPhone for a while now. On the watch, it will let you navigate and perform core tasks, like answering a call or starting a workout, with one-handed gestures. Think clenching a fist or pinching your fingers to trigger an action or move an on-screen cursor. It's not yet clear, though, how the feature will accommodate different abilities, for example if your wrists aren't as dexterous or pinching isn't an option.

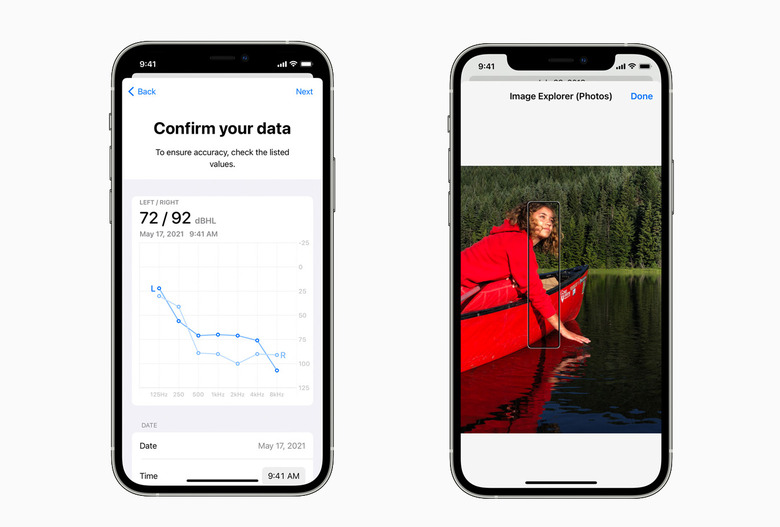

The iPad gets its own addition: eye-tracking support at the OS level. With a compatible MFi accessory, you'll soon be able to control your tablet with your gaze alone. Apple says third-party partners are on board and that compatible devices will be available later this year. Use your eyes to move the cursor and hold your gaze on an item to click it. Simple.

For those with hearing impairments, support for new bi-directional hearing aids should make placing and receiving calls a little more seamless. Apple was an early pioneer in making hearing aids work smoothly with the iPhone. Its unique implementation of Bluetooth meant "Made for iPhone" hearing aids could easily connect to your handset in a way that wasn't possible on Android. Google's operating system has caught up somewhat in recent years, but support is still hit-and-miss.

The "bi-directional" part simply means that compatible hearing aids with built-in microphones can be used much like AirPods on a call. There's no need to touch the phone or use its mic: just answer the call, and both sides of the conversation are handled in-ear.

If your accessibility needs are vision-related, VoiceOver on the iPhone is going to become a lot more robust. Right now, your handset can tell you what's on screen and even describe what's in an image, but not much beyond that. In the next iteration, it will be able to offer more specific information, such as the color of the dog in a photo or what's around it. If a friend or family member is in the image, and your phone knows who that person is via tags and face recognition, it'll be able to tell you that it's Uncle Jerome in a plaid shirt with his golden retriever. Nifty.

There are many more clever tricks on the way. Improved optical character recognition (OCR) will let you indicate which section of text to read, rather than having the phone read out all of it. And a new background sounds feature will help those sensitive to external stimuli by playing a selection of soothing sounds under their media to further block out outside noise.

Most of the new features will roll out in the coming months, with SignTime launching tomorrow.