Google makes its AR-centric Depth API available to all developers

Augmented reality just got more realistic.

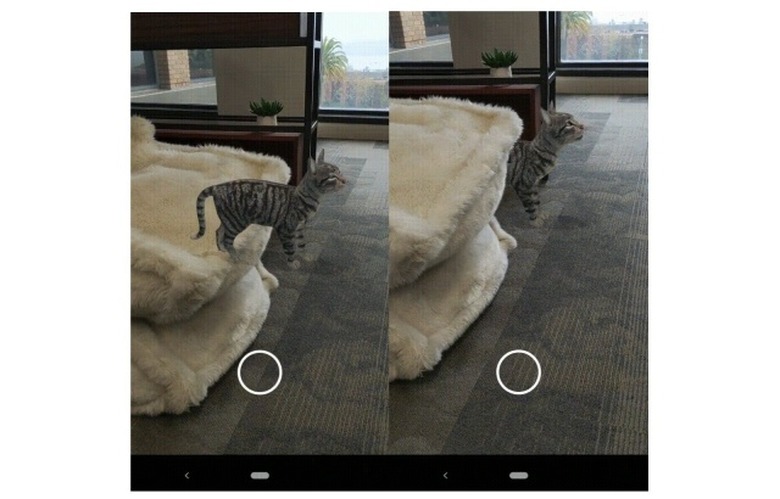

Google initially unveiled its Depth API, part of its augmented reality (AR) toolbox, last December. Incorporating occlusion, 3D understanding and a new level of realism, the feature makes AR objects appear as if they're in your actual space by responding to real-world cues. An AR chair will appear behind a physical table instead of floating atop it, for example. At launch, the experimental feature was only available to select partners; now it's available to all developers on Android and Unity.

The ARCore Depth API is already being put to a range of uses. Illumix's game Five Nights at Freddy's AR: Special Delivery uses it so that characters can hide behind objects for more startling jump scares. TeamViewer Pilot, meanwhile, uses it to place AR annotations more precisely on video calls. Remember Snapchat's dancing hotdog lens? That's also now using Google's Depth API.

There's no special hardware involved: the API will work on all compatible Android devices, whether developers build with Android or Unity, and they can get to work using open-source code on GitHub. For end users, there are already a number of depth-enabled AR experiences to try out, such as Lines of Play, which lets you design elaborate domino creations, topple them over and watch them collide with the furniture and walls in your room. Google says that more experiences using surface interaction will follow later this year, including SKATRIX, a new game from Reality Crisis that turns your home into a digital skate park, and SPLASHAAR from ForwARdgames, which lets you race your friends as AR snails over surfaces in your room.