MIT gives soft robots a better sense of touch and spatial awareness

Teams added balloon-based sensors and cameras to robotic grippers.

You know robotic grippers are getting advanced when they can pick up a potato chip without crushing it. To do that, they need tactile sensing and proprioception, meaning an awareness of where they are in space. This kind of sensing has been absent in most soft robots, but now two teams from MIT have solutions that could change that. Their research may enable soft robots to better sense what they're gripping and how much force to use.

One team built on previous research from MIT and Harvard University in which researchers developed a soft, cone-shaped robotic gripper that collapses on objects like a Venus flytrap and can pick up items 100 times its weight. The new team improved upon that "magic ball gripper" by adding sensors that allow it to pick up items as delicate as a potato chip and to classify them so the gripper can recognize them in the future.

The team added tactile sensors made from latex "bladders," aka balloons, connected to pressure transducers. An algorithm uses feedback from those sensors to tell the gripper how much force to apply. So far, the team has tested the sensor-equipped gripper on items ranging from heavy bottles to cans, apples, a toothbrush and a bag of cookies.
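To give a rough sense of what pressure feedback like this can look like, here is a minimal sketch of a proportional control loop in Python. It is not the MIT team's algorithm, which the article does not describe in detail. The `SimulatedGripper` class, the `grip_until_target` function, the gain, the tolerance and the pressure units are all hypothetical, chosen only for illustration.

```python
class SimulatedGripper:
    """Toy stand-in for a gripper with one balloon sensor (hypothetical model).

    Pressure stays at zero until the gripper touches the object, then rises
    linearly as the gripper closes further.
    """

    def __init__(self, contact_at=0.3, stiffness=20.0):
        self.contact_at = contact_at  # actuation level at first contact (0..1)
        self.stiffness = stiffness    # pressure units per unit of actuation
        self.actuation = 0.0

    def set_actuation(self, value):
        self.actuation = value

    def read_pressure(self):
        return self.stiffness * max(0.0, self.actuation - self.contact_at)


def grip_until_target(gripper, target, gain=0.02, tolerance=0.05, max_steps=200):
    """Close the gripper until the sensed pressure is within tolerance of target.

    Each step nudges the actuation in proportion to the pressure error, so the
    gripper closes quickly when far from the target and gently when near it.
    Returns (final_actuation, final_pressure, steps_taken).
    """
    actuation = 0.0
    for step in range(max_steps):
        pressure = gripper.read_pressure()
        error = target - pressure
        if abs(error) <= tolerance:
            return actuation, pressure, step
        # Proportional update, clamped to the valid actuation range.
        actuation = min(1.0, max(0.0, actuation + gain * error))
        gripper.set_actuation(actuation)
    return actuation, gripper.read_pressure(), max_steps


# A delicate item gets a low pressure target; a heavy bottle would get a higher one.
gripper = SimulatedGripper()
actuation, pressure, steps = grip_until_target(gripper, target=2.0)
print(f"actuation={actuation:.3f} pressure={pressure:.3f} steps={steps}")
```

In this toy version, the only thing that changes between a potato chip and a heavy bottle is the target pressure. A real gripper would also have to cope with sensor noise, delays and objects that deform.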

"We hope they provide a new method of soft sensing that can be applied to a wide range of different applications in manufacturing settings, like packing and lifting," said MIT postdoc Josie Hughes, the lead author on a new paper about the work.

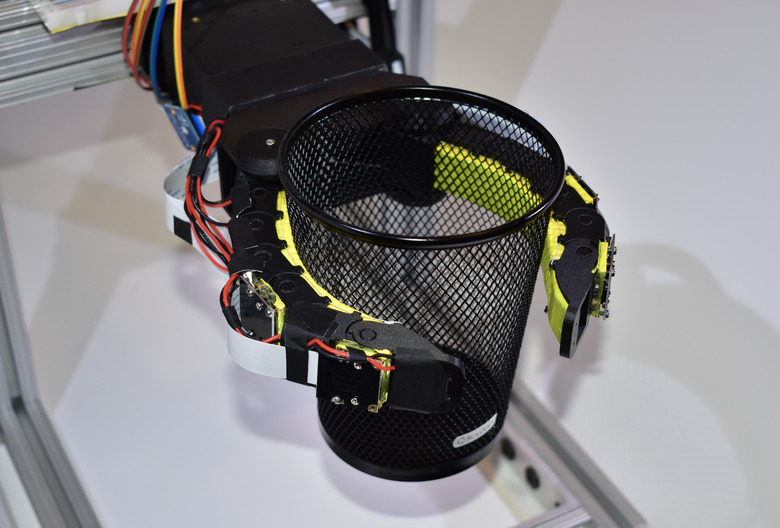

Meanwhile, a second group of MIT researchers created a soft robotic finger called "GelFlex" that uses embedded cameras and deep learning to provide tactile sensing and proprioception. The gripper resembles the kind of two-finger cup gripper you might see in a cup holder. Each finger has one camera near the fingertip and another in the middle of the finger. The cameras observe the state of the finger's front and side surfaces, and a neural network uses information from the cameras for feedback. This allows the gripper to pick up objects of various shapes.

"Our soft finger can provide high accuracy on proprioception and accurately predict grasped objects, and also withstand considerable impact without harming the interacted environment and itself," said Yu She, lead author on a new paper on GelFlex.

Both teams will present their papers at the 2020 International Conference on Robotics and Automation.