'Predictive policing' could amplify today's law enforcement issues

And we thought automated facial recognition was bad.

Law enforcement in America is facing a day of reckoning over its systemic, institutionalized racism and ongoing brutality against the people it was designed to protect. Virtually every aspect of the system is now under scrutiny, from budgeting and staffing levels to the data-driven prevention tools it deploys. A handful of local governments have already placed moratoriums on facial recognition systems in recent months and on Wednesday, Santa Cruz, California became the first city in the nation to outright ban the use of predictive policing algorithms. While it's easy to see the privacy risks that facial recognition poses, predictive policing programs have the potential to quietly erode our constitutional rights and exacerbate existing racial and economic biases in the law enforcement community.

Simply put, predictive policing technology uses algorithms to pore over massive amounts of data to predict when and where future crimes will occur. Yes, just like Minority Report. These algorithms can guesstimate the times and locations of crimes, the potential perpetrators, and even their upcoming victims based on a variety of risk factors. For example, if the system recognizes a pattern of physical altercations outside a bar every Saturday at 2am, it could suggest increasing police presence there at that time to prevent the fights from occurring.

Predictive policing in and of itself is nothing new. It's the straightforward evolution of intelligence-driven techniques, based on long established criminology principles that have been used by law enforcement for decades. The idea of forecasting crimes started back in 1931 when University of Chicago sociologist Clifford R. Shaw and Henry D. McKay, a criminologist at Chicago's Institute for Juvenile Research, published a book examining why juvenile crime persisted in specific neighborhoods.

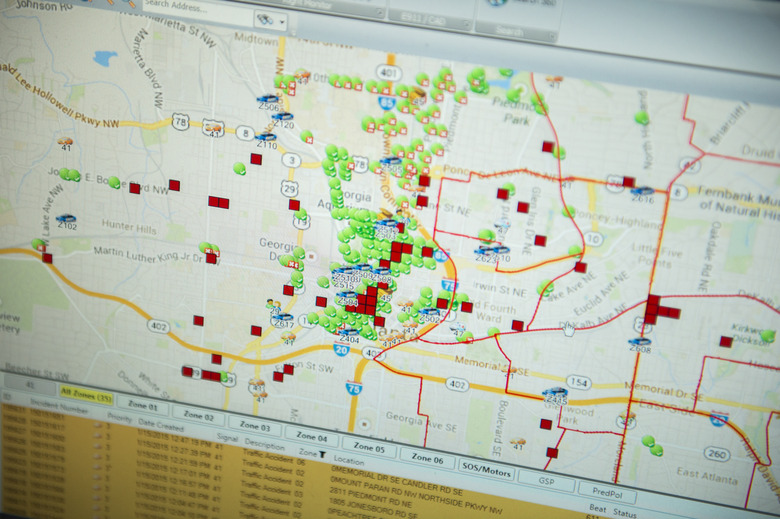

By the 1990s, organizations like the National Institute of Justice (NIJ) began leveraging geographic information system tools to map crime data and advanced mathematical models to guess where crime was most likely to occur. Today, law enforcement agencies and the private companies who develop predictive algorithms utilize cutting edge, computer driven models that can tap into massive stores of data and information. This is the era of big data policing.

There are three primary types of predictive policing, Professor Andrew Ferguson of American University, Washington College of Law, explained to Engadget. "There is place-based predictive policing, which is essentially taking past crime data using an algorithm or machine learning model to try to predict where the particular kind of crime will occur in the future," he said. There is also person-based prediction. "It's about people, about individuals who are more at risk, looking at factors in their backgrounds, their criminal history, arrests," Ferguson continued. "Those kinds of input determine whether or not someone is more likely to commit a crime." Finally, there is group-based prediction that examines, he said, "patterns or networks of individuals who are connected in a certain way, that are more likely as a group to commit a crime."

"By and large, all three types of predictive policing have generally been used to target poor people and people who are involved in sort of the lower level crimes — property crimes, burglaries, car thefts," Ferguson conceded. However, white collar criminals shouldn't celebrate just yet. The FTC may not refer to their algorithms as "predictive policing" tools but "a lot of the insider trading investigations are all done based on algorithms," he continued. They're "all based on using data to identify people who might be insider trading, people might have had tips."

One of the first agencies to adopt a predictive policing system in the modern era was the Los Angeles Police Department. In 2006, the LAPD was still using hot spot maps based on data from past crimes to determine where to allocate police presence. That year, the LAPD partnered with researchers from UCLA, led by anthropologist Jeffrey Brantingham, to develop a more predictive, rather than reactive, model. Brantingham and postdoctoral scholar George Mohler adapted seismological models for their cause. "Crime is actually very similar," Brantingham todl Science Magazine in 2016 because, like bar fights at 2am on Saturdays, earthquakes happen in fairly regular intervals along well-established faults.

This theory has proven surprisingly accurate. As Professor Andrew Ferguson of American University, Washington College of Law, notes in his 2012 study, Predictive Policing and Reasonable Suspicion, "half of the crime in Seattle over a fourteen-year period could be isolated to only 4.5 percent of city streets. Similarly, researchers in Minneapolis, Minnesota found that 3.3 percent of street addresses and intersections in Minneapolis generated 50.4 percent of all dispatched police calls for service. Researchers in Boston found that only 8 percent of street segments accounted for 66 percent of all street robberies over a twenty-eight-year period."

Brantingham and Mohler's model has since been developed into a proprietary software package, known as PredPol, which has been adopted by police departments across the country. This system reportedly looks at a narrow set of related statistics, giving additional weight to more recent events, to predict where and when crimes will occur during a given officer's shift within a 150m by 150m square. PredPol claimed in 2015 that if officers spend just 5 – 15 percent of their shifts patrolling in that box, they'll stop more criminals than if they relied on their own instincts. For this capability, departments pay anywhere from $10,000 to $150,000 annually.

Brantingham's team published their study in the Journal of the American Statistical Association in 2015. It found that the system led to "substantially lower" crime rates during the 21-month test period. "Not only did the model predict twice as much crime as trained crime analysts predicted, but it also prevented twice as much crime," Brantingham told a UCLA reporter.

"In much the same way that your video streaming service knows what movie you're going to watch tomorrow, even if your tastes have changed, our algorithm is constantly evolving and adapting to new crime data," he continued.

However not more than four years later, the PredPol system has been abandoned by numerous departments because, as Palo Alto police spokeswoman Janine De la Vega told the LA Times in 2019, "we didn't find it effective. We didn't get any value out of it. It didn't help us solve crime."

The NYPD, America's largest police force, was another early adopter of predictive policing algorithms. The department trialled systems from three firms — Azavea, KeyStats, and PredPol — before developing its own algorithm suite inhouse in 2013. As of 2017, the NYPD used algorithms to forecast shootings, burglaries, felony assaults, grand larcenies, grand larcenies of motor vehicles, and robberies.

The Chicago PD has also dabbled in predictive policing, which it calls a "heat list." In 2012, It partnered with researchers at the Illinois Institute of Technology to develop an algorithm. They based their model off of work out of Yale, which used an epidemiological model intended to track the spread of contagious diseases, and adapted it to track the spread of gun violence instead.

As of 2017, the CPD claims that their algorithm is effective. From January to July of that year, the number of shootings in the 7th District dropped 39 percent year-over-year and the number of murders dropped 33 percent while the number of murders citywide inched up 3 percent overall.

Despite those rosy self-reported statistics, predictive policing poses many of the same civil and constitutional risks that we've seen in other algorithmic law enforcement schemes like automated facial recognition. For example, PredPol's location-based recommendations don't tell officers who to look out for, only where and when. "Those predictions shouldn't have any direct impact on the Fourth Amendment freedoms of individuals who happen to be there," Ferguson said. "But because police officers are human beings and they're getting extra information about an area, it seems likely that that kind of information will prime them to see suspicion in times and places when maybe they wouldn't otherwise be suspicious."

That's a problem, in part, because what the law considers "reasonable suspicion" is very much at the discretion of officers and judges. Terry v. Ohio (1968) established that, for an officer to have "reasonable suspicion" for a stop, they must "be able to point to specific and articulable facts which, taken together with rational inferences from those facts, reasonably warrant that intrusion." That in itself is a predictive act on the part of the officer. They're taking an incomplete set of data, analyzing it internally and making a guess as to whether or not they'll find incriminating evidence if they proceed.

"Because the Fourth Amendment standard is so malleable, and it can take in any kind of totality of factors," Ferguson said, "the idea that an algorithm has helped shape the police officer's view of the neighborhood or given area is clearly a concern."

Additionally, since these algorithms are trained using data produced by the police, implicit biases held by those departments can worm their way into the output recommendations. "They're not predicting the future," William Isaac, an analyst with the Human Rights Data Analysis Group and a Ph.D. candidate at Michigan State University in East Lansing, told Science Magazine. "What they're actually predicting is where the next recorded police observations are going to occur."

As Professor Ferguson points out, these systems are only as reliable as the data they're fed. PredPol, for example, only uses calls for service for three specific crimes — burglary, vehicle break ins and grand theft auto — as its data set. Whether the police suspect a burglary has occurred or arrested someone under suspicion of burglary have no bearing on the system's recommendations. It only logs when a member of the public has reported a crime. This helps prevent biases within the police force from corrupting the recommendations' validity.

The same cannot be said for the systems developed in-house by police forces. "In person-based policing in Chicago, LA and even New York, they use arrests as part of their input for risk," Ferguson noted. "And arrests are where police are, not necessarily what people did."

"You get arrested for a lot of things that you're not convicted for," he continued. "So if you use something like arrests, you are building a system that's going to launder the bias of police data into your algorithm."

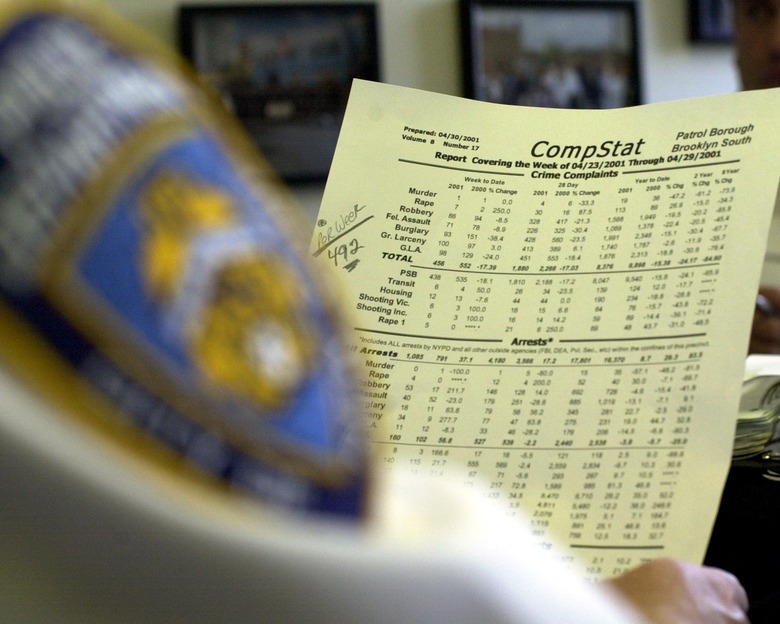

And it's not as if the police aren't already juking their stats to fit desired narratives. In 2010, Time reported that, "more than a hundred retired New York Police Department captains and higher-ranking officers said in a survey that the intense pressure to produce annual crime reductions led some supervisors and precinct commanders to manipulate crime statistics, according to two criminologists studying the department."

This survey set off the Compstat fiasco. Subsequent investigations found that precinct commanders would cruise active crime scenes persuading victims to not file reports so as to artificially reduce serious crime statistics while officers would plant drugs on suspects to artificially raise narcotics arrests — all to goose reported numbers into making it appear as if serious crime was declining in the city. Following a flurry of lawsuits, a Federal court found that the NYPD had utilized unconstitutional and racially biased policing practices for more than a decade. The court ordered the NYPD to undergo systemic reforms whose compliance would be verified by a federal court.

"It is a common fallacy that police data is objective and reflects actual criminal behavior, patterns, or other indicators of concern to public safety in a given jurisdiction," a team of researchers from New York University wrote in their 2019 study, Dirty Data, Bad Predictions. "In reality, police data reflects the practices, policies, biases, and political and financial accounting needs of a given department."

Today, predictive policing systems face an uncertain future. Citing this week's Santa Cruz ban, Ferguson notes that "this was almost a full reversal of a city that not only believed in predictive policing but was the face of predictive policing nationally. They were the ones pushing this as the innovative, next new thing in 2011, 2012, and getting a lot of positive attention from it."

"I think it says something. I think it says where we are in our trust of policing technology," he continued. Ferguson points to the recent protests in LA for police reform as having an outsized impact on the use of this technology. The LAPD has since canceled its contract with PredPol and shelved LASER, its person-based predictive system as well. Even Chicago backed off its use of the heat list earlier this year in the face of public pressure.

"The height of predictive policing has, I think passed," Ferguson said "It's now on a bit of a downturn."