Google AI learns how to play soccer with a virtual ant

DeepMind can solve some truly unusual computing problems.

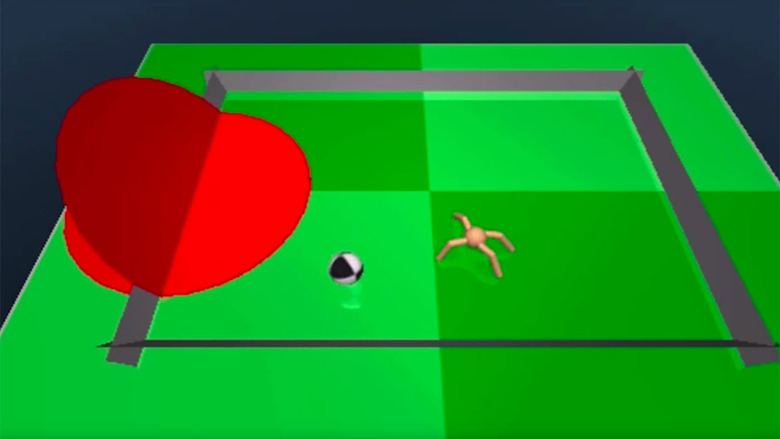

Google's DeepMind has conquered some big artificial intelligence challenges, such as defeating a Go world champion and navigating mazes through virtual sight. However, one of its accomplishments is decidedly unusual: it learned how to play soccer (aka football) with a digital ant. It looks cute, but it's really a profound test of DeepMind's asynchronous reinforcement learning approach. The AI not only has to learn how to move the ant without any prior understanding of its mechanics, but also has to kick the ball into a goal. Imagine if you had to learn how to run while playing your first-ever match. That's how complex this is.

Google doesn't explain the significance in depth, but it's quick to mention that this could be very helpful for "robotic manipulation." A legged robot could start walking (or adapt to unforgiving conditions) without receiving explicit instructions, and a robot arm could safely grab unfamiliar objects. Between this and Google's other DeepMind research, the building blocks of AI-driven robotics are slowly falling into place.