Google's mini radar can identify virtually any object

Project Soli just needs to get close to an item to determine what it is.

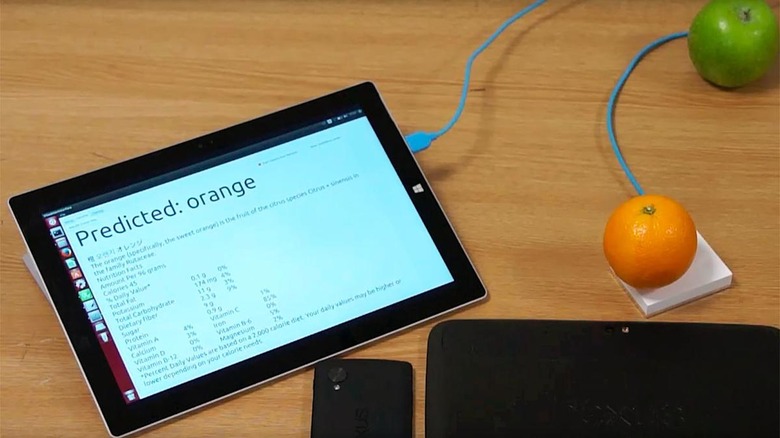

Google's Project Soli radar technology is useful for much more than controlling your smartwatch with gestures. University of St. Andrews scientists have used the Soli developer kit to create RadarCat, a device that identifies many kinds of objects just by getting close enough to them. Thanks to machine learning, it can identify not only different materials, such as air or steel, but also specific items. It'll know whether it's touching an apple or an orange, or an empty glass or one full of water, and it can tell individual body parts apart.

It doesn't take much to realize that the potential for computing breakthroughs is significant. Your phone could perform different actions depending on how and where you hold it. You might get a different interface if you're wearing gloves, for instance. A restaurant would know to provide a refill the moment your drink is empty, and blind people could identify products in a store. The technology could also be particularly useful for automatic sorting on farms and in waste facilities. The biggest obstacle is turning RadarCat from a clever concept into a practical product, and that could take a while.