Facebook swaps fake article flags for fact-checked links

The warning labels weren't stopping people from reading bogus articles.

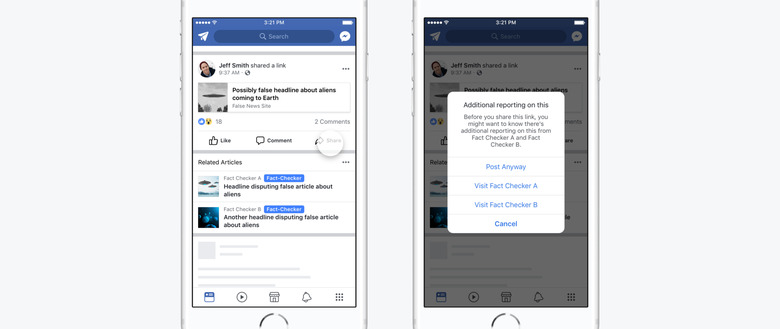

After getting dragged around the time of the general election, Facebook has spent much of this year taking steps to combat the spread of misinformation on its site. Transparency has been a staple of its mission, and so it's kept the public up to date with all the features and experiments it's juggling. One of these is the disputed flag stamped on articles identified as false, first spotted back in March. But it seems the feature isn't working as Facebook would've liked, which means it has to go. In a new post, the company says it is ditching disputed flags in favor of an improved version of its related articles feature, which it originally began testing in April.

Seeing as fake news has become a rallying cry in itself, some people just don't buy the company's label (even if it comes by way of two third-party fact checkers). As evidence, Facebook points to a 2012 academic study (co-authored by researchers from the University of Western Australia and the University of Michigan) that suggests a strong image, like a red flag, next to an article may entrench existing beliefs instead of debunking them. Why it didn't consult the study in the first place is a mystery.

Facebook boils down the feature's failure to four factors: it was overly complicated for users, it didn't always have the intended end result, it took too long to implement worldwide, and it was too stringent in its labelling. In comparison, the addition of related articles below a hoax resulted in fewer people sharing it, although click-throughs remained unchanged.

The social network has other (more successful) tools in its arsenal as well. Burying bogus items at the bottom of the News Feed typically results in the demoted info losing 80 percent of its traffic, according to Facebook. As reports have shown, fake news is big business for elaborate troll farms in Russia and parts of Europe. Butchering their reach gives the nefarious folks behind the misinformation less of a financial incentive to create it, the company adds.