OpenAI’s new system lets you train robots entirely in VR

Humans need only virtually perform a task once for the ’bot to fully learn it.

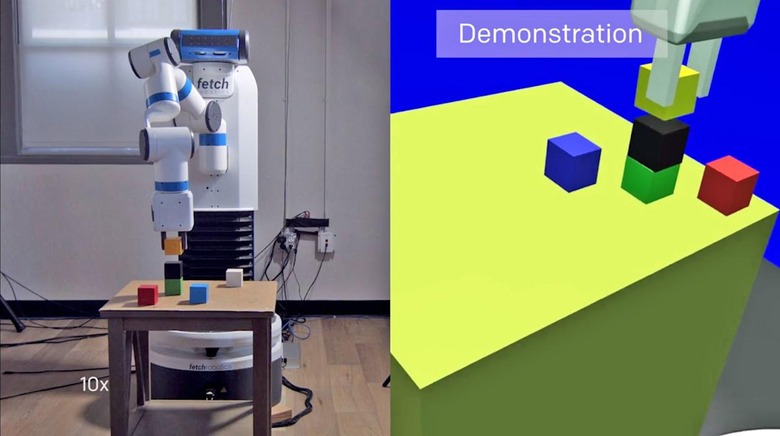

OpenAI, the artificial intelligence research group backed by Elon Musk, has introduced a program that trains robots entirely in simulation. Now it has added a new algorithm, called one-shot imitation learning, that requires a human to demonstrate a task only once in VR for a robot to learn it.

[embed=https://www.facebook.com/plugins/video.php?href=https%3A%2F%2Fwww.facebook.com%2Fopenai.research%2Fvideos%2F642411485947467%2F&show_text=0&width=560]

The system is powered by two neural networks. The first takes a camera image and determines the positions of objects relative to the robot. It was trained only on simulated images, meaning it was taught how to interact with the real world before it ever saw the real world. The second imitates the task shown by the demonstrator: it scans through the recorded demonstration and pays attention to the frames that tell it what to do next.
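To make the "paying attention to frames" idea concrete, here is a minimal sketch of soft attention over a recorded demonstration. It is not OpenAI's code: the function name `attend_to_demo`, the three-number frame embeddings, and the scaled dot-product scoring are all assumptions chosen for illustration. It only shows how a network could weight demonstration frames by how relevant they are to the robot's current state.

```python
import numpy as np

def attend_to_demo(query, demo_frames):
    """Weight each demonstration frame by its relevance to the current state.

    query:       1-D embedding of the robot's current state (hypothetical).
    demo_frames: 2-D array with one embedding per recorded frame (hypothetical).
    """
    # Scaled dot-product similarity between the current state and each frame.
    scores = demo_frames @ query / np.sqrt(query.shape[0])
    # Numerically stable softmax turns the scores into weights that sum to 1.
    weights = np.exp(scores - scores.max())
    weights /= weights.sum()
    # The context vector is a weighted blend of the frames.
    context = weights @ demo_frames
    return weights, context

# Toy demonstration: four frames with made-up 3-dimensional embeddings.
demo = np.array([
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.5, 0.5, 0.0],
])
current = np.array([0.0, 4.0, 0.0])  # made-up current-state embedding

weights, context = attend_to_demo(current, demo)
print("most relevant frame:", int(np.argmax(weights)))
```

In a real system the embeddings would be learned and the context vector would feed a policy that outputs the robot's next action; here the values are hand-picked so the second frame clearly receives the most weight.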

The system is only a prototype, but teaching robots entirely in simulation could let researchers train them for complex tasks without needing any physical props or test environments. That would let humans safely and easily approximate extreme environments like arctic waters, areas soaked in nuclear radiation, or even other planets.