Apple's new iPhones use AI 'Portrait Lighting' to improve shots

It can detect your facial contours to create more flattering or dramatic photos.

Apple's iPhone 8 and 8 Plus cameras are similar to those on the previous models, and the new iPhone X has a similar dual-camera arrangement to the 8 Plus. While the hardware hasn't changed dramatically, there are some interesting new software features, most notably one called "Portrait Lighting." Taking advantage of the camera's multi-focus feature, it can still separate you from the background and blur it out, but it now also uses AI to examine the contours of your face and "light" you in a variety of flattering or dramatic ways.

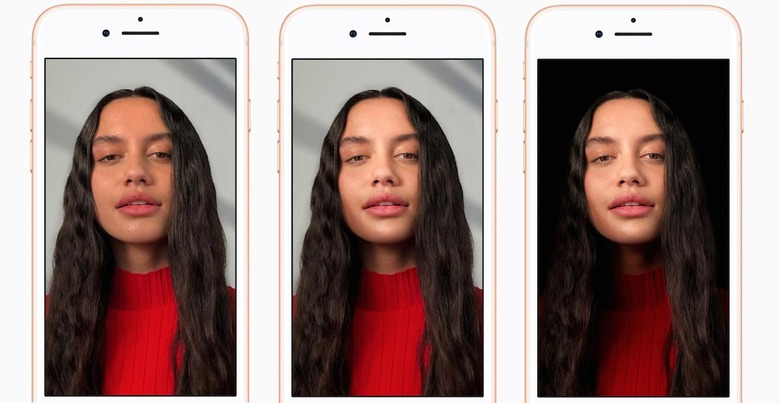

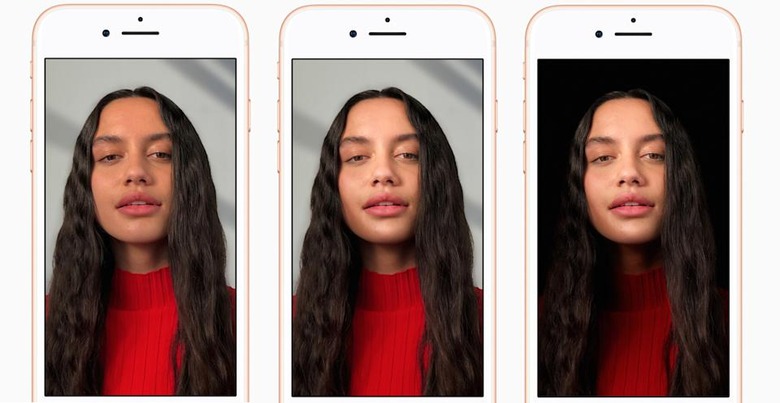

For the same shot, for instance, you can create different effects like "Natural Light," "Contour Light" and "Stage Light" (shown left to right, below). "You compose a photo, the dual cameras and the ISP sense the scene, they create a depth map, and they actually change the lighting contours over the face," said Apple marketing chief Phil Schiller. "These aren't filters, this is real-time analysis."
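Apple hasn't detailed how the effect is actually computed, but the general idea Schiller describes (use a depth map to tell the subject from the background, then treat the two differently) can be illustrated with a toy sketch. Everything here is an assumption for illustration only: the function names, the simple depth threshold, the 3x3 blur and the `background_gain` parameter are hypothetical and are not Apple's method or API.

```python
def foreground_mask(depth, threshold):
    """Mark pixels closer than `threshold` as foreground (True)."""
    return [[d < threshold for d in row] for row in depth]


def box_blur(image):
    """3x3 mean blur that averages only in-bounds neighbours (a crude stand-in for bokeh)."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w:
                        total += image[ny][nx]
                        count += 1
            out[y][x] = total / count
    return out


def portrait_effect(image, depth, threshold, background_gain=1.0):
    """Keep foreground pixels sharp; blur and scale the brightness of the rest.

    `image` and `depth` are same-sized 2D lists (grayscale values and distances).
    A `background_gain` of 0.0 blacks out the background entirely.
    """
    mask = foreground_mask(depth, threshold)
    blurred = box_blur(image)
    return [
        [image[y][x] if mask[y][x] else blurred[y][x] * background_gain
         for x in range(len(image[0]))]
        for y in range(len(image))
    ]
```

With the default gain, the background is simply blurred, roughly what Portrait mode already did. Setting `background_gain=0.0` drops the background to black while leaving the subject untouched, loosely in the spirit of a "Stage Light" look. A real system would also have to relight the face itself, which this sketch does not attempt.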

Apple had promised to shrink down its AI systems onto a chip for use with its ARKit augmented reality platform and other applications, and using it with the camera seems a smart choice. Assuming it doesn't make shots look artificial in real-world use (it looked great in the demo), it should be a handy feature.

The new camera shoots a lot quicker too, thanks to a new image signal processor that's part of the A11 Bionic chip. It improves pixel processing, captures more colors, speeds autofocus and adds a new feature called "hardware-enabled multi-band noise reduction" that can eliminate a lot of the grain you'd see in low light. "iPhone 8 takes fantastic portrait modes, and now you're going to get more detail and even a more natural bokeh," said Schiller.

Video also gets a boost, with 60fps 4K and 240fps slow-mo available at 1080p. There's also a new, third video category called "augmented reality." Since video is a key part of Apple's ARKit platform, it should help developers create even more of the wild experiments going on right now.
