Experimental drone uses AI to spot violence in crowds

Whether or not it works well in practice is another story.

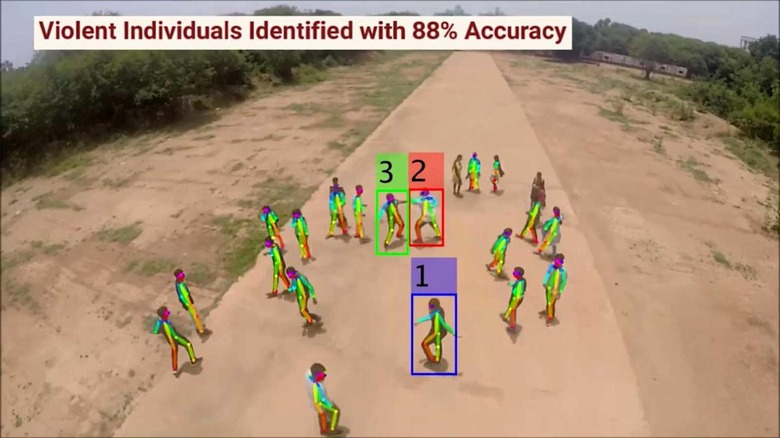

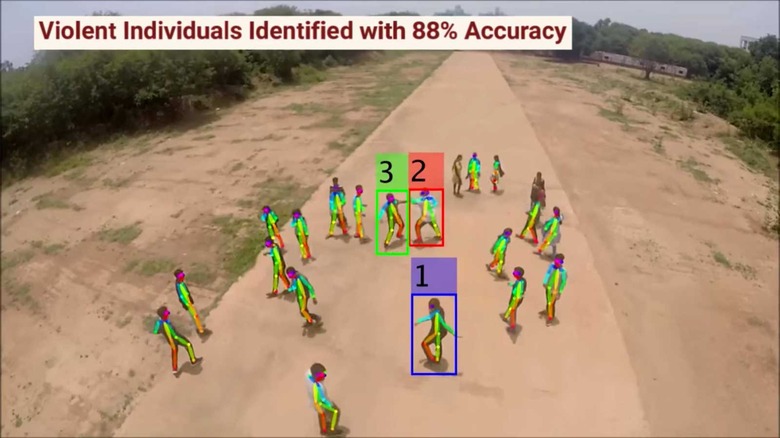

Drone-based surveillance still makes many people uncomfortable, but that isn't stopping research into more effective airborne watchdogs. Scientists have developed an experimental drone system that uses AI to detect violent actions in crowds. The team trained their machine learning algorithm to recognize a handful of typical violent motions (punching, kicking, shooting and stabbing) and flag them when they appear in a drone's camera view. The technology could theoretically detect a brawl that on-the-ground officers might miss, or pinpoint the source of a gunshot.

As The Verge warned, the technology definitely isn't ready for real-world use. The researchers used volunteers in relatively ideal conditions (open ground, generous spacing and dramatic movements). The AI is 94 percent accurate at its best, but that drops to an unacceptable 79 percent when there are ten people in the scene. As it stands, this system might struggle to find an assailant on a jam-packed street. And what if it mistakes an innocent gesture for an attack? The creators expect to fly their drone system over two festivals in India as a test, but it's not something you'd want to rely on just yet.

There's a larger problem surrounding the ethical implications. Facial recognition systems already raise questions about abuse of power and reliability, and this technology invites the same concerns. Governments may be tempted to use it as an excuse to record aerial footage of people in public spaces, and could track the gestures of political dissidents (say, people holding protest signs or flashing peace symbols). It could easily be combined with other surveillance methods to create a complete picture of a person's movements. This might only find acceptance in limited scenarios where organizations both make it clear that people are on camera and reassure them that a handshake won't lead to police at their door.