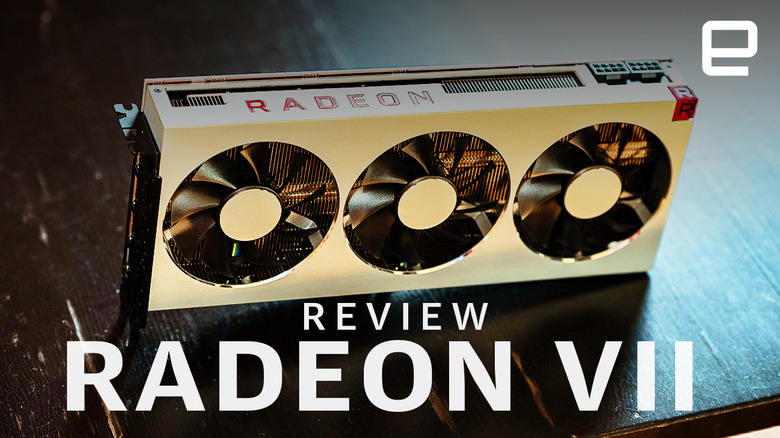

AMD Radeon VII review: Is 4K gaming enough?

AMD's new flagship graphics card is fast, but expensive.

When AMD announced it was developing new GPUs for data centers in mid-2018, it was clear they weren't intended for gaming. AMD was in a tough spot: NVIDIA was gearing up to release its RTX cards with ray-tracing and AI-powered tech that AMD couldn't compete with. The feeling was that AMD had decided to cede the high-end to NVIDIA and focus on the mid-range (where most sales are). A new high-end gaming card wasn't expected for another year at least.

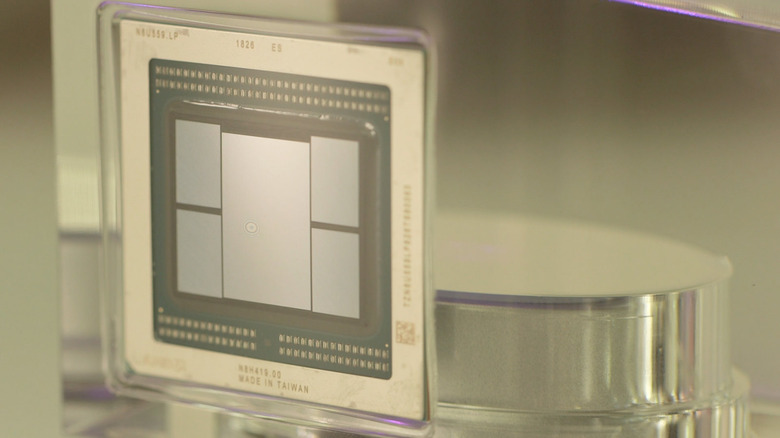

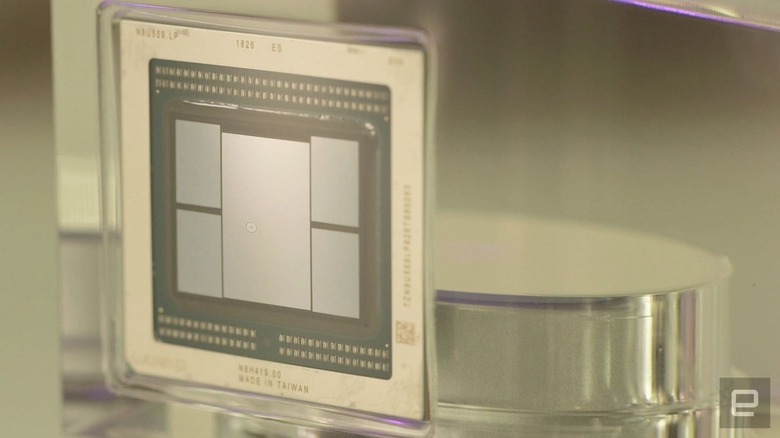

These data-center cards, the Instinct MI60 and MI50, took AMD's previous flagship gaming chip (named Vega 10) and shrank the transistors from a 14nm to a 7nm process. A smaller manufacturing process makes smaller transistors that can run faster or use less power for the same speed. When the Instinct cards were announced in November, they were a refined version of last year's gaming cards, with enterprise features like error correction and support for super-high-precision math. Take those features away from an Instinct MI50 and you have something that looks very similar to the Radeon VII.

Part of the surprise around the Radeon VII's existence is that the 7nm process is brand new, and generally new fabrication processes are messy. Yields are low, meaning lots of chips come out damaged or non-functional. The Instinct MI50 and MI60 are the first GPUs produced with this new process. The appearance of the Radeon VII suggests either that 7nm is off to a strong start, or that AMD thought getting back in the high-end graphics game was important enough to cannibalize some of its Instinct cards.

Despite its smaller transistors and refined design, the Radeon VII has a lot in common with last year's Vega 64 and 56 models. The Radeon VII features 60 compute units vs the Vega 64's... well, 64, but its clock speed is 1,400MHz, with a boost speed of 1,750MHz. This is a good deal faster than the Vega 64 at 1,247MHz. There are also improvements in the memory design. Because of the 7nm process, the GPU itself can be smaller. The Radeon VII's GPU is only 331 square millimeters, down from the 14nm Vega 64 at around 500 square millimeters (and both dwarfed by the NVIDIA RTX 2080 Ti at over 750 square millimeters).

This smaller design lets AMD cram another two memory controllers onto the Radeon VII, giving it a whopping 16GB of memory. The memory is speedy, too: AMD is using second-generation high-bandwidth memory, or HBM2. Compared to the GDDR found on most graphics cards, HBM actually runs at a lower frequency, but it can transfer much more data at a time. The four memory controllers on the Radeon VII give it a memory bandwidth of 1TB per second, the fastest we've ever seen on a GPU. This should help, especially at high resolutions where the card needs to shuffle tons of data-intensive frames around the graphics memory.
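The trade-off between frequency and bus width can be checked with a little arithmetic. The sketch below relies on figures that are not stated in this review and should be treated as assumptions: a 4,096-bit bus at 2.0Gbps per pin for the Radeon VII, a 2,048-bit bus at 1.89Gbps per pin for the Vega 64, and a generic 256-bit GDDR6 card at 14Gbps per pin for comparison. The function name is ours, for illustration only.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: pins x gigabits per second per pin / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


# Assumed specs (not taken from this review): bus width in bits, per-pin rate in Gbps.
cards = {
    "Radeon VII (HBM2)": (4096, 2.0),
    "Vega 64 (HBM2)": (2048, 1.89),
    "256-bit GDDR6 card": (256, 14.0),
}

for name, (width, rate) in cards.items():
    print(f"{name}: {memory_bandwidth_gbs(width, rate):.1f} GB/s")
```

Under those assumptions, each HBM2 pin runs far slower than a GDDR6 pin, but the much wider bus more than makes up for it, which is how the Radeon VII reaches roughly 1TB per second.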

| | Vega 64 | Radeon VII |
| --- | --- | --- |
| GPU | Vega 10 | Vega 20 |
| Base Clock | 1247 MHz | 1400 MHz |
| Boost Clock | 1546 MHz | 1750 MHz |
| Memory | 8GB HBM2 | 16GB HBM2 |
| Memory Bandwidth | 483.8GB/s | 1TB/s |
| Process | 14nm | 7nm |
| Power | 295 Watts | 300 Watts |

The Radeon VII is priced at $699, the same as NVIDIA's RTX 2080. Unfortunately, we weren't able to get our hands on a 2080 for these tests, but we can still get a good sense of how the Radeon VII should perform compared to its green rival. For our tests, we had an older NVIDIA GTX 980 Ti as well as a Vega 64 for competition.

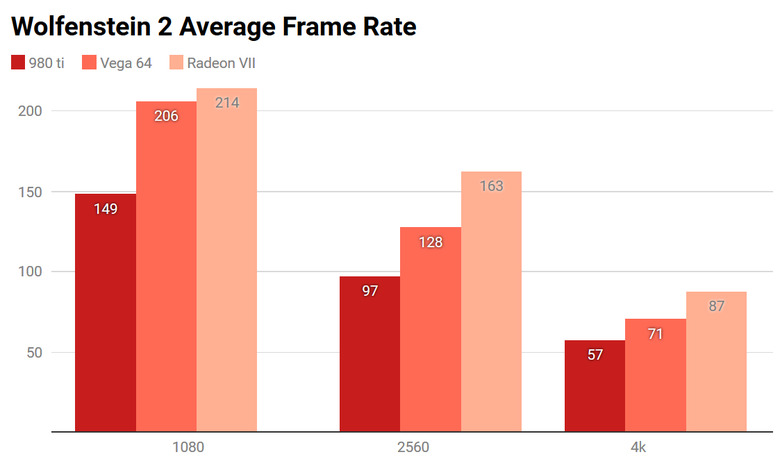

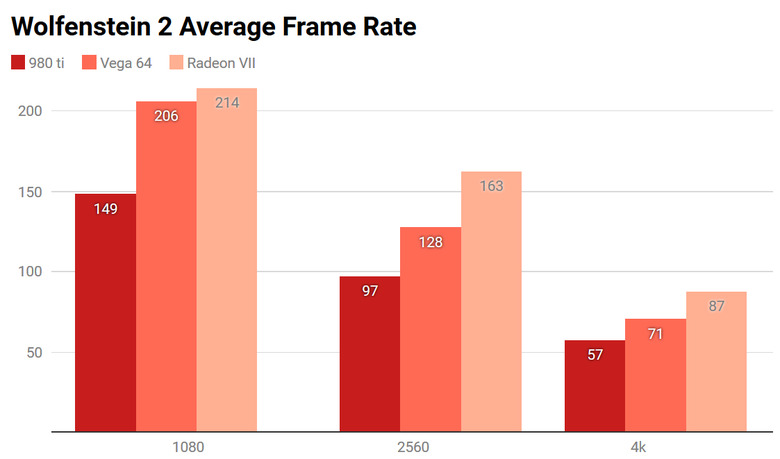

For testing, we ran the Radeon VII through a series of games and a few compute and synthetic tests. First off, we tried Wolfenstein 2: The New Colossus. This is a game built on the Vulkan API, which AMD helped develop and, unsurprisingly, AMD cards did very well here. We tested with every graphics setting at max.

Even at 1080p, the older Vega 64 cracks 200 fps, and at 4K, the Radeon VII is still hitting nearly 90 fps. Most tests of the RTX 2080 we've seen put it around 75 fps at 4K.

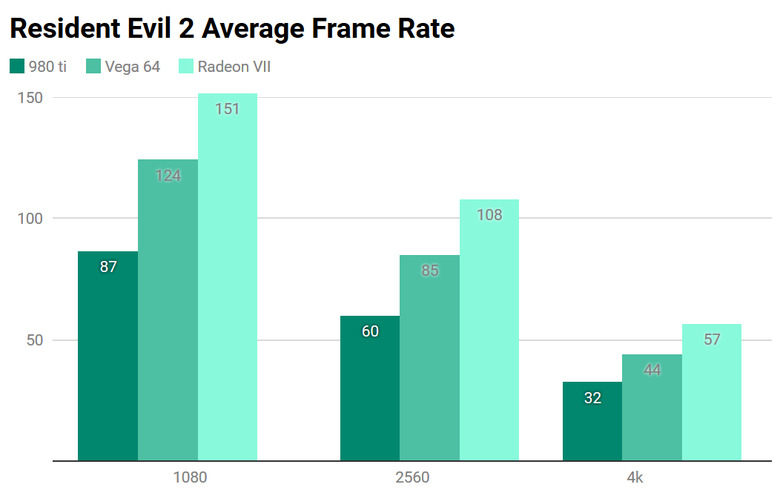

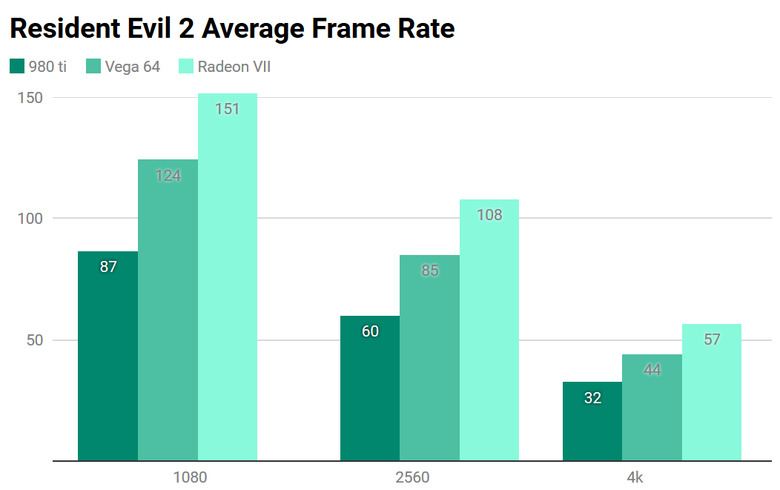

The new remake of Resident Evil 2 is quite graphically intensive and includes options to monitor how much of the GPU's memory is in use. Our poor 6GB 980 Ti maxed out pretty quickly, but the 16GB of memory on the Radeon VII would let you turn everything up to max if you want. Here the Radeon VII stuttered a bit more but managed nearly 60 fps at 4K, and with a couple of settings tweaks, you could get a locked 60 fps. You'd expect a 2080 to come in a few frames ahead, but it'll still be in the same range.

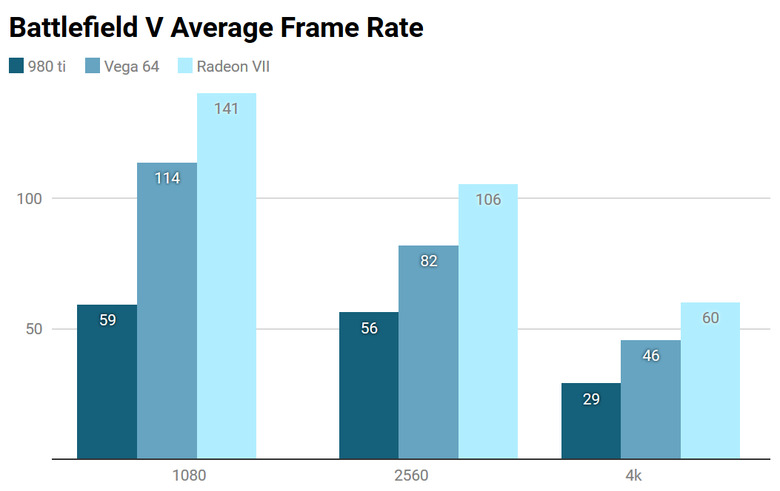

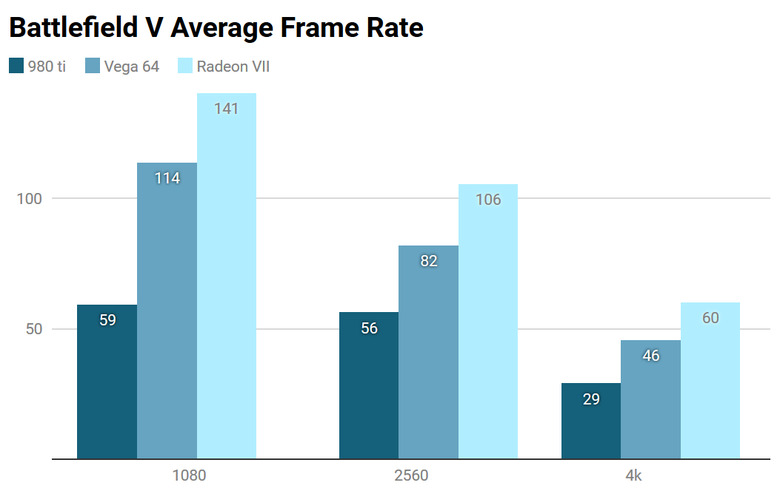

Finally, we tested Battlefield V. EA's latest shooter is currently the only game that supports NVIDIA's RTX ray-tracing technology, which adds super-realistic lighting to the game. AMD doesn't currently have anything like this, but if you can live with a less-reflective game world, it still runs pretty well.

Even at 4K and ultra settings, the Radeon VII puts out a totally playable 60 fps, though once again we'd expect the RTX 2080 to be 10-20 percent ahead of it.

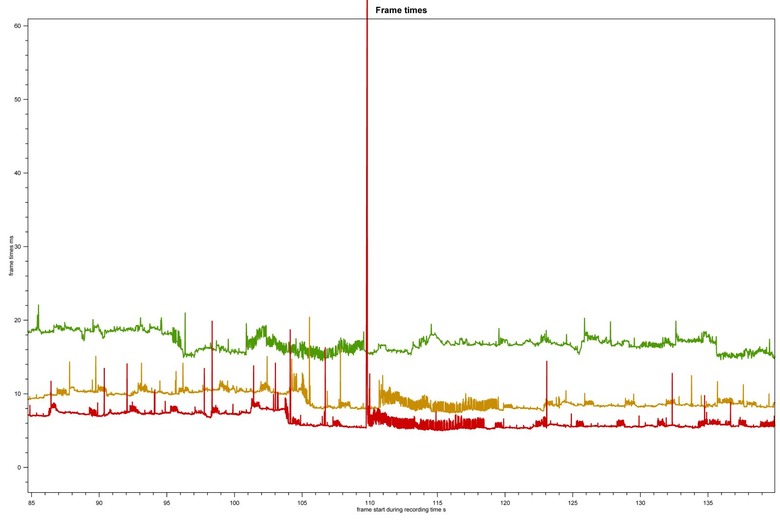

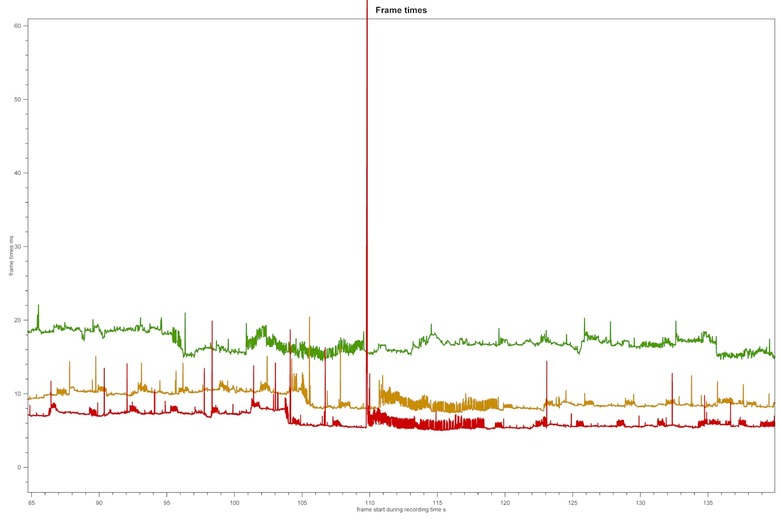

Aside from raw frame rate, frame-time variance is the other measurement that best indicates a smooth gameplay experience. Frames-per-second is an average and doesn't account for the possibility that the frames may be arriving unevenly. To give a hypothetical worst-case scenario, you could have 59 frames arrive within a millisecond, and then have a single frame arrive 998 milliseconds later. This would still average out to 60 frames a second, but you'd experience a moment where the action didn't move for almost an entire second. The reality is never quite this bad, but a high frame variance can lead to stuttering or uneven playback, even with a high frame rate.
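To make the hypothetical concrete, here is a minimal Python sketch. The frame times are invented to match the worst case described above, and the helper names are ours; this is not how any particular benchmarking tool computes its numbers.

```python
from statistics import pstdev


def average_fps(frame_times_ms):
    """Average frame rate: frames rendered divided by total elapsed seconds."""
    return len(frame_times_ms) / (sum(frame_times_ms) / 1000)


def summarize(frame_times_ms):
    """Return (average fps, worst frame time in ms, standard deviation in ms)."""
    return average_fps(frame_times_ms), max(frame_times_ms), pstdev(frame_times_ms)


# Hypothetical data: 60 evenly paced frames over one second.
smooth = [1000 / 60] * 60

# Hypothetical worst case from the text: 59 frames inside one millisecond,
# then a single frame that takes 998 milliseconds to arrive.
stutter = [1 / 59] * 59 + [998.0]

for label, times in (("smooth", smooth), ("stutter", stutter)):
    fps, worst, spread = summarize(times)
    print(f"{label}: {fps:.1f} fps average, worst frame {worst:.1f} ms, std dev {spread:.1f} ms")
```

Both runs average roughly 60 fps, but only the worst frame time and the spread reveal the near one-second freeze in the second run.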

The plot above shows Battlefield V, which was the most uneven of the three games. The flatter the line, the smoother the game should feel. The vertical spikes show where the game was slow to produce a frame or stuttered. Some people claim to be able to notice frames that are as little as 15ms slower than the average, but AMD has claimed in the past that most players won't notice any variance as long as frame times stay under 30ms. In our other two games, the lines stayed well under 30ms, regardless of the resolution.
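Both thresholds are easy to apply to a frame-time log. The following sketch uses made-up frame times and hypothetical helper names; the 30ms and 15ms figures are simply the ones quoted in this review, not values from any official tool.

```python
from statistics import mean

ABSOLUTE_LIMIT_MS = 30.0  # AMD's claimed threshold, as quoted in this review
RELATIVE_LIMIT_MS = 15.0  # margin above average that some players say they notice


def slow_frames(frame_times_ms, limit_ms=ABSOLUTE_LIMIT_MS):
    """Indices of frames that took longer than a fixed limit."""
    return [i for i, t in enumerate(frame_times_ms) if t > limit_ms]


def frames_above_average(frame_times_ms, margin_ms=RELATIVE_LIMIT_MS):
    """Indices of frames slower than the run's average by more than margin_ms."""
    avg = mean(frame_times_ms)
    return [i for i, t in enumerate(frame_times_ms) if t - avg > margin_ms]


# Made-up frame times in milliseconds, with two visible spikes.
sample = [16.0, 17.0, 16.0, 34.0, 16.0, 17.0, 45.0, 16.0]

print("over 30 ms:", slow_frames(sample))
print("15 ms above average:", frames_above_average(sample))
```

In this sample the 34ms frame breaks the fixed limit without sitting 15ms above the average, because the spikes themselves pull the average up. The two rules can disagree, so it is worth checking both.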

Beyond gaming, AMD is making a big deal about the Radeon VII's ability to speed up content creation. While most video editing and animation software is still heavily CPU-dependent, many apps are adding GPU-accelerated features, including previewing, resizing, effects and exporting. A general rule of thumb is that the more you alter your footage, the more GPU power you'll need, and the higher the resolution, the more graphics memory you'll want.

We put together a series of tests for both Premiere Pro and DaVinci Resolve, exporting 8K and 4K footage into HD, with and without GPU-accelerated effects added. It's not worth showing the results, though, because the difference between the various cards was negligible. Using no GPU acceleration noticeably slowed down the exports, but the difference between our older 980 Ti and the Radeon VII was only a few seconds. While a good GPU can help with previewing your footage, in most cases, for video editing, a faster processor is going to be more noticeable than a GPU upgrade. Still, there may be some content creation apps out there that can take advantage of all of the Radeon VII's HBM2 memory, and outside of a $2,500 workstation card, the VII is the only way to get 16GB of high-speed graphics memory.

The Radeon VII is undeniably a speedy card, but at $700, it needs to be faster than the RTX 2080, and it doesn't seem like it always is. In addition to being fast, the 2080 supports DLSS and ray tracing, NVIDIA's proprietary graphics technologies, which are impressive — if you can use them. These processing options can boost frame rates and graphical fidelity, but outside of ray tracing in Battlefield V and DLSS anti-aliasing in Final Fantasy XV, there aren't any games that currently support them.

That might be about to change, though, with a number of RTX-enhanced games due in the next few months, including anticipated blockbusters like Anthem and Metro Exodus. If history is any indication, AMD will respond with its own version of these technologies in time, but for now, if you're going to spend $700 on a graphics card, you might want to take advantage of RTX and the new rendering options it brings to the table.

It'd be a different story if the Radeon VII were consistently faster than the 2080. Ray tracing and DLSS may not end up becoming widespread, and if you don't care about RTX technology, then there's nothing wrong with the Radeon VII. Future drivers may improve performance, and if the price drops, it could be a good choice. Either way, unless you need the utmost performance, $700 is a lot to spend on a graphics card, and we'd recommend you wait a few months. There are indications that NVIDIA's RTX cards haven't been selling well, and both companies are dealing with excess inventory, a good sign prices might be due to come down.

There's also the matter of AMD's upcoming Navi cards. The rumors are hard to pin down, but Navi has been developed partly to serve the next generation of game consoles, and we expect it will be a serious revision of AMD's graphics architecture. The Radeon VII shows that AMD is improving its manufacturing, but it feels a bit like a first effort. Let's see what they make next.