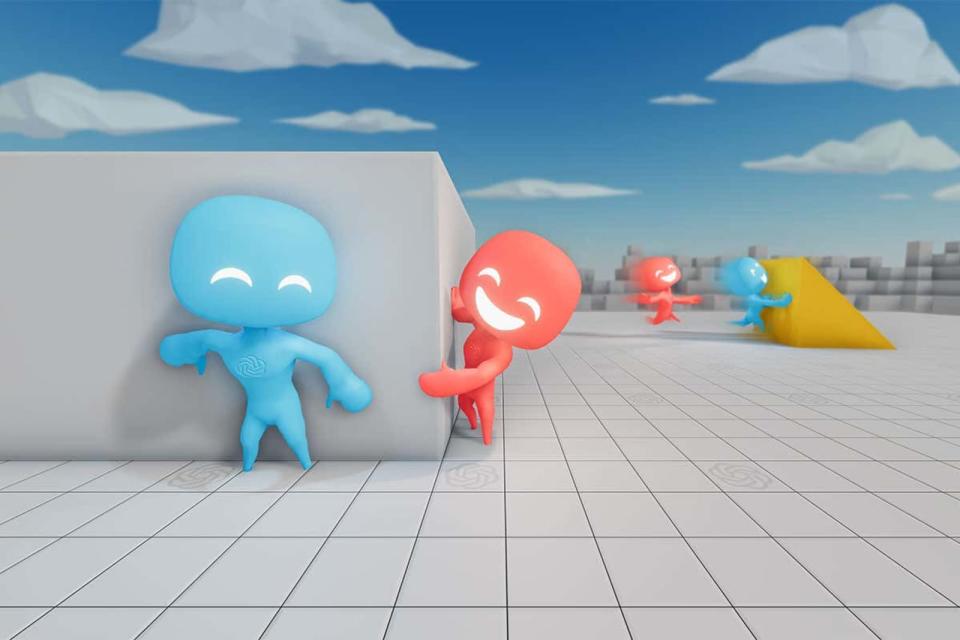

OpenAI experiment proves that even bots cheat at hide-and-seek

The experiment shows how AI can become more sophisticated through competition.

Can artificial intelligence evolve and become more sophisticated when put in a competitive world, much as life on Earth evolved through competition and natural selection? That's a question researchers at OpenAI have been trying to answer through their experiments, including the most recent one, which pitted AI agents against each other in nearly 500 million rounds of hide-and-seek. They found that the AI agents, or bots, came up with several different strategies as they played, developing new ones to counter the techniques the other team devised.

At first, the hiders and the seekers simply ran around the environment. But after 25 million games, the hiders learned how to use boxes to block exits and barricade themselves inside rooms. They also learned how to work together, passing boxes to one another to block the exits quickly. After 75 million games, the seekers learned how to reach the hiders inside those forts by moving ramps against walls and using them to climb over obstacles. After around 85 million games, though, the hiders learned to take the ramp inside the fort with them before blocking the exits, leaving the seekers with no tool to use.

As OpenAI's Bowen Baker said:

"Once one team learns a new strategy, it creates this pressure for the other team to adapt. It has this really interesting analogue to how humans evolved on Earth, where you had constant competition between organisms."

The agents' development didn't stop there, either. They eventually learned how to exploit glitches in their environment, such as getting rid of ramps for good by shoving them through walls at a certain angle. Baker said this suggests that artificial intelligence could find solutions to complex problems that we might not think of ourselves. "Maybe they'll even be able to solve problems that humans don't yet know how to," he explained.