LlamaCon 2025 live: Updates from Meta's first generative AI developer conference keynote

Today's event features a keynote address and a conversation between Mark Zuckerberg and Microsoft CEO Satya Nadella.

After a couple years of having its open-source Llama AI model be just a part of its Connect conferences, Meta is breaking things out and hosting an entirely generative AI-focused developer conference called LlamaCon on April 29. The event is streaming online, and you'll be able to watch along live on the Meta for Developers Facebook page.

LlamaCon kicks off today at 1:15 PM ET / 10:15 AM PT with a keynote address from Meta's Chief Product Officer Chris Cox, Vice President of AI Manohar Paluri and research scientist Angela Fan. The keynote is supposed to cover developments in the company's open-source AI community, "the latest on the Llama collection of models and tools" and offer a glimpse at yet-to-be released AI features.

The keynote address will be followed by a conversation at 10:45AM PT / 1:45PM ET between Meta CEO Mark Zuckerberg and Databricks CEO Ali Ghodsi on "building AI-powered applications," followed by a chat at 4PM PT / 7PM ET about "the latest trends in AI" between Zuckerberg and Microsoft CEO Satya Nadella. It doesn't seem like either conversation will be used to break news, but Microsoft and Meta have collaborated before, so anything is possible.

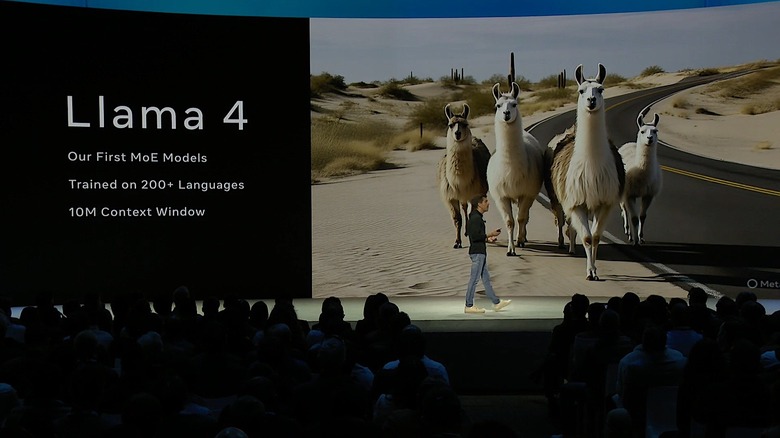

Meta hasn't traditionally waited for a conference to launch updates to Meta AI or the Llama model. The company introduced its new Llama 4 family of models, which excel at image understanding and document parsing, on a Saturday in early April. It's not clear what new models or products the company could have saved for LlamaCon.

We'll be liveblogging the keynote presentation today, along with some of the subsequent interviews and sessions between Zuckerberg and his guests. Stay tuned and refresh this article at about 10AM ET today, when we'll kick off the live updates.


-

That's it for our liveblog today everyone, thank you for reading. Thanks so much too, to Karissa and Igor for working so hard on such a long day, and Devindra (and everyone else on the team) for the help. We'll see you lovely folks again on another liveblog.

-

I have boarded the LlamaCon shuttle and am finally headed home. Thanks to everyone who joined us today!

-

As we wrap, we got so many Little Llama teases and yet no concrete news on the new model.

-

Sounds like we're wrapping up here. Zuckerberg asks Nadella what he's looking forward to and Satya has a very Satya answer: software's ability to solve problems.

-

"The world needs a new factor of production to meet new challenges," Nadella says of the promise of AI, adding how it took 50 years for industries to adapt to the advent of electricity. "We're all investing [in AI] like it won't take 50 years," Zuck responds, laughing.

-

We're getting a bit philosophical now. Nadella is saying that lines between apps, websites and documents are starting to blur, thanks to AI. Zuck breezes over his point: "Interesting, makes sense."

-

As the conversation turns to agentic systems, Zuck says he doesn't know "off the top of his head" how much of Meta's code is being written by AI. For context, late last year Google CEO Sundar Pichai said a quarter of the search giant's new code was AI generated.

-

Nadella deftly dodges the topic of Microsoft's relationship with OpenAI by looking back to the company's history and his own history with open source projects.

-

Zuckerberg mentions Microsoft's partnership with OpenAI but points out that the company has also tried to support open-source models. Nadella says both closed and open-source models are needed: "I'm not dogmatic about it," he says.

-

Wow, Zuck is laying it on thick. He referred to Microsoft as the "greatest technology company" and said Nadella has been a solid ally in the open-source space. Nadella jokes that Zuckerberg once lectured him about Bing not being social, which gets a big laugh from the audience.

-

Maybe the AI can't count?

-

In case anyone is wondering, 10 minutes ago they told us it would start in 10 minutes. But they just now gave us a 5 minute warning (and a countdown) so hopefully we're *actually* starting soon.

-

I honestly don't know what to expect. My first instinct is to say "no." On the other hand, this is an odd matchup to say the least. As I mentioned earlier, we typically think of Meta and Microsoft as competitors in the AI space, and I can't think of a time when we've seen Zuck and Satya share a stage before so it definitely feels like they want to signal... something. I'm just not sure what.

-

Honestly shocked there still appears to be a sizable audience in front of that stage. I'm chowing down on some Korean stew at home. Karissa — do we expect Zuckerberg and Nadella to say anything of note?

-

They seem to be changing the stage over for a fireside, so hopefully we will be starting soon.

No word on how late we're running, at the moment.

-

If you are all still with us at this point, welcome back and thanks for joining us this late in the day! It's 7pm here in the New York area. Definitely a choice for Meta to host this session at 4pm where they are.

-

Well, the last developer session just wrapped up and they announced a "networking break," so I guess we will be waiting at least a few more minutes for Zuckerberg and Nadella.

-

I'm back in the main keynote area, where the AC is apparently set to arctic. The prior session is still happening, so unclear if Zuckerberg's fireside will be starting on time.

-

I've certainly never had an ice cream taco and I've attended many Google, Apple, Amazon and Microsoft events (not to mention the smaller tech companies too). So kudos, Meta? And no, I didn't mean to brag :)

-

Excellent idea, Cherlynn. These were definitely above average, I'd say. Also, my first time getting an ice cream taco at a tech event.

-

Hi, I'm back just to campaign for us to have a review section dedicated to the Snacks of Tech Events. (SoTE?) How would you rate these, Karissa?

-

As we wait for Zuckerberg to return to the LlamaCon stage with Microsoft CEO Satya Nadella, I have to say I'm very curious to hear what they discuss. Microsoft is a major backer of OpenAI, one of Meta's biggest competitors on the AI front, so it was somewhat surprising to see Nadella on the agenda. Interestingly, The Wall Street Journal published a story just yesterday reporting that Nadella and OpenAI CEO Sam Altman have been "drifting apart" of late. The report notes several areas of tension between the two, including disagreements over OpenAI's access to Microsoft's compute resources. Given those reported tensions, I'll be paying extra close attention to Nadella and Zuckerberg's interactions.

-

One thing I'll say about Meta: they always keep visitors well-stocked when it comes to snacks. The afternoon break featured a soft pretzel bar (with a variety of dipping sauces to choose from) and mini ice cream tacos in four flavors.

Meta understands the best way to keep a crowd going: sugar and carbs.

-

In another bit of interesting timing, Meta is reporting earnings tomorrow for Q1 2025. Expect Zuck to talk more about all things Meta AI and Llama. (He's also likely to get some questions about what's happening over at Reality Labs, which was just hit with another round of layoffs.)

-

A lot of today's updates have been pretty far in the weeds, but today is also highlighting how ubiquitous Llama already is. Earlier, we heard some examples from a Spotify engineer about how the company uses Meta's models for features like AI playlists and its AI DJ. Karim Liman-Tinguiri, senior staff machine learning engineer at Spotify, explained that using a fine-tuned version of Llama helps the company keep costs and latency down. It also allows them to continually update their recommendations for users. For example, he said that if you create an AI playlist, like "jazz music for a roadtrip," Spotify tracks how you interact with those songs and then feeds that data back into its model for better personalization in the future. (Spotify, by the way, didn't always use Meta's AI models. When AI DJ first launched in 2023, the company used models from OpenAI, but apparently has since switched over to Llama.)

-

I've been covering Meta for more than a decade, and been to many, many events they've hosted over the years. One thing that's stood out to me today is how low-key LlamaCon is compared to other big events like Connect (or F8 back in the day). LlamaCon is smaller than either of those ever were, but it also has a much more relaxed vibe overall. There are just a handful of sessions for developers this afternoon, and lots of space for them to kick back and relax. Of course, Meta has really big ambitions when it comes to AI and Llama, which does make me wonder if I'll be attending a much bigger LlamaCon at some point in the future.

Meta's lighting game is on-point.

-

Meta also made some other announcements at its inaugural LlamaCon, and you can read the details in the company's own words in the blog post it published today.

-

I'm in a session with Meta Director of Product, Dom Divakaruni, and he dropped another hint about the lightweight Llama 4 model Zuckerberg teased earlier today (the one he called "Little Llama"). Divakaruni said there would be a successor to the popular lightweight Llama 3 model coming "soon."

-

Meta has a plan to bring AI to WhatsApp chats without breaking privacy

(Photo Illustration by Thomas Fuller/SOPA Images/LightRocket via Getty Images)

One piece of news that didn't get mentioned during the keynote today was something coming to WhatsApp called "Private Processing." According to Meta, this is an "optional capability" that will let you use AI tools like summarizing messages while still keeping your conversations private.

Karissa writes:

"Buried in its LlamaCon updates, the company shared that it's working on something called "Private Processing," which will allow users to take advantage of generative AI capabilities within WhatsApp without eroding its privacy features.

WhatsApp, of course, is known for its strong privacy protections and end-to-end encryption. That would seem incompatible with cloud-based AI features like Meta AI. But Private Processing will essentially allow Meta to do both."

Read more: Meta's "Private Processing" could bring AI to WhatsApp chats without breaking privacy

-

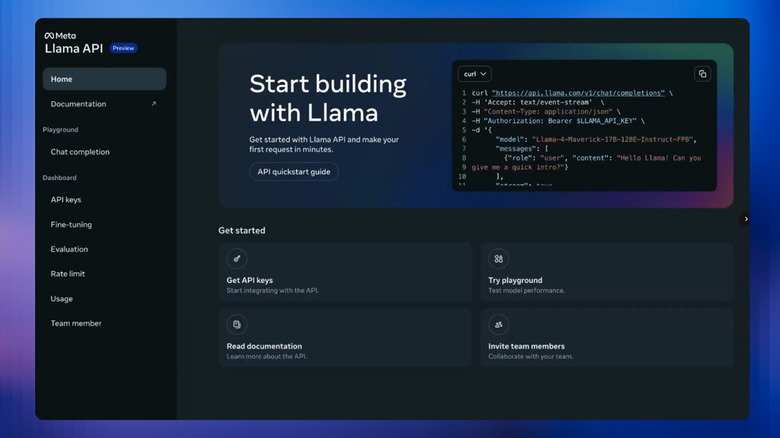

Meta is making its Llama API available for preview today

Llama API screenshot

During the keynote, Meta announced the new Llama API would be available as a limited free preview from today. It's meant to give developers a way to experiment with Meta's AI models.

Per Igor:

"The initial release of the Llama API includes tools devs can use to fine-tune and evaluate their apps.

Additionally, Meta notes it won't use user prompts and model responses to train its own models. "When you're ready, the models you build on the Llama API are yours to take with you wherever you want to host them, and we don't keep them locked on our servers," the company said. Meta expects to roll out the tool to more users in coming weeks and months."

Read more: Meta is making it easier to use Llama models for app development

-

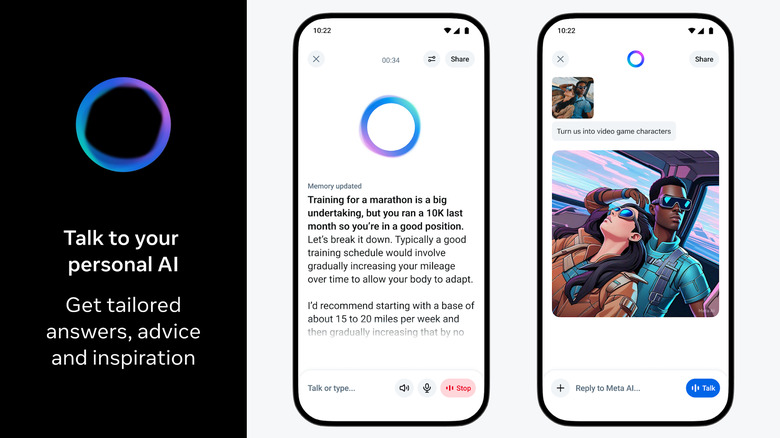

Meta's ChatGPT competitor includes conversational voice chat and a social feed

Three screens showing the Meta AI app.

In case you missed it, Meta did share some news during LlamaCon. Actually, it made some announcements right before the LlamaCon keynote started today. The company unveiled the Meta AI app this morning, calling it an "assistant that gets to know your preferences, remembers context and is personalized to you." It has already taken over as the companion app for Meta's AI glasses and includes a Discover feed where people can share how they're using the assistant.

According to our Will Shanklin:

"For users in the US and Canada, Meta AI can personalize its answers based on data you've shared with Meta products. This includes info like your social profile and content you like or engage with. The company says linking your Facebook and Instagram accounts to the same Meta AI account will provide "an even stronger personalized experience." If you don't want that, this might be a good time to check your privacy settings."

Read more: The new Meta AI app is a ChatGPT competitor

-

I hate to bring us back down this rabbit hole but my reverse image search confirmed it is definitely not an Omega Seamaster Planet Ocean nor is it any number of Breitling, Citizen or Blancpain models that look similar. The most expensive of these I found was $12,000 (pre-owned), though. I will say my first thought was that it might be a Louis Vuitton Tambour Horizon. There's also a Tambour Street Diver model that looks very similar.

-

Oh we don't talk about the dot-com crash when we're in another bubble, Karissa.

-

Alright, that's it for now. We're breaking for lunch, but will be back with more updates from LlamaCon, including another fireside with Microsoft CEO Satya Nadella to close things out.

-

A (probably inadvertently) amusing moment just now. Ghodsi is talking about how exciting things are in the AI world right now, and compares it to the early days of the internet around the dot com boom in 2000. He says we're at a similar point now in AI. What he doesn't mention, though, is that there was also a major crash in 2000 when a lot of early internet businesses went under!

-

OK... maybe I underestimated how much he spends on watches. I do not, for the tape, make this much money per year. And yes, he's a true everyman.

-

Ah yes, that makes sense Aaron. A man of the people!

-

Since we're in a bit of a lull, jumping in to contribute that Zuck has an obscene and well-documented (among the fashion blogs, at least) collection of Swiss watches. He seems to spend roughly my annual salary on a new watch each week.

-

This could have been a podcast, Zuck.

-

Zuck keeps dancing around reasoning models. Meta has yet to release their own chain-of-thought model. It doesn't seem like the company will announce anything new on that front today, though Ghodsi just said "We'd love to see it. Nudge, nudge."

-

This conversation is trending like it will be longer than the actual keynote. It's also ... a little dry. Zuckerberg and Ghodsi are obviously chummy, but we're not getting a ton of new information here (Zuckerberg's accessories notwithstanding.)

-

I did my best "zoom and enhance" and my best guess is that Zuckerberg is wearing a "dumb" watch. If any aficionados are reading this, let us know what you think it might be! There's at least one sub-dial that I can make out. Yep, I'm here for the most important reporting.

-

Interesting, they are now discussing DeepSeek. Zuckerberg notes that developers are able to "mix and match" models, while Ghodsi notes that Databricks saw a lot of people using Llama on top of DeepSeek.

-

For what it's worth, even the viewers commenting on the YouTube livestream are wondering if Zuckerberg is going to announce any actual new models here.

-

Mark Zuckerberg and Databricks CEO Ali Ghodsi.

I unfortunately don't have a great angle here, but you can see a bright orange band.

-

I need Llama AI to analyze and detect what massive watch Zuck is wearing right now. Karissa points out he wouldn't wear an Apple Watch, so...

-

Not much to say here other than the fact that Zuck teased the company is working on a "Little Llama" model.

-

Zuckerberg and Ghodsi are talking about how the smaller Llama models have been the most popular among developers. Zuck mentions there is a smaller Llama 4 model in the works that is apparently known as "Little Llama" internally. No word on when that might drop.

-

Databricks' CEO is talking about how great it is that Llama is open-source, giving some examples of enterprise uses that wouldn't be possible otherwise. He also says that Llama helps them keep prices down for customers.

-

Databricks, for the uninitiated, provides software tooling companies can use to build their own machine learning applications.

-

Rate those fits!

Meta CEO Mark Zuckerberg and Databricks CEO Ali Ghodsi.

-

Zuckerberg is wearing a baggy white polo and what appears to be a pair of Ray-Ban Meta smart glasses (no Roman Empire-inspired fashion today).

-

Alright, that's a wrap on the keynote. I have to say, I'm not used to a short tech keynote, but I'm not mad about it either! I think it clocked in at just about 30 minutes? Next up, Mark Zuckerberg will come onstage with Databricks CEO Ali Ghodsi.

-

We have just a short break before Zuck and Databricks CEO Ali Ghodsi take the stage to talk about a new partnership.

-

Cox is back onstage after that Llama API demo and diving into some of Meta's recent AI work. He's running through research that powers creative tools within Meta's new Edits app, but much of what he's describing could translate to other video apps as well.

-

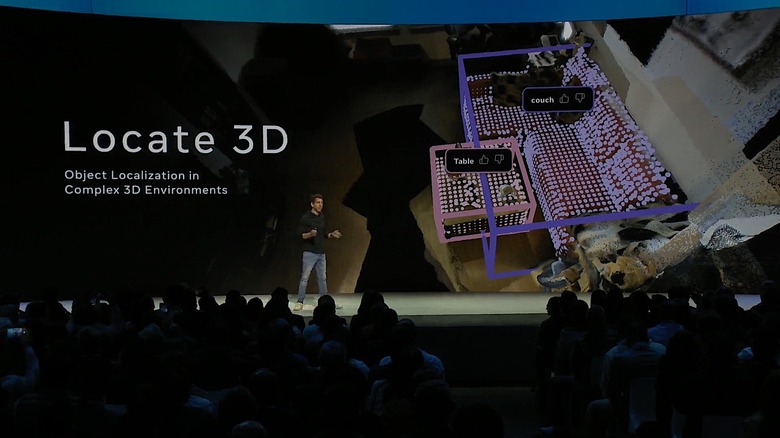

Meta CPO Chris Cox shows off Locate 3D, one of the company's AI-powered solutions for detecting objects in 3D environments.

-

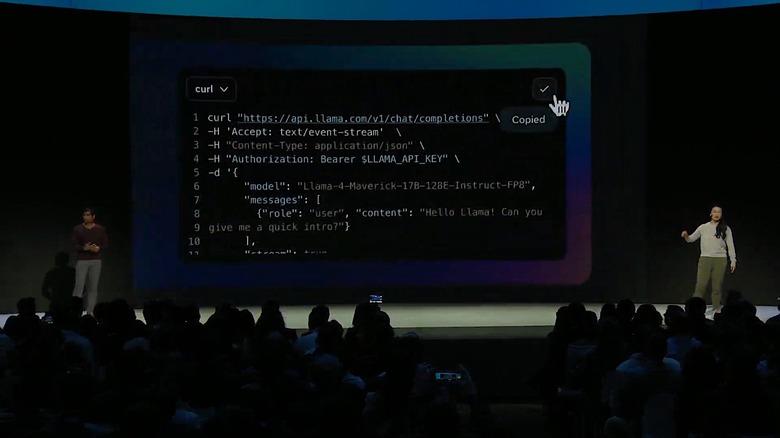

Main takeaway here is that Meta is giving developers a lot of freedom to decide how they want to use its models. Most notably, developers who use Llama API don't need to host their applications on the company's servers.

-

The folks speaking are Vice President of AI Manohar Paluri and research scientist Angela Fan. Here they're showing off the basics of Llama's API.

Meta Llama API

-

Meta is making it easier to use Llama models for app development

Llama API screenshot

Meta is releasing a new tool it hopes will encourage developers to use its family of Llama models for their next project. At its inaugural LlamaCon event in Menlo Park on Tuesday, the company announced the Llama API. Available as a limited free preview starting today, the tool gives developers a place to experiment with Meta's AI models, including the recently released Llama 4 Scout and Maverick systems. It also makes it easy to create new API keys, which devs can use for authentication purposes.

Read more: Meta is making it easier to use Llama models for app development

-

For those who want to read more about Llama API, I just published a story about it.

-

Cox just teased the future "dot" releases for Llama 4, saying they will add even more functionality for developers. No details on when we can expect those to drop, though.

-

Farmer.Chat is an example of an AI application we don't see nearly often enough. It's nice to see a group of developers use the power of machine learning to assist those who don't typically benefit from these types of technologies.

-

Yah that's a good point Karissa, and I think it's a problem all of the GenAI tools face. Microsoft seems desperate to put Copilot AI everywhere, but when I ask normal users about it they have no clue why they would use it.

-

We get it, you like llamas.

Llama 4 models

-

Cox just made an interesting point: A lot of people don't really know what to do with Meta AI or aren't sure what it can actually do. He says that's why Meta created a somewhat social "discover" feed for the Meta AI app, but I think it also underscores a bigger issue for Meta: a lot of its users just aren't used to the idea of using a Meta app as an assistant.

-

I'll note again a lot of what Cox is showing off here with the Meta AI app is already present in competing chatbots. OpenAI's Sora, for example, includes a discovery feed that allows users to see the prompts people used to generate their videos.

-

Igor, you would be surprised!

-

A comparison of Llama vs other AI solutions

-

Hmm. I would hope people could remember their partner's birthday without the need for an AI assistant to remind them of it.

-

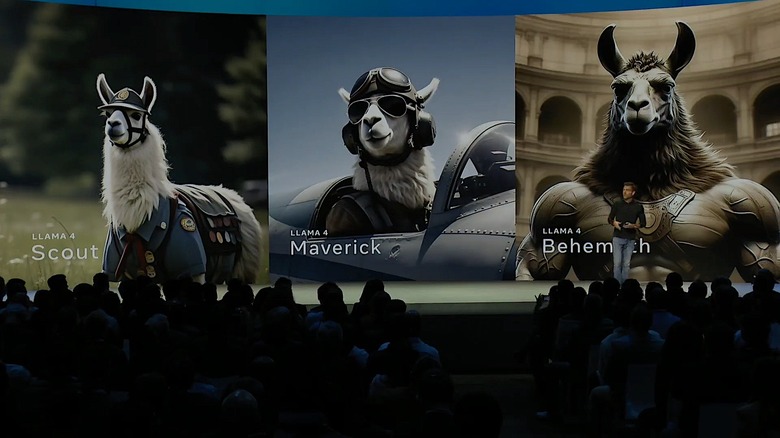

Meta is going heavy on the llama imagery, which I guess is to be expected. We just saw a hulked out llama to represent the "Behemoth" model, which has yet to be released. And turns out Cox is giving a nod to Meta's new standalone AI app that was announced earlier this morning.

-

Meta CPO Chris Cox.

-

Meta Chief Product Officer Chris Cox at LlamaCon

-

There's plenty of criticism about calling Llama an open source project, to be clear. Meta hasn't always made its models freely available like most open source projects.

-

With Cox kicking things off, we get an obligatory photo of the early Facebook days.

-

Meta's Chief Product Officer Chris Cox is on the stage in front of what appears to be a Meta AI-generated image of a llama, of course. He's opening by talking about the initial skepticism around Meta's AI plans a couple years ago and tying it back to Meta's roots (implying that Meta was somewhat of an underdog).

-

And it looks like we're finally underway.

-

We're a few minutes away from the keynote's kickoff, and it's interesting that there are only 867 people watching the YouTube stream. Most big tech events hit thousands of viewers before they start.

-

I have made it into the keynote room as we're about 10 minutes out. It's a smaller crowd than what we're used to seeing at events like Connect, but still pretty lively.

The LlamaCon stage.

-

And if you've been following along folks, this keynote was supposed to start at 1PM ET. The livestream countdown is the first time I saw that it was bumped by 15 minutes. That's not unusual for Meta keynotes, though. Zuck in particular always seems a bit late to these things.

-

That's a great question, Dev. I don't know if Zuck will say anything. Speaking of controversy, I was at my local bookstore this past weekend and the staff said they couldn't keep "Careless People," the new Facebook memoir from Sarah Wynn-Williams you recently wrote about, in stock. That was not something I expected. I thought everything Wynn-Williams wrote about in the book was old news.

-

The countdown has started. We've got about 25 minutes before the keynote gets underway.

-

I wonder if Zuck is going to say anything about the WSJ's report on its naughty AI chatbots, which reportedly engaged in sexual conversations with minors. This is the guy who said he's done apologizing for Facebook and Meta's misdeeds, so I wouldn't bet on it.

-

Fun fact: I just noticed the thumbnail Meta uploaded for its YouTube livestream has an image of the Quest 3. Last week, the company laid off more than 100 employees from its Reality Labs division. You may recall the company rebranded itself as Meta because the "Metaverse" was supposed to be its future.

-

As for me, I just sat down with a fresh glass of water. Today's keynote is scheduled to run for about 45 minutes, so if nothing else, expect a brisk pace. Later, there will be panel conversations, with Meta CEO Mark Zuckerberg involved in the first one right at 10:45AM PT.

-

Hello! I can confirm I've made it inside. I'm in a small press room while we wait for them to bring us into the keynote area. And yes, Cherlynn, they have some breakfast here for us!

I'm here!

-

Karissa reports that she's onsite and we'll hopefully have some pictures from the ground soon! I hope the snacks are... Good? Though I may get FOMO.

-

I'm still en route (Bay Area traffic is nothing if not reliable) but it's interesting timing from Meta with this announcement. Mark Zuckerberg also shared this morning that Meta AI now has "almost a billion" monthly users (I'd bet they were hoping they'd pass that milestone in time for LlamaCon). I'm told today will be very developer-focused, so we may not hear much about Meta AI, but I bet we'll hear more about Llama adoption.

-

It looks like Meta is getting an early start on today. It just announced a rebranding of the Meta View app. It's now called the Meta AI app. The software will still work with the company's Ray-Ban Meta smart glasses while offering new features. Essentially, it looks like Meta's take on OpenAI's own app. There's even a feature exactly like ChatGPT's Advanced Voice Mode.

-

I'd love to see Zuck come riding in on a llama for sure, Igor. This event is a bit weirdly timed, since Meta just announced its Llama 4 models. The "Scout" and "Maverick" models are already here and running in Meta's apps, including WhatsApp and Messenger, but we're still waiting to hear about "Behemoth." Zuckerberg claims it's "the highest performing base model in the world." We'll see! All of the AI companies are eager to top each other these days, so it's hard to know what to believe.

-

It's great to get another opportunity to live blog. I had a great time with Dev trying to make sense of all of NVIDIA's GTC announcements. Karissa, any predictions for today's event? More importantly, will we see a real 🦙?

-

Good morning! I'm en route to Meta HQ where the first-ever LlamaCon will be kicking off with a keynote from Chief Product Officer Chris Cox. I'm expecting lots of developer updates and, hopefully, a peek at what Meta has in store for the rest of this year.

This is the first time Meta has hosted a developer event solely dedicated to generative AI. It's also a year in which Mark Zuckerberg has said the company's AI investments could climb as high as $65 billion as the company competes for AI dominance. Today is Meta's big chance to speak directly to developers about its vision for AI.

-

On our liveblog today will be AI reporter Igor Bonifacic and senior reporter Karissa Bell, who's covering the event live from Meta's headquarters. You'll possibly see me and senior editor Devindra Hardawar chime in from time to time, as we help with this liveblog behind the scenes.

-

Hello everyone and welcome to Engadget's liveblog of Meta's LlamaCon developer conference. We know, it's an odd event to liveblog. This is the inaugural LlamaCon, and given it's the first, it's hard to predict what might happen today. We do know it's going to revolve around the company's Llama language and reasoning models, though, and that it's developer-centric.

Update, April 29 2025, 12:45PM ET: This story was updated to reflect the new 1:15PM ET keynote start time.

Update, April 29 2025, 6:00AM ET: This story was updated to include the details of Engadget's liveblog, and correct a few typos in timezones.