YouTube will remove videos disputing the 2020 presidential election

YouTube is clamping down now that states have certified results.

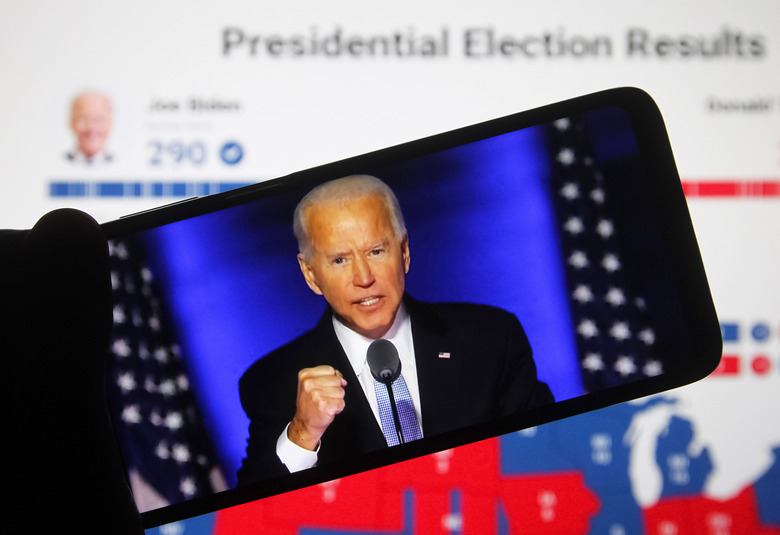

YouTube's determination to curb election misinformation is extending to disputes over the results. The Google-owned service has started removing content uploaded from December 9th onward if it alleges that "widespread fraud or errors" altered the outcome of the 2020 US presidential election. States have certified the results, YouTube said, and December 8th was the safe harbor deadline for the election — Joe Biden is the President-elect as far as the government is concerned, and the video site is acting accordingly.

The company is pairing the policy with an update to its election fact check panels, which now link to the Office of the Federal Register page confirming Biden's win. The panels will still link to the Cybersecurity and Infrastructure Security Agency's page debunking false election integrity claims.

YouTube told Engadget it would rely on its information panels to challenge claims in content posted before December 9th. In its blog post detailing the latest moves, YouTube said it already forbade content claiming widespread fraud in previous US presidential elections and allowed "controversial views" on vote counting while the tallies were still in progress.

The company further claimed that its anti-misinformation efforts during the election were effective. It has removed over 8,000 channels and "thousands" of videos for "harmful and misleading" claims since September, with more than 77 percent of those videos pulled before they had 100 views. Fact check panels have appeared over 200,000 times since the November 3rd election, YouTube added.

The policy won't please those insisting the election was fraudulent. President Trump in particular is known for retaliating against internet giants that fact check his claims, including those about election results. However, YouTube clearly feels it has a solid defense: it's pointing to official information it doesn't expect to change before the January 20th inauguration.