How I turned my Xbox's Kinect into a wondrous motion-capture device

When Microsoft started selling a basic Xbox One package without a Kinect V2 for $100 less, the result was unequivocal: Sales took off. Most gamers can take or leave the ubiquitous depth camera, because it just isn't as useful for gaming as, say, the Wii controller. It is indispensable for certain titles, like Just Dance 2014, Xbox Fitness and Fighter Within. Others, such as Madden NFL 25 and Battlefield 4, can make use of the Kinect 2, but absolutely don't need it. In other words, it's a big bag of meh for gamers and casual users. But recently, my ears perked up when Microsoft released a $50 cable that lets you use the Xbox One's Kinect on a PC.

Though you can buy Kinect for Windows V2 for $200, it would be a shame to do that if you already had an Xbox One Kinect. Luckily, Microsoft launched the Kinect Adapter for Windows, which connects the Xbox One's wonky Kinect V2 port to good ol' USB 3.0. From there, it works exactly the same as the Kinect for Windows V2 sensor and ends up costing less, if you set aside the necessary Xbox One purchase. The device is still mostly aimed at developers, and requires that you install the Kinect SDK 2.0 before you can use it, but out of the box, it does come with demos that tease its potential.

And potential is all it is right now. Futuristic Microsoft Research demos like IllumiRoom (now called RoomAlive) made techies and gamers drool, but as products, they're far in the future. Microsoft's free apps for the depth sensor, Kinect Studio and Kinect Evolution, are also just cool developer aids at the moment. Microsoft's only free app that really lets you do something on Windows is 3D Builder. It's mostly aimed at 3D printer users, but allows you to create printable objects (like yourself) by scanning them with a Kinect 2. It's actually pretty fun to make a virtual 3D model of your household objects (or cat), but the process is still pretty clunky, even on a really fast PC. My own results (below) were dismal, and a scan of my monitor looked like a nuclear accident, but I might do better with more practice.

What about third-party apps? The news is better there, provided you're into certain niches. Most of the apps target kids, educators and researchers. For instance, Fusion4D uses the Kinect to let you interact with virtual objects, much like Sony's PS4 Playroom demo. It's aimed at educators and works with biology, engineering and other models. Another example is YAKiT, a Kinect 2 app that puts your face onto people in photos. It was fun for a while, but it got tired fast.

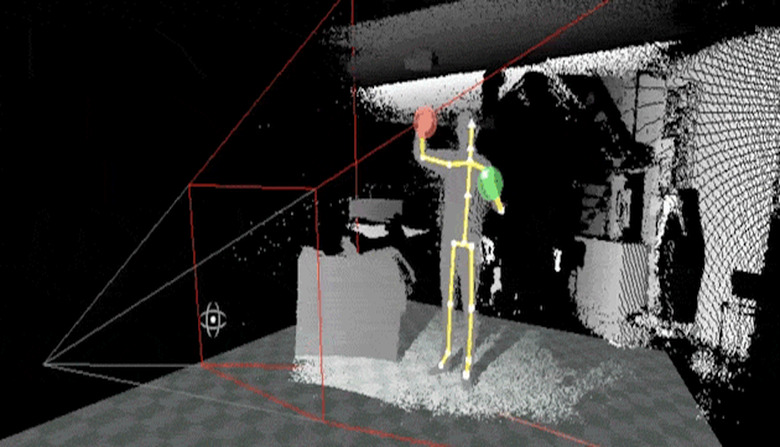

Where the Kinect does shine is in motion capture (mocap). I tested both facial and body mocap apps from a company called Brekel, and the results were impressive. (Another mocap dev that exploits the Kinect 2 is iPi Soft, which supports multiple sensors.) Brekel's apps, plus a Kinect 2, will never beat a full-blown mocap studio, of course. But if you stay within the sensor's limitations and keep square to it, you can capture full-body performances for games or animated shows. After a bit of cleanup, the results were very usable. And the facial-capture app was even better. Up until recently, software that could capture facial expressions and lip sync was very expensive, but Brekel's solution seemed to work very well, at least in limited testing.

Modeling was more of a mixed bag. I tried another program called Body Snap, which is supposed to generate a scan of your body and face using Kinect 2 sensor info. You can then export the model to software like Mixamo, Autodesk's Mudbox or 3ds Max to fine-tune and accessorize it with hairstyles, clothing and shoes. Unfortunately, the model didn't much resemble me; I've had better luck in the past using products like FaceGen along with DAZ 3D to create avatars. But Body Snap is still in the beta stages, and is loaded with potential if its creator can improve it.

The Kinect V2 still hasn't lived up to its potential, either on the Xbox One or Windows, but I hope Microsoft Research won't stop trying. If it can figure out how to let players interact with games better sans controllers, it may save us from sitting passively on the couch. And I'd love to see some IllumiRoom-style tech come to be, if Microsoft can figure out how to do it without using expensive projectors. Right now, we're starting to see the Kinect's vast potential for scanning, VR and especially animation. Meanwhile, it's ironic that the device might be better at helping make games through mocap than helping folks play them.