AI film editor can cut scenes in seconds to suit your style

It can speed up the pace, intensify emotion or just go plain weird.

AI has won at Go and done a few other cool things, but so far it's been mighty unimpressive at harder tasks like customer service, Twitter engagement and script writing. However, a new algorithm from researchers at Stanford and Adobe has shown it's pretty damn good at video dialogue editing, something that requires artistry, skill and considerable time. The bot not only removes the drudgery, but can also edit clips in multiple film styles to suit the project.

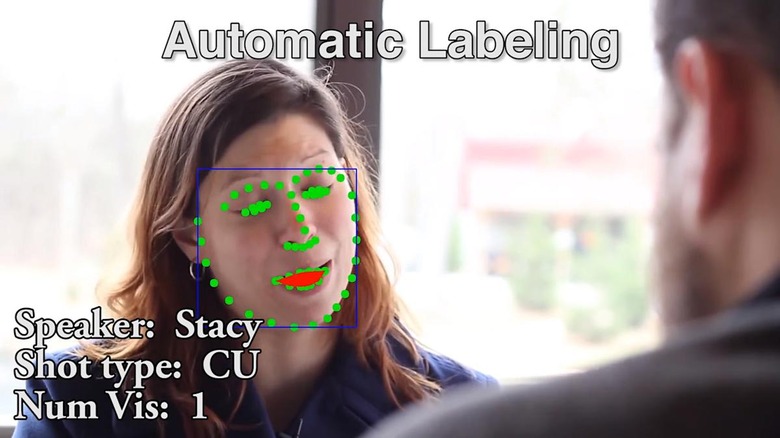

First of all, the system can organize "takes" and match them to lines of dialogue from the script. It can also do voice, face and emotion recognition to encode the type of shot, intensity of the actor's feelings, camera framing and other things. Since directors can shoot up to 10 takes per scene (or way more, in the case of auteurs like Stanley Kubrick), that alone can save hours.
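
To make the take-matching step concrete, here is a minimal Python sketch. It is not the researchers' method, which isn't detailed here; it assumes each take already has a rough speech transcript and simply fuzzy-matches it against the script using the standard library's `difflib`. The `normalize` and `match_line` helpers and the sample script lines are hypothetical.

```python
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase, drop punctuation and split into words."""
    kept = "".join(ch for ch in text.lower() if ch.isalnum() or ch.isspace())
    return kept.split()


def match_line(transcript, script_lines):
    """Return (index, score) of the script line most similar to a transcript.

    Similarity is difflib's word-level ratio, from 0.0 to 1.0.
    """
    words = normalize(transcript)
    scores = [
        SequenceMatcher(None, words, normalize(line)).ratio()
        for line in script_lines
    ]
    best = max(range(len(script_lines)), key=scores.__getitem__)
    return best, scores[best]


# Hypothetical script and a slightly garbled transcript of one take.
script = [
    "I'm not going to the party.",
    "Why not? Everyone will be there.",
    "Because Stacey is going.",
]
index, score = match_line("because stacy is going", script)
print(index, score)
```

Even with "Stacey" mistranscribed, the take still lands on the third script line, which is the kind of tolerance a real system would need when sorting many takes of the same scene.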

However, the real power of the system is doing "idiom" editing based on the rules of film language. For instance, many scenes start with a wide "establishing" shot so that the viewer knows where they are. You can also use leisurely or fast pacing, emphasize a certain character, intensify emotions or keep shot types (like wide or closeup) consistent. Such idioms are generally used to best tell the story in the way the director intended.

All the editor has to do is drop their preferred idioms into the system, and it will cut the scene to match automatically, following the script. In an example shown (below), the team selected "start wide" to establish the scene, "avoid jump cuts" for a cinematic (non-YouTube) style, "emphasize character" (in this case, "Stacey") and a faster-paced performance.
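
One way to picture idiom-driven cutting is as a cost-minimization problem: each idiom penalizes clip choices it dislikes, and the cut is the sequence of clips, one per script line, with the lowest total penalty. The Python sketch below is an illustration under that assumption, not the Stanford and Adobe implementation. The `Clip` fields, the penalty values and the crude jump-cut test (same shot type, different take) are all made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Clip:
    take: int        # which take the clip was pulled from
    shot: str        # "wide", "medium" or "closeup"
    visible: str     # character most prominent in frame
    duration: float  # seconds


# Each idiom scores a transition from the previous clip (None at the start).
def start_wide(prev, clip):
    return 10.0 if prev is None and clip.shot != "wide" else 0.0


def avoid_jump_cuts(prev, clip):
    # Crude stand-in: same framing across two different takes.
    is_jump = prev is not None and prev.take != clip.take and prev.shot == clip.shot
    return 5.0 if is_jump else 0.0


def emphasize(character):
    return lambda prev, clip: 0.0 if clip.visible == character else 2.0


def fast_pace(prev, clip):
    return clip.duration  # shorter deliveries cost less


def cut_scene(candidates, idioms):
    """Pick one clip per script line, minimizing total idiom cost.

    Dynamic programming: for every candidate of the current line, keep
    only the cheapest path that ends on it.
    """
    def cost(prev, clip):
        return sum(idiom(prev, clip) for idiom in idioms)

    best = [(cost(None, c), [c]) for c in candidates[0]]
    for options in candidates[1:]:
        best = [
            min(((t + cost(p[-1], c), p + [c]) for t, p in best), key=lambda x: x[0])
            for c in options
        ]
    return min(best, key=lambda x: x[0])[1]


# Two candidate clips for each of three script lines (invented data).
candidates = [
    [Clip(1, "wide", "Stacey", 4.0), Clip(2, "closeup", "Stacey", 3.0)],
    [Clip(1, "closeup", "Stacey", 3.5), Clip(2, "closeup", "Ryan", 2.0)],
    [Clip(1, "closeup", "Stacey", 3.0), Clip(2, "medium", "Stacey", 2.5)],
]
idioms = [start_wide, avoid_jump_cuts, emphasize("Stacey"), fast_pace]
cut = cut_scene(candidates, idioms)
print([(c.take, c.shot) for c in cut])
```

With these made-up numbers the cut opens on the wide shot, stays on Stacey throughout and ends on the quicker medium shot from take 2. Swapping or reweighting the functions in `idioms` yields a different cut from the same footage.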

The system instantly created a cut that was pretty darn watchable, closely hewing to the comedic style that the script was going for. The team then shuffled the idioms, and it generated a "YouTube" style that emphasized hyperactive pacing and jump cuts.

What's best (or worst, perhaps, for professional editors) is that the algorithm was able to assemble the 71-second cut within two to three seconds and switch to a completely different style instantly. Meanwhile, it took an editor three hours to cut the same sequence by hand, counting the time it took to watch each take.

The system only works for dialogue, and not action or other types of sequences. It also has no way to judge the quality of the performance, or the naturalism and emotional beats in a take. Editors, producers and directors still have to examine all the video that was shot, so AI is not going to take those jobs away anytime soon. However, it looks like it's about ready to replace the assistant editors who organize all the materials, or at least do a good chunk of their work.

More importantly, it could remove a lot of the slogging normally required to edit, and let an editor see some quick cuts based on different styles. That would leave more time for fine-tuning, where their skill and artistic talent are most crucial.