Microsoft's AI cone recognizes faces and voices during meetings

The sensor will automatically transcribe and translate meeting notes.

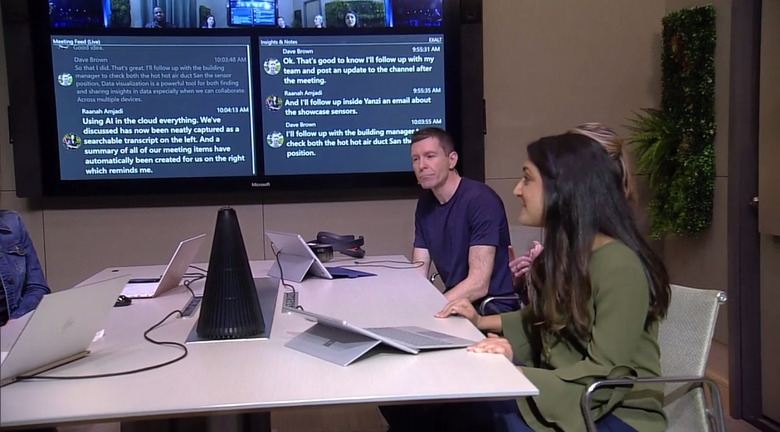

Microsoft has a new tool for making meetings easier. It recognizes speech and automatically transcribes it for remote participants (it's capable of "multiple" simultaneous translations), and it visually recognizes meeting participants as they walk into the room. And because the black, conical speaker is always listening, meeting notes are transcribed automatically.

More than that, the device fully syncs with Cortana for everything from finding a specific meeting room — during a stage demo a room with a Surface Hub was requested — to finding times when everyone invited has a clear schedule.

Sure, the demo was staged and awkward in the way these sorts of things usually are, but it was promising, especially for meetings involving people spread across different locations. What was impressive is that people who were hearing impaired or speaking entirely different languages were able to keep up with everyone else thanks to the "invisible" tech at play. Of course, how this will work in the real world is another matter entirely, but from the stage at least, the demo took a lot of the frustrations out of teleconferencing.
