Apple is delaying its child safety features

Privacy advocates criticized the CSAM detection tools, which were supposed to arrive alongside the iOS 15 update.

Apple says it's delaying the rollout of Child Sexual Abuse Material (CSAM) detection tools "to make improvements" following pushback from critics. The features include one that analyzes iCloud Photos for known CSAM, which has caused concern among privacy advocates.

"Last month we announced plans for features intended to help protect children from predators who use communication tools to recruit and exploit them, and limit the spread of Child Sexual Abuse Material," Apple told 9to5Mac in a statement. "Based on feedback from customers, advocacy groups, researchers and others, we have decided to take additional time over the coming months to collect input and make improvements before releasing these critically important child safety features."

Apple planned to roll out the CSAM detection systems as part of upcoming OS updates, namely iOS 15, iPadOS 15, watchOS 8 and macOS Monterey. The company is expected to release those in the coming weeks. Apple didn't go into detail about the improvements it might make. Engadget has contacted the company for comment.

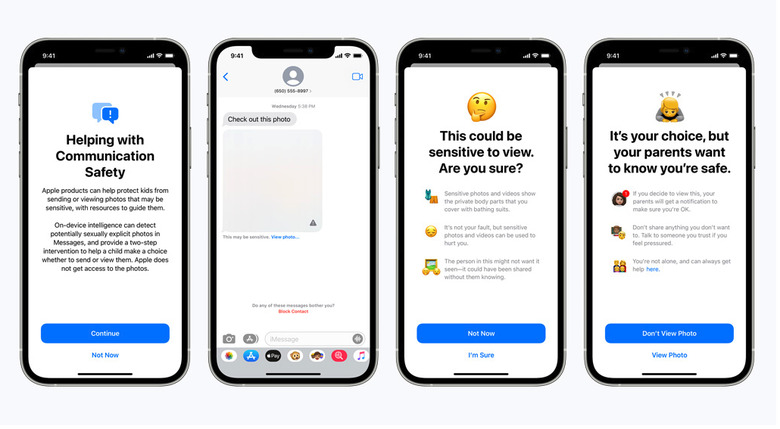

The planned features included one for Messages, which would use on-device machine learning to detect when sexually explicit photos are being shared in the app and notify children and their parents. Such images sent to children would be blurred and accompanied by warnings. Siri and the built-in search functions on iOS and macOS would point users to appropriate resources when someone asks how to report CSAM or tries to carry out CSAM-related searches.

The iCloud Photos tool is perhaps the most controversial of the CSAM detection features Apple announced. Apple planned to use an on-device system to match photos against a database of known CSAM image hashes (a kind of digital fingerprint for such images) maintained by the National Center for Missing and Exploited Children (NCMEC) and other organizations. This analysis was supposed to take place before an image is uploaded to iCloud Photos. If the system detected CSAM and human reviewers manually confirmed a match, Apple would disable the person's account and send a report to NCMEC.
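The basic idea of matching photos against a list of known fingerprints can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Apple's implementation: it uses SHA-256 from the standard library as a stand-in fingerprint, which only matches byte-identical files, whereas Apple's system relied on a perceptual hash meant to survive resizing and recompression. The function names and the `threshold` parameter (reflecting Apple's statement that it only learns about users who have a "collection" of known CSAM) are hypothetical.

```python
import hashlib


def fingerprint(image_bytes: bytes) -> str:
    # Hypothetical stand-in fingerprint: SHA-256 only matches exact byte-for-byte
    # copies; Apple's system used a perceptual hash instead.
    return hashlib.sha256(image_bytes).hexdigest()


def count_matches(photos: list[bytes], known_hashes: set[str]) -> int:
    # Compare each photo's fingerprint against the database of known hashes
    # (done on-device, before upload, in Apple's described design).
    return sum(1 for photo in photos if fingerprint(photo) in known_hashes)


def needs_human_review(photos: list[bytes], known_hashes: set[str], threshold: int) -> bool:
    # Hypothetical threshold: only a collection of matches, not a single one,
    # would be escalated to human reviewers.
    return count_matches(photos, known_hashes) >= threshold


known = {fingerprint(b"flagged-example")}
library = [b"holiday-photo", b"flagged-example"]
print(count_matches(library, known))  # 1
```

Note that nothing in the matching logic depends on what the hash database contains, which is the property critics quoted below point to.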

Apple claimed the approach would provide "privacy benefits over existing techniques since Apple only learns about users' photos if they have a collection of known CSAM in their iCloud Photos account." However, privacy advocates have been up in arms about the planned move.

Some critics suggest that CSAM photo scanning could lead law enforcement or governments to push Apple to look for other types of images, for instance to clamp down on dissidents. Two Princeton University researchers who say they built a similar system called the tech "dangerous." They wrote: "Our system could be easily repurposed for surveillance and censorship. The design wasn't restricted to a specific category of content; a service could simply swap in any content-matching database, and the person using that service would be none the wiser."

Critics also called out Apple for apparently going against its long-held stance of upholding user privacy. The company famously refused to unlock the iPhone used by one of the San Bernardino shooters, kicking off a legal battle with the FBI in 2016.

Apple said in mid-August that poor communication led to confusion about the features, which it had announced just over a week earlier. Craig Federighi, the company's senior vice president of software engineering, noted that the image scanning system has "multiple levels of auditability." Even so, Apple is rethinking its approach. It hasn't announced a new timeline for rolling out the features.