SwiftKey's latest keyboard is powered by a neural network

A new SwiftKey keyboard hopes to serve you better typing suggestions by using a miniaturized neural network. SwiftKey Neural does away with the company's tried-and-tested prediction engine in favor of a method that mimics the way the brain processes information. It's a model that's typically deployed on a grand scale for things like spam and phishing prevention in Gmail or image recognition, but very recent advancements have seen neural networks creep into phones through Google Translate, which uses one for offline text recognition. According to SwiftKey, this is the first time a neural network has been used in a phone keyboard.

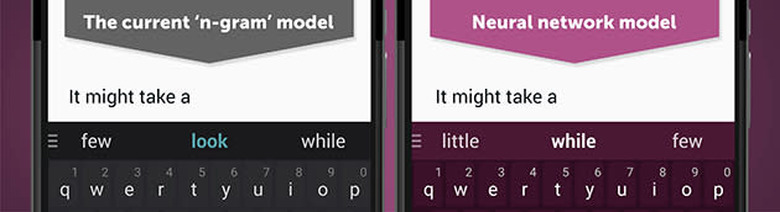

To grasp how the new system works, we need to understand the old one. SwiftKey currently uses a probability-based language algorithm based on the n-gram model for predictions. There are some additional layers of learning on top of it, which is part of what makes SwiftKey so popular, but the basic implementation reads the last two words in a sentence, looks through a large database, and spits out what it deduces is the most likely word to follow. The two-word limit is a practical constraint of the n-gram approach, and it seriously hampers predictions. (Reading back three or four words would be very hard to implement with n-grams, as it would require a far larger database, which would in turn be harder for the app to search.)
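The lookup described above can be sketched in a few lines of Python. This is a minimal illustration, not SwiftKey's implementation: the function names `train_trigrams` and `suggest` and the toy corpus are assumptions made up for the example, and the real engine adds further layers of learning on top.

```python
from collections import Counter, defaultdict

def train_trigrams(sentences):
    """Count which word follows each pair of consecutive words."""
    table = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for first, second, following in zip(words, words[1:], words[2:]):
            table[(first, second)][following] += 1
    return table

def suggest(table, text, k=3):
    """Return up to k of the most frequent followers of the last two words."""
    words = text.lower().split()
    if len(words) < 2:
        return []
    context = tuple(words[-2:])
    followers = table.get(context, Counter())
    return [word for word, _ in followers.most_common(k)]

# Hypothetical toy corpus; a real keyboard would ship counts from far more text.
corpus = (
    ["busy at the moment"] * 4
    + ["see you at the end"] * 3
    + ["both at the same time"] * 2
    + ["meet you at the airport"]
)
table = train_trigrams(corpus)
suggestions = suggest(table, "Meet you at the")
print(suggestions)
```

Only the pair "at the" is used as the lookup key, so the word "meet" earlier in the sentence has no influence on the result. The table size is also why longer contexts are costly: with a vocabulary of V words there are up to V² possible two-word contexts, but up to V³ three-word ones.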

The neural model approaches predictions from a different angle. SwiftKey trained the network with millions of sample sentences, and now each word is represented by a piece of code, in effect a list of numbers. This allows the app to better understand sentences in a number of ways. Words that can be used in the same way share similar code. As you'd expect, "meet" is marked as similar to closely related words like "met" and "connect." Less obvious is the link with "speak" or "chat," which mean something completely different but linguistically will slide into many of the same places. The same goes for days of the week, months, or any other word or concept, really: one word can share similar code with thousands of others.
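One common way to compare such word codes is cosine similarity between vectors. The sketch below assumes that representation; the three-number vectors are invented by hand for illustration and are not taken from SwiftKey's model, which would learn far longer vectors from its training sentences.

```python
import math

def cosine(a, b):
    """Cosine similarity: 1.0 means the two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hand-made toy vectors (an assumption for this example, not learned values).
vectors = {
    "meet":   [0.9, 0.8, 0.1],
    "chat":   [0.8, 0.9, 0.2],
    "speak":  [0.7, 0.9, 0.1],
    "monday": [0.1, 0.2, 0.9],
    "friday": [0.2, 0.1, 0.9],
}

def nearest(word, k=2):
    """Return the k other words whose vectors are most similar to `word`."""
    others = [w for w in vectors if w != word]
    return sorted(
        others,
        key=lambda w: cosine(vectors[word], vectors[w]),
        reverse=True,
    )[:k]

print(nearest("meet"))       # the conversational verbs rank first
print(nearest("monday", 1))  # the other weekday ranks first
```

In this toy setup, "meet" lands next to "chat" and "speak" while the weekdays cluster with each other, which mirrors the grouping described above.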

Because the model looks at entire sentences, it's able to sequence words together as code to find more accurate suggestions. Going back to the "meet" example, let's take a look at the sentence fragment "Meet you at the." Using n-gram, SwiftKey typically looked at "at the," and served you three suggestions: "moment," "end" and "same." Using the neural model, it looks at the sentence as a whole and serves you "airport," "hotel" and "office."
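A rough way to see why the wider context matters is to average the vectors of the context words and rank candidate words by their dot product with that average. This is a deliberately crude stand-in, not the network SwiftKey describes (which the article does not detail): the two-number vectors, the `rank` function and the `window` parameter are all assumptions invented for this sketch.

```python
# Toy 2-D vectors (hypothetical): the first number loosely means "place you
# might go", the second "time or abstract notion".
context_vectors = {
    "meet": [1.0, 0.0],
    "you":  [0.3, 0.1],
    "at":   [0.0, 0.2],
    "the":  [0.0, 0.2],
}
candidate_vectors = {
    "airport": [1.0, 0.0],
    "hotel":   [0.9, 0.1],
    "office":  [0.8, 0.2],
    "moment":  [0.0, 1.0],
    "end":     [0.1, 0.9],
    "same":    [0.1, 0.8],
}

def rank(text, window=None, k=3):
    """Rank candidates against the mean vector of the last `window` words.

    window=None uses every word typed so far; window=2 imitates a model
    that can only see the last two words.
    """
    words = text.lower().split()
    if window is not None:
        words = words[-window:]
    known = [context_vectors[w] for w in words if w in context_vectors]
    if not known:
        return []
    mean = [sum(axis) / len(known) for axis in zip(*known)]

    def score(word):
        return sum(m * c for m, c in zip(mean, candidate_vectors[word]))

    return sorted(candidate_vectors, key=score, reverse=True)[:k]

print(rank("Meet you at the", window=2))  # only "at the" is visible
print(rank("Meet you at the"))            # the whole fragment is visible
```

With only the last two words visible, the time-like words win; once "meet" is part of the context, the place words move to the top. A real neural model would also account for word order, which simple averaging ignores.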

For now, SwiftKey Neural is Android-only and still in alpha. It's part of the company's Greenhouse program, which it uses as a launchpad for new ideas that may find their way into the regular app. It's well worth checking out, but there are, as you'd expect, a few caveats to being on the cutting edge of keyboard technology.

One of the things that draws users to SwiftKey is its ability to learn your typing style. The regular app can (if you allow it to) scan your emails and social networks for clues, and then monitor your usage of the keyboard itself to improve suggestions. It does this by editing or adding to the language database that the n-gram model uses. Because Neural taps into a different type of database, this personalization isn't available in the alpha. That doesn't mean it won't ever be there — neural networks are a type of machine learning, after all — but for now, it's not on the to-do list.

"The sooner you can get an idea out of the lab and into the public, the quicker you get feedback and the more useful it becomes," Joe Braidwood, Chief Marketing Officer at SwiftKey, told Engadget, explaining the reasoning for releasing Neural as a separate app. There's also the question of resources. Neural is a relatively small 25MB download, but it requires more power than the current SwiftKey, using your phone's GPU to run the math. Braidwood says it runs with "no perceivable lag" on even modestly-specced smartphones, but there's likely more optimization to be done before this is ready to replace the regular app.

Caveats aside, SwiftKey's achievement here is impressive. As mentioned, neural networks are more typically found in giant server farms than on your smartphone, but with two apps released in just a few months, small-scale, focused applications of the tech now seem feasible. "We're pretty sure that the future of mobile typing is going to use neural networks," Braidwood explained. "Language is such a human thing that if you can build things that think more like humans than computers you're inevitably going to make a more useful keyboard."