Google and Elon Musk open their AI platforms to researchers

DeepMind Lab and OpenAI's Universe give scientists a way to test their AI "agents."

Artificial intelligence got a big push today as both Google and OpenAI announced plans to open-source their deep learning code. Elon Musk's OpenAI released Universe, a software platform that "lets us train a single [AI] agent on any task a human can complete with a computer." At the same time, Google parent Alphabet is putting its entire DeepMind Lab training environment codebase on GitHub, helping anyone train their own AI systems.

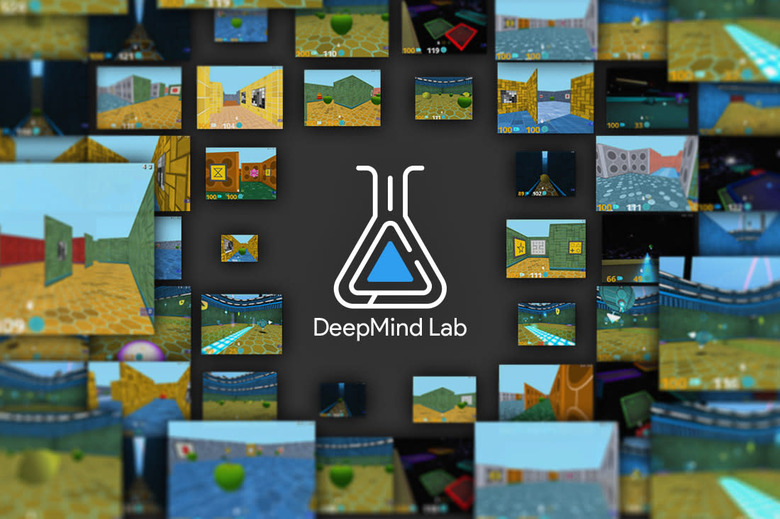

DeepMind first burrowed into the public consciousness by defeating a world champion at the notoriously difficult game Go. However, to advance deep learning further, Alphabet says that such AI "agents" require highly detailed environments to serve as laboratories for AI research. The company is now open-sourcing that environment, called DeepMind Lab, to any programmers who want to use it.

"DeepMind Lab is a fully 3D game-like platform tailored for agent-based AI research," Alphabet said in a blog. The agent floats around the environment, levitating and moving via thrusters, with a virtual camera that can track around its "body." Google describes some of the chores it can do:

Example tasks include collecting fruit, navigating in mazes, traversing dangerous passages while avoiding falling off cliffs, bouncing through space using launch pads to move between platforms, playing laser tag, and quickly learning and remembering random procedurally generated environments.

The first-person 3D environment, Alphabet explains, should make for smarter AI. "If you or I had grown up in a world that looked like Space Invaders or Pac-Man, it doesn't seem likely we would have achieved much general intelligence."

An example of the kind of decision-making involved is shown in the video below, where an agent forgoes tasty apples in favor of a bitter lemon in order to get the ultimate reward (melon). (As a more specific example, Alphabet recently announced a partnership with Activision Blizzard, letting AI researchers attempt to build an AI agent that can master StarCraft II.)

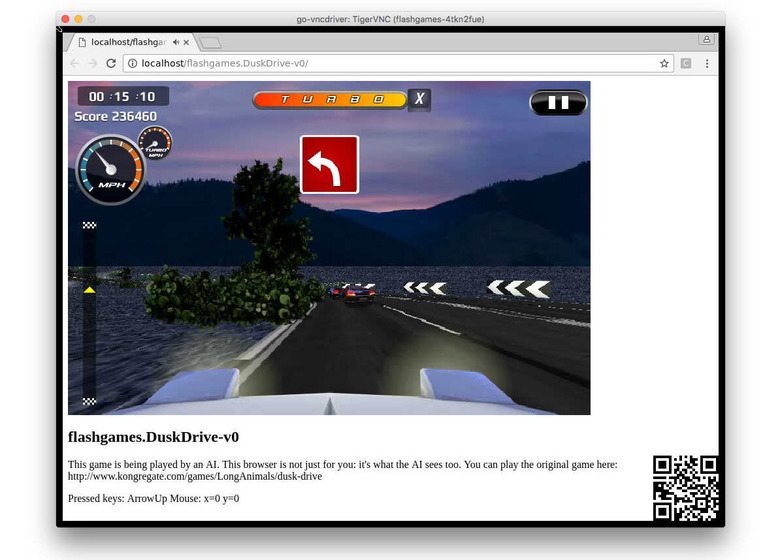

It's probably no coincidence that OpenAI, spearheaded by Elon Musk, is releasing a very similar platform called Universe at the same time. Like DeepMind Lab, the idea is to give researchers a way to test and train their agents. OpenAI's aim is pretty ambitious: the release consists of "a thousand environments including Flash games, browser tasks and games like slither.io and GTA V." In its blog post, the group spells out its goal:

Our goal is to develop a single AI agent that can flexibly apply its past experience on Universe environments to quickly master unfamiliar, difficult environments, which would be a major step towards general intelligence.

OpenAI says that deep learning systems are too specialized: "[DeepMind's] AlphaGo can easily defeat you at Go, but you can't explain the rules of a different board game to it and expect it to play with you." As such, it's using Universe to allow AI to run a lot of different types of tasks, "so they can develop world knowledge and problem solving strategies that can be efficiently reused in a new task."

A single Python script can drive 20 different 1,024 x 768 environments at 60 fps simultaneously. In the first Universe release, those environments include Atari 2600 games, Flash games and browser tasks like searching for a flight. Since the agent uses a virtual mouse, keyboard and screen like a human, it can do anything we can on a computer, and the plan is to eventually run agents through more complex games and tasks.
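To make the "single Python script" idea concrete, here is a minimal sketch of what driving many environments from one loop could look like. It does not use the Universe library: `ToyEnvBatch`, `policy`, `run` and `raw_bytes_per_second` are hypothetical names made up for illustration, the reward rule is a toy, and the bandwidth figure assumes uncompressed 24-bit RGB frames, which the announcement does not state. Only the 20-environment, 1,024 x 768 and 60 fps figures come from the text above.

```python
NUM_ENVS = 20               # figure quoted in the article
WIDTH, HEIGHT = 1024, 768   # figure quoted in the article
FPS = 60                    # figure quoted in the article


class ToyEnvBatch:
    """Hypothetical stand-in for a batch of environments (not the Universe API)."""

    def __init__(self, n):
        self.n = n
        self.frames = 0

    def reset(self):
        # One observation per environment; a real one would carry screen pixels.
        self.frames = 0
        return [{"frame": 0, "size": (WIDTH, HEIGHT)} for _ in range(self.n)]

    def step(self, actions):
        # Expect exactly one list of input events per environment.
        if len(actions) != self.n:
            raise ValueError("need one action list per environment")
        self.frames += 1
        observations = [
            {"frame": self.frames, "size": (WIDTH, HEIGHT)} for _ in range(self.n)
        ]
        # Toy reward: 1.0 when the up-arrow key is pressed this frame, else 0.0.
        rewards = [
            1.0 if ("key", "ArrowUp", True) in events else 0.0 for events in actions
        ]
        return observations, rewards


def policy(observation):
    # Trivial agent: always press the up-arrow key, whatever is on screen.
    return [("key", "ArrowUp", True)]


def run(seconds=1):
    # Step every environment once per frame from a single loop.
    envs = ToyEnvBatch(NUM_ENVS)
    observations = envs.reset()
    totals = [0.0] * NUM_ENVS
    for _ in range(seconds * FPS):
        actions = [policy(ob) for ob in observations]
        observations, rewards = envs.step(actions)
        totals = [t + r for t, r in zip(totals, rewards)]
    return totals


def raw_bytes_per_second(bytes_per_pixel=3):
    # Assumption: uncompressed 24-bit RGB frames (not stated in the article).
    return NUM_ENVS * WIDTH * HEIGHT * bytes_per_pixel * FPS


if __name__ == "__main__":
    print(run(seconds=1))
    print(raw_bytes_per_second())
```

Under that RGB assumption, 20 screens at 60 fps would amount to roughly 2.8 GB of raw pixels per second, which gives a sense of why the scale of the claim is notable.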

OpenAI has already received permission from EA, Microsoft Studios, Valve and other companies to freely access games like Wing Commander III, Portal and RimWorld for learning. It's reaching out to other companies, researchers and users, seeking "permission on your games, training agents across Universe tasks, (soon) integrating new games, or (soon) playing the games."

The DeepMind Lab repository will go live on GitHub later this week (look for the link here) and OpenAI's Universe is now available. Both companies say they want to keep their AI systems open, but reading between the lines, they have selfish reasons for doing so. AI has now progressed to the point that a lot more learning data is needed, so normally insular tech companies are now forced to collaborate with the outside world.