Instagram will notify you before it disables your account

But its new policy could allow it to remove more accounts.

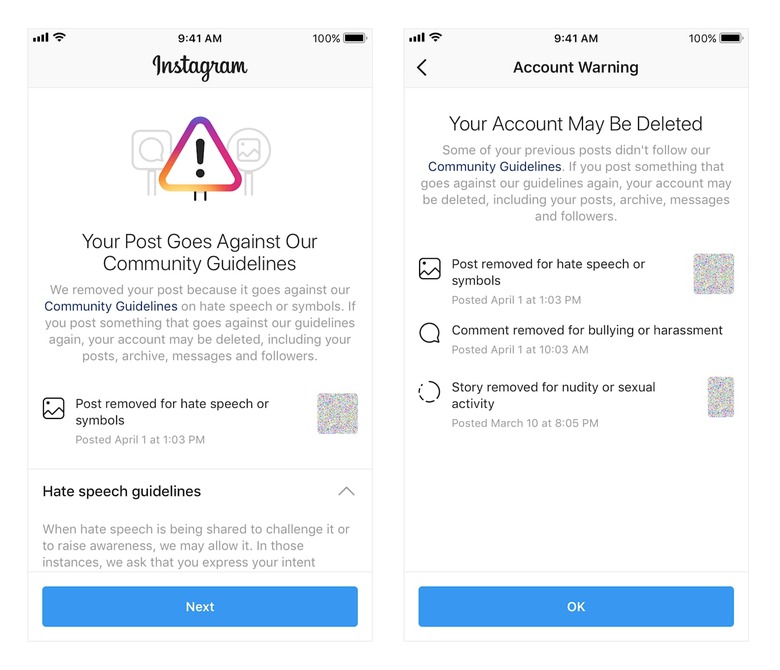

Instagram is making a few changes to the way it disables accounts. Currently, the platform removes accounts with a certain percentage of violating content. But it's rolling out a new policy that will also allow it to disable accounts with a certain number of violations in a given timeframe.

While that could mean more accounts get disabled, Instagram will notify users if they're at risk of being cut off. Users will be able to appeal the deletion of content removed under its policies on nudity and pornography, bullying and harassment, hate speech, drug sales and counter-terrorism. And while users have long been able to appeal disabled accounts through the Help Center, they'll soon be able to make their appeals directly in the app.

According to Instagram, the measures are all about keeping the platform a safe and supportive place, and the policy changes are in line with its ongoing efforts to fight bullying. It recently gave users the ability to restrict problematic followers, and it has added features that filter out bullying comments and use machine learning to spot harassment in photos. Regardless of the platform, keeping social media civil appears to be an uphill battle.