The concept restaurant of the future: iBeacons, motion detection and smartglass service (video)

An invitation to see a "future restaurant" covered in highfalutin tech concepts was shaping up to be a highlight of our week. According to Recruit Advanced Technology Lab's teaser, it was going to encompass smartglasses, augmented reality, gesture interfaces, customer face identification, avatars, seamless wireless payments and more, all hosted at Eggcellent, a Tokyo restaurant that... specializes in egg cuisine.

The demonstrations might not have reached the polished levels of the dreamy intro video, but the concept restaurant at least attempted to keep all of its demos grounded in reality. There were iBeacons using Bluetooth for food orders and payments, iPads that interacted with a conveyor-belt order projection, Wii Remotes that transform normal TVs into interactive ones and a Kinect sensor to upgrade Japan's maid café waitresses into goddesses. Well, at least that's one idea.

The first demo involved Apple's iBeacon tech, increasing the precision of location detection from store-to-store level to individual tables. Each table was kitted out with a Bluetooth-enabled beacon, disguised in (egg-themed) porcelain, and bringing an iDevice in close boots up the menu app. From there, you can peruse the menu, see what friends have liked in the past (in this concept, the app would also be personalized from your social networks and phone book) and place your order. The app, through iBeacon, would also allow payments as soon as you're finished: just tap the bill and slide to pay.
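For the curious, here's a rough sketch of how an app might decide which table you've sat down at. It is not Recruit's code: it assumes each beacon carries a table number (the `table` field is hypothetical), that signal strength falls off according to the standard log-distance path-loss model, and that the calibration values (`tx_power`, `path_loss_exponent`, `max_distance_m`) are illustrative guesses.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class BeaconReading:
    table: int                # hypothetical: table number advertised by the beacon
    rssi: float               # received signal strength in dBm
    tx_power: float = -59.0   # assumed calibrated RSSI at 1 m, in dBm


def estimate_distance_m(reading: BeaconReading,
                        path_loss_exponent: float = 2.0) -> float:
    """Log-distance path-loss estimate: d = 10 ** ((tx_power - rssi) / (10 * n))."""
    return 10 ** ((reading.tx_power - reading.rssi) / (10 * path_loss_exponent))


def nearest_table(readings: Iterable[BeaconReading],
                  max_distance_m: float = 1.0) -> Optional[int]:
    """Return the table whose beacon seems closest, or None if none is near enough."""
    readings = list(readings)
    if not readings:
        return None
    best = min(readings, key=estimate_distance_m)
    return best.table if estimate_distance_m(best) <= max_distance_m else None


if __name__ == "__main__":
    seen = [BeaconReading(1, -75), BeaconReading(2, -55), BeaconReading(3, -90)]
    print(nearest_table(seen))
```

Real-world signal strength is noisy, so an actual app would probably smooth several readings before opening the menu.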

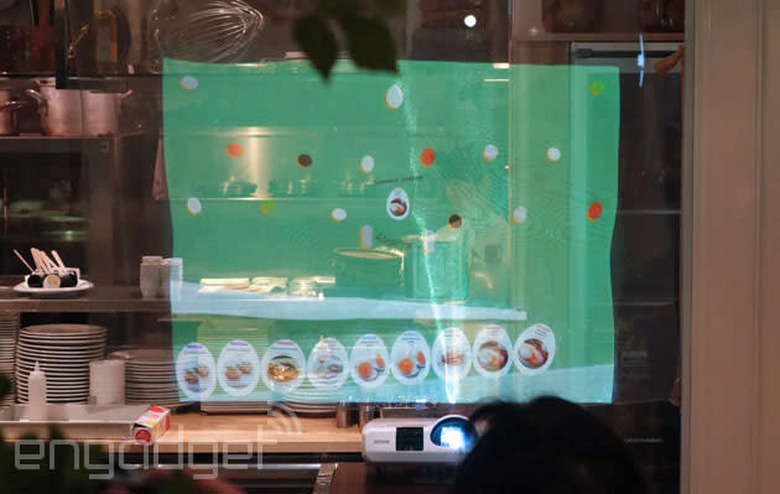

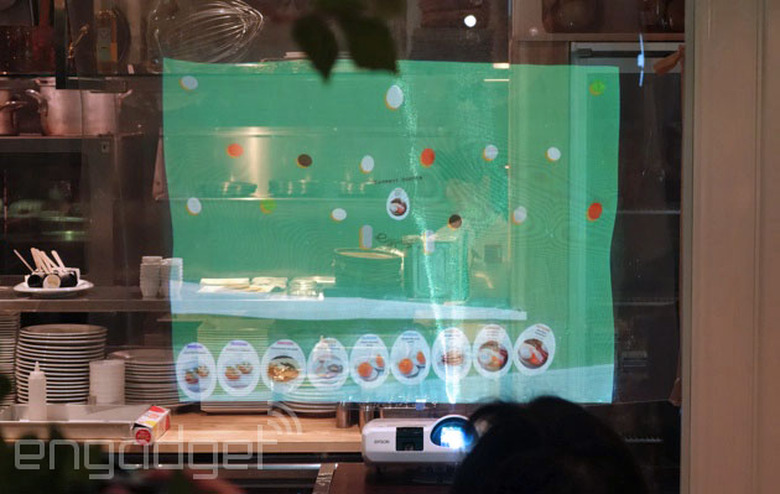

As ordering is digital, the restaurant can keep a running total of dishes ordered and project this on a nearby wall. A pachinko-esque screen (also beamed from a projector) keeps tabs on what's been ordered and whose dish is coming next. But there could be a problem: If everyone's ordering eggs Benedict (why wouldn't you be ordering eggs Benedict?), how can you tell whose is whose? Well, that's where the next demo comes in, with several iPads on hand for customizing your order. Once you've chosen what you like, you add stamps, a signature or a message, and then flick it off-screen and up to the projector. It then digitally shuffles down, and onto a conveyor belt behind the orders already taken.
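The bookkeeping behind that wall is simple enough to sketch. The snippet below is only an illustration under our own assumptions: the `OrderBoard` class and its method names are invented here, and it models the belt as a plain first-in, first-out queue with a per-dish tally.

```python
from collections import Counter, deque


class OrderBoard:
    """Hypothetical model of the projected wall: dish totals plus a FIFO belt."""

    def __init__(self):
        self.totals = Counter()   # running count of each dish ordered
        self.belt = deque()       # orders waiting, oldest first

    def place(self, table, dish, note=""):
        """Add an order to the back of the belt, with its personal stamp or message."""
        self.totals[dish] += 1
        self.belt.append((table, dish, note))

    def serve_next(self):
        """Pop the oldest order, or return None if the belt is empty."""
        return self.belt.popleft() if self.belt else None


if __name__ == "__main__":
    board = OrderBoard()
    board.place(3, "eggs Benedict", "for Mat")
    board.place(5, "eggs Benedict", "extra sauce")
    print(board.totals["eggs Benedict"], board.serve_next())
```

The note attached to each order is what lets two identical plates of eggs Benedict be told apart.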

Fun, but well, a little too safe, a little boring. No fear, here's the smartglasses part. Recruit ATL decided to go with a pair of Vuzix frames, and a system that'll be familiar to anyone who's used an augmented reality app through their smartphone camera. Once the glasses pick up the chef symbol, the food menu appears on the wearable, while hovering over the "call" button patches a call through to a member of the serving staff, who replies not in person, but through a video call on their tablet. The demo hits the same bumps in the road that we've seen on many real-world AR apps. If it's too dark, or you're looking at the wrong angle, the camera and software simply won't register the marker. The system might work fine in bright daytime cafes, but could become unusable in late-night bars. The face recognition posited in the teaser video unfortunately didn't surface, either.


In a room isolated from the rest of the restaurant, there's a blue sky projected onto the ceiling. This is the next concept, and the technology used is all pretty familiar. A projector, microphone and Kinect sensor do the impressive part, while a PC joins them all together. Rather than raise a hand (or your voice) in an effort to flag down a waitress or waiter at the Hypothetical Bar in 2022, your group prays to the (projected) heavens.

The Kinect sensor picks this up, and the clouds gather, revealing the "goddess" who'll take your order. While explaining how it worked, a spokesman said that this was really only a starting point, and that it could work for many more gestures, depending on the direction developers are looking to go, not to mention the fact that the Kinect is a middleweight movement sensor these days. Even at this stage, the idea could certainly work in Akihabara's maid café scene.
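How might software spot a prayer? One plausible approach, sketched here purely as a guess, is to check whether a tracked person's hands are pressed together somewhere between chest and head. The joint names, the coordinate layout (x, y, z in meters with y pointing up) and the thresholds are all assumptions for illustration, not the Kinect SDK's actual API or the team's method.

```python
import math


def is_praying(joints, max_hand_gap_m=0.10, chest_slack_m=0.15):
    """Return True if both hands are together between chest and head height.

    `joints` is a hypothetical dict of joint name -> (x, y, z) in meters.
    """
    left, right = joints["hand_left"], joints["hand_right"]
    chest, head = joints["spine_shoulder"], joints["head"]

    hands_together = math.dist(left, right) <= max_hand_gap_m
    hands_height = (left[1] + right[1]) / 2
    at_prayer_height = chest[1] - chest_slack_m <= hands_height <= head[1]
    return hands_together and at_prayer_height


def group_is_praying(people):
    """The demo asked the whole group to pray, so require every tracked person."""
    people = list(people)
    return bool(people) and all(is_praying(p) for p in people)


if __name__ == "__main__":
    guest = {
        "hand_left": (-0.03, 1.2, 2.0),
        "hand_right": (0.03, 1.2, 2.0),
        "spine_shoulder": (0.0, 1.3, 2.0),
        "head": (0.0, 1.6, 2.0),
    }
    print(group_is_praying([guest]))
```

A production version would likely want the pose held for a second or so before summoning anyone, to avoid false alarms every time someone claps.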

Next, we thought we were seated at yet another giant all-in-one touch PC, this one dressed like a table: move the candle, and the touchscreen picks that up and uses it like a cursor. Not this time, as this tech was far more charming... and low-fi. This screen was just a common TV set, laid flat. A PC was connected to it through HDMI, but all the input came from a Wii Remote, suspended a meter above the table. Through infrared, the Wii Remote (chosen because it's cheap) detects the candle's movement and scales it to the TV's 2D surface. Rest the candle on the menu circle, and said menu appears. Moving the candle then navigates through the choices, and because it's not a touchscreen, it otherwise behaves like a table: there's nothing else that's likely to be picked up by the IR sensor, unless you're smoking in a restaurant, you monster.
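The scaling step is easy to picture in code. This sketch assumes the Wii Remote's IR camera reports the candle's position on a 1024 by 768 grid (the range commonly documented for it), that the TV runs at 1080p, and that the camera is perfectly aligned with the screen. The function names and the menu circle's position are made up for illustration.

```python
IR_WIDTH, IR_HEIGHT = 1024, 768  # coordinate range assumed for the IR camera


def ir_to_screen(ir_x, ir_y, screen_w=1920, screen_h=1080):
    """Linearly scale an IR camera point to a pixel on the TV."""
    sx = ir_x / (IR_WIDTH - 1) * (screen_w - 1)
    sy = ir_y / (IR_HEIGHT - 1) * (screen_h - 1)
    return round(sx), round(sy)


def in_circle(point, center, radius):
    """True if `point` lies inside the circle, e.g. the on-screen menu button."""
    dx, dy = point[0] - center[0], point[1] - center[1]
    return dx * dx + dy * dy <= radius * radius


if __name__ == "__main__":
    menu_center, menu_radius = (200, 200), 80   # hypothetical menu circle
    candle = ir_to_screen(110, 140)
    print(candle, in_circle(candle, menu_center, menu_radius))
```

In practice the suspended remote would rarely line up squarely with the TV, so a calibration step (and possibly mirroring an axis) would be needed before the simple scaling above holds.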


Recruit Advanced Technology Lab itself pulled together these concepts into a slightly more joined-up video [below], but it remains exactly that: a concept. According to Recruit, the event was all about grasping beyond the existing tech field. The team expects the event to open up more conversations — more ideas — about what augmented reality, motion capture and the rest can offer in the future. What problems can it solve? What can this tech add?

Also, these working concepts are made from pretty cheap, preexisting tech, so what might developers and makers be capable of with pricier parts? Some of the ideas here are disarmingly superfluous, perhaps unnecessary, but there are definitely going to be more tablet-based food menus and augmented reality in the next few years, whether you ordered it or not.