csam

Latest

X plans to hire 100 content moderators to fill new Trust and Safety center in Austin

X head of business operations Joe Benarroch said the company plans to open a new office in Austin, Texas dedicated to content moderation, according to Bloomberg. The team will focus on stopping the spread of child sexual exploitation materials.

Researchers found child abuse material in the largest AI image generation dataset

A dataset used to train AI image generation tools such as Stable Diffusion has been pulled down after researchers confirmed the presence of CSAM among its 5 billion-plus images.

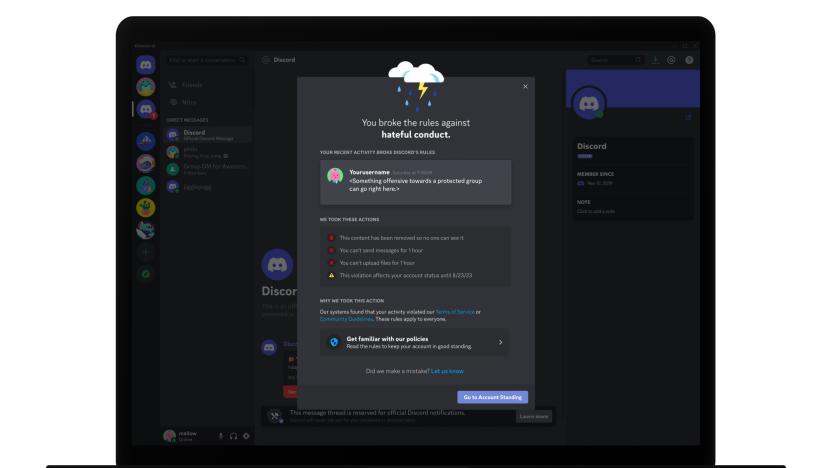

Discord’s latest teen safety blitz starts with content filters and automated warnings

Starting today, Discord is rolling out new automated safety alerts and content filters it claims will better protect teen users. The company’s Teen Safety Assist initiative comes after a recent report found at least 35 cases over six years in which US prosecutors accused adults of using Discord to groom, kidnap and sexually assault children.

Australian regulators fine X for dodging questions about CSAM response

Australia has fined X (formerly Twitter) AUD 610,500 (around $387,000) for failing to fully answer mandatory questions about the platform’s handling of child exploitation material.

Attorneys General from all 50 states urge Congress to help fight AI-generated CSAM

The attorneys general from all 50 states have banded together and sent an open letter to Congress, asking for increased protective measures against AI-enhanced child sexual abuse images. The letter calls on lawmakers to “establish an expert commission to study the means and methods of AI that can be used to exploit children specifically.”

Mastodon's decentralized social network has a major CSAM problem

A study from Stanford shows that Mastodon has a big child sexual abuse material (CSAM) problem.

Senators demand answers from Meta over how it handles CSAM on Instagram

A bipartisan group of senators is said to have asked Meta to explain Instagram's alleged failure to prevent child sexual abuse material (CSAM) from being shared among networks of pedophiles on the platform. Lawmakers from the Senate Judiciary Committee also want to know how Instagram's algorithms brought users who want to share such content together in the first place, according to The Wall Street Journal.

Discord (still) has a child safety issue

A new report has revealed alarming statistics about Discord's issues with child safety.

Meta vows to take action after report found Instagram’s algorithm promoted pedophilia content

Meta has set up an internal task force after reporters discovered a vast network of accounts dedicated to disseminating underage-sex content.

The EARN IT Act will be introduced to Congress for the third time

The controversial EARN IT Act, first introduced in 2020, is returning to Congress after failing twice to land on the president’s desk. The bill is ostensibly intended to minimize the proliferation of child sexual abuse material (CSAM) throughout the web, but detractors say it goes too far and risks further eroding online privacy protections.

Pinterest algorithms are making it easy for creeps to make boards featuring underage girls

An investigation has found that Pinterest's algorithms make it too easy for creeps to create boards featuring underage girls, although tools will soon help users fight back.

Facebook and Instagram will help prevent the spread of teens' intimate photos

Meta is clamping down on sextortion by letting people flag intimate photos.

Apple expands iCloud encryption as it backs away from controversial CSAM scanning plans

Apple is rolling out new security features that include encryption for most of your iCloud data — much to the chagrin of law enforcement.

Twitter has stopped enforcing its COVID-19 misinformation policy

Twitter says it has stopped enforcing its COVID-19 misinformation policy.

Twitter says it inadvertently ran ads on profiles containing CSAM

Twitter has revealed that it mistakenly ran ads on profiles dealing in CSAM.

Twitter planned to build an OnlyFans clone, but CSAM issues reportedly derailed the plan

Employees claimed the company isn't doing enough to tackle harmful sexual content, according to The Verge.

Apple removes mentions of controversial child abuse scanning from its site

Apple has pulled mentions of its controversial CSAM scanning feature from its Child Safety website.

Apple is delaying its child safety features

Privacy advocates criticized the CSAM detection tools, which were supposed to arrive alongside the iOS 15 update.

Researchers say they built a CSAM detection system like Apple's and discovered flaws

Two Princeton University academics say they know the tool Apple built is open to abuse because they spent years developing almost precisely the same system.

Apple acknowledges 'confusion' over child safety updates

Apple's Craig Federighi has acknowledged there was "confusion" over the company's child safety updates, but said he believes the policy is right.