Facebook tests a feature that provides info on article publishers

It also links to related posts and shows how the article is being shared.

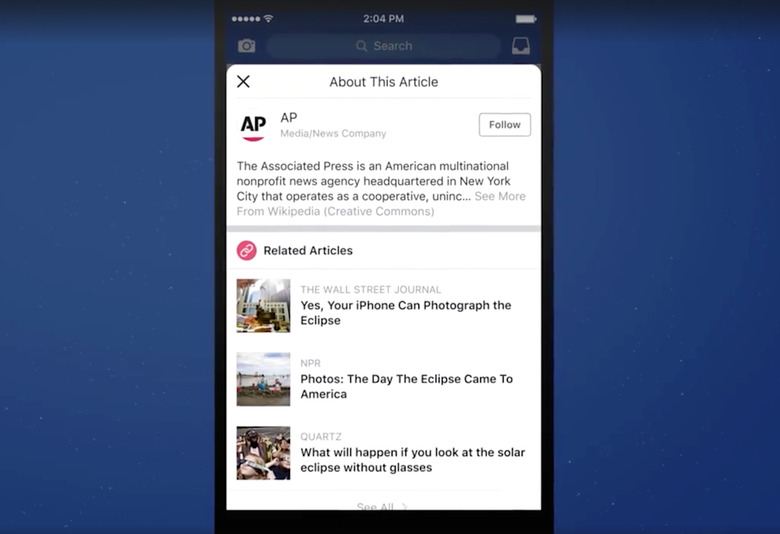

Facebook is still working out how to reduce the reach of fake news and misinformation on its site, and today it starts testing a new feature that sounds like it might be pretty useful. When an article link is shared in someone's News Feed, there will now be a small "i" button that, when clicked, brings up additional information about the publisher and the article. That includes information from the publisher's Wikipedia page, a link to follow its Facebook Page, Trending and Related articles about the same topic, and a graphic showing where and how the article is being shared across Facebook. When any of that information isn't available, Facebook will say so explicitly, which is helpful in itself. For example, if there's no Wikipedia page for the publisher of the piece, that could mean it's not a reputable outlet.

After coming to terms with the fact that its platform contributed to the spread of fake news during last year's presidential election, Facebook has implemented a few features and regulations aimed at combating the problem. It trained its algorithms to deprioritize fake news and clickbait, as well as articles shared by individuals who post at extremely high frequencies. It cut off fake news sites' ad revenue and blocked advertisements created by Pages that share misinformation. Facebook also began introducing fact-checked articles and compiling related articles that cover a Trending topic. Some of Facebook's efforts appear to be having a positive effect: the site deleted tens of thousands of fake accounts ahead of the recent German election. But there have also been some missteps. Facebook spread quite a bit of fake news following this week's massacre in Las Vegas.

Facebook says this information button test is just getting started. "We'll continue to listen to people's feedback and work with publishers to provide people easy access to the contextual information that helps people decide which stories to read, share, and trust, and to improve the experiences people have on Facebook," it said in a statement.

[embed=https://www.facebook.com/plugins/video.php?href=https%3A%2F%2Fwww.facebook.com%2Ffacebook%2Fvideos%2F10156559267856729%2F&width=640&show_text=false&height=343&appId]