ImageRecognition

Latest

AR pioneer Blippar is going out of business

Pioneering augmented reality startup Blippar, once touted as a $1.5 billion British tech unicorn, has stumbled into administration. The company said that it failed to secure the funding it needed to reach profitability following a dispute with one of its shareholders. Corporate insolvency firm David Rubin & Partners announced on Monday that it was appointed as Blippar's administrators by a UK court.

Bing can use your phone camera to search the web

Microsoft isn't about to let Google's visual search features go uncontested. The tech giant has introduced a Visual Search feature to Bing that uses your phone's camera (either a fresh shot or from your camera roll) to identify objects and serve up links related to what you see. Snap a picture of a landmark and you may get travel info, for instance. Logically, Microsoft is also playing up the shopping angle: search for an outfit or home furniture and you'll get prices and shopping locations.

Facebook trained image recognition AI with billions of Instagram pics

Training deep learning models to recognize images, as well as objects within those images, takes quite a bit of effort. Often, each training image has to be labeled by humans, and when you're using millions of images, that process becomes rather labor-intensive. Scaling up to billions of images becomes nearly impossible. So Facebook has been working on a way to train deep learning models with limited human supervision. Instead of relying on hand-labeled data, its researchers have turned to public images that are, in a way, already labeled -- with hashtags.
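
The core trick, treating hashtags as free but noisy labels, can be illustrated with a minimal Python sketch. The captions, the `min_count` threshold and all helper names below are hypothetical; Facebook's actual pipeline isn't described here, and this only shows the general idea of turning captions into a label vocabulary and per-image weak labels.

```python
from collections import Counter

# Hypothetical public posts: (image file, caption). Not real data.
SAMPLE_POSTS = [
    ("img_001.jpg", "Sunday hike #Mountains #nature"),
    ("img_002.jpg", "Lake view #nature #sunset"),
    ("img_003.jpg", "Best brunch ever #food #sunset"),
    ("img_004.jpg", "No tags here"),
]


def extract_hashtags(caption):
    """Return the set of lowercase hashtags (without '#') found in a caption."""
    tags = set()
    for token in caption.split():
        if token.startswith("#") and len(token) > 1:
            tag = token[1:].strip(".,!?").lower()  # drop trailing punctuation
            if tag:
                tags.add(tag)
    return tags


def build_vocab(posts, min_count=2):
    """Keep only hashtags that appear on at least `min_count` images."""
    counts = Counter(tag for _, caption in posts for tag in extract_hashtags(caption))
    return sorted(tag for tag, n in counts.items() if n >= min_count)


def weak_labels(posts, vocab):
    """Map each image to the vocabulary hashtags it carries (its noisy labels)."""
    allowed = set(vocab)
    return {image: sorted(extract_hashtags(caption) & allowed) for image, caption in posts}


if __name__ == "__main__":
    vocab = build_vocab(SAMPLE_POSTS)
    print(vocab)
    print(weak_labels(SAMPLE_POSTS, vocab))
```

Rare tags are filtered out so the model isn't asked to learn classes it has barely seen; images with no surviving tags simply get an empty label list.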

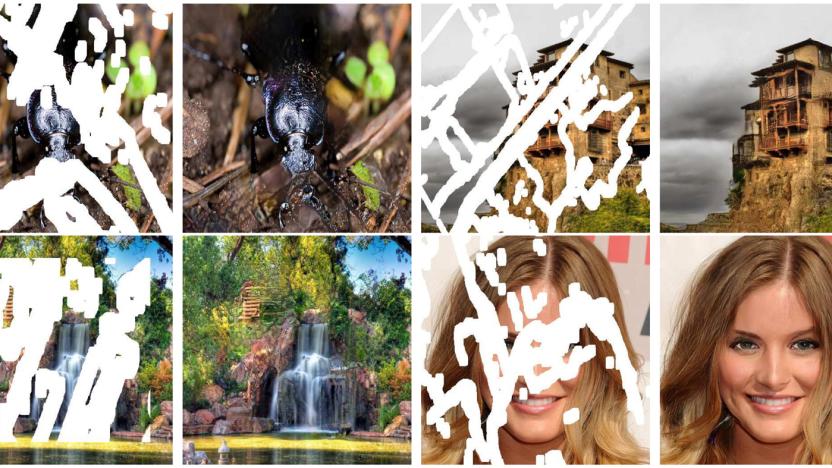

NVIDIA's AI fixes photos by recognizing what's missing

Most image editing tools aren't terribly bright when you ask them to fix a photo. They'll borrow content from adjacent pixels (such as Adobe's recently demonstrated context-aware AI fill), but they can't determine what should have been there -- and that's no good if you're trying to restore a decades-old photo where you know what's absent. NVIDIA might have a solution. It developed a deep learning system that restores photos by determining what should be present in blank or corrupted spaces. If there's a missing eye in a portrait, for instance, it knows to insert one even if the eye area is largely obscured.
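
As we understand it, NVIDIA's published research behind this system relies on "partial convolutions": each filter looks only at known pixels, rescales its output to compensate for the missing ones, and marks its output as known, so holes shrink layer by layer. The one-dimensional Python sketch below shows only that masking-and-renormalization step, with a hypothetical averaging kernel standing in for learned weights. It is not NVIDIA's model; the learned part (knowing an eye belongs in the gap) is exactly what it leaves out.

```python
def partial_conv1d(values, mask, weights):
    """One 1D partial-convolution pass over 'valid' windows (no padding, no bias).

    Only positions with mask == 1 contribute. The weighted sum is rescaled by
    window_size / number_of_known_positions so holes don't drag values toward 0.
    Returns (outputs, updated_mask).
    """
    k = len(weights)
    outputs, new_mask = [], []
    for start in range(len(values) - k + 1):
        window_vals = values[start:start + k]
        window_mask = mask[start:start + k]
        known = sum(window_mask)
        if known == 0:
            # No known pixels under the kernel: the output stays a hole.
            outputs.append(0.0)
            new_mask.append(0)
            continue
        acc = sum(w * v * m for w, v, m in zip(weights, window_vals, window_mask))
        outputs.append(acc * k / known)
        new_mask.append(1)  # at least one known input, so this output is now known
    return outputs, new_mask


if __name__ == "__main__":
    signal = [1.0, 2.0, 0.0, 4.0, 5.0]  # index 2 is a "missing" pixel
    known = [1, 1, 0, 1, 1]
    box = [1 / 3, 1 / 3, 1 / 3]  # hypothetical averaging kernel
    print(partial_conv1d(signal, known, box))
```

Because the missing pixel is masked out, its stored value is ignored entirely; the window centered on it is filled from its known neighbors.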

Google Lens visual search rolls out on iOS

After making a slow march across Android devices, Google's AI-powered visual search is coming to iOS. Apple device owners should see a preview of Google Lens pop up in the latest version of their Google Photos app over the next week. In case you've forgotten how it works, the idea is that your camera will recognize items in a picture and be able to take action with tie-ins to Google Assistant. Of course, now that you can use the technology the question is whether or not you should.

ARM's latest processors are designed for mobile AI

ARM isn't content to offer processor designs that are kinda-sorta ready for AI. The company has unveiled Project Trillium, a combination of hardware and software ingredients designed explicitly to speed up AI-related technologies like machine learning and neural networks. The highlights, as usual, are the chips: ARM ML promises to be far more efficient for machine learning than a regular CPU or graphics chip, with two to four times the real-world throughput. ARM OD, meanwhile, is all about object detection. It can spot "virtually unlimited" subjects in real time at 1080p and 60 frames per second, and focuses on people in particular -- on top of recognizing faces, it can detect which way people are facing, as well as their poses and gestures.

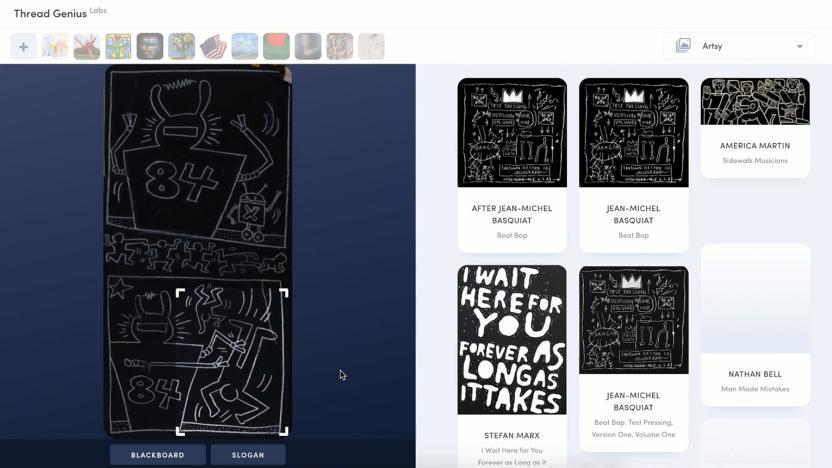

Sotheby's wants AI to find your next art purchase

Most folks don't know much about art, but do know what they like. Auction firm Sotheby's has embraced that idea with its acquisition of Thread Genius, a company that uses AI to find art based on images of paintings, watches, furniture and other items. Sotheby's said it will marry the tech with data it already stores to help clients find objects that match their taste and budgets (terms of the sale weren't disclosed).

Google tool lets you train AI without writing code

In many ways, the biggest challenge in widening the adoption of AI isn't making it better -- it's making the tech accessible to more companies. You typically need at least some programming to train a machine learning system, which rules it out for companies that can't justify a data scientist for the task. Google may have a solution: it just unveiled an alpha release of Cloud AutoML Vision, the first in a set of tools that train AI without requiring code. This first service trains image recognition systems using a drag-and-drop interface -- you just have to load photos, tag them and start the training process.

Facebook's revenge porn prevention test has users upload photos

The Australian government and Facebook have teamed up in the fight against revenge porn. As the Australian Broadcasting Corporation reports, alongside Australia's Office of the eSafety Commissioner, Facebook has launched a pilot program aimed at not just curtailing the spread of revenge porn once it begins, but preventing it altogether.

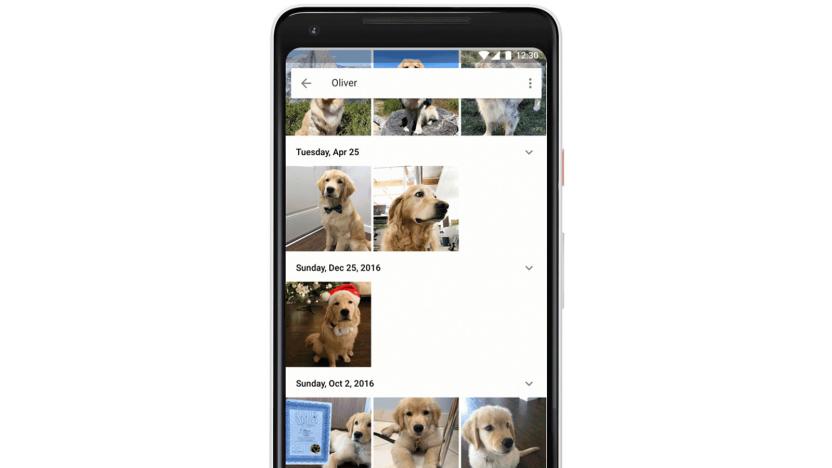

Google Photos can pick your pet out of a furry lineup

Google Photos has long been adept at recognizing animals in a generic sense. But let's be honest: the real reason you're digging through photos is to find shots of your specific pets when they were little balls of fur. Accordingly, Google has made those pet searches much easier. Photos is now smart enough to recognize individual cats and dogs, placing their shots alongside people. You can name pets, too, so you can look for Chairman Meow or Rover instead of typing in generic "cat" and "dog" queries.

Samsung is the latest tech titan to open an AI lab in Canada

If it wasn't already clear that Canada is becoming a hotbed for AI research, it is now: Samsung has opened an AI lab at the Université de Montréal. The school's faculty and students (including long-time Samsung partner Prof. Yoshua Bengio) will collaborate with South Korean researchers on a slew of AI-related projects, including self-driving car technology, image recognition, translation and robots. While you may not see the first fruits of this lab for years, it underscores both Samsung's increasing dependence on AI and the tech industry's rapid shift to the north.

Fujifilm's X-E3 overhauled with 4K video and a touchscreen

Fujifilm fans have waited a long time for an X-E2 replacement, but it appears to have been worth it. The X-E3 rangefinder-style mirrorless has arrived with thoroughly modern features and is now Fujifilm's smallest viewfinder-equipped camera. It got a much-needed bump to the 24.3-megapixel X-Trans III sensor used on other X-models, plus 4K video, a touchscreen, Bluetooth LE and more. For $900, it will give potential buyers of Sony's A6300 or the Canon EOS M5 something to ponder.

Google's new Street View cameras help AI map the real world

Google's Street View cameras haven't changed significantly in 8 years, and that's a problem when the technology world most certainly has. How is the company supposed to fulfill its AI ambitions with 2009-era hardware? Thankfully, it won't have to. Google has revealed to Wired that it's implementing a brand new camera design that should not only produce higher-quality Street View imagery but also prove crucial to Google's use of AI to index real-world locations.

Drones will watch Australian beaches for sharks with AI help

Humans aren't particularly good at spotting sharks using aerial data. At best, they'll accurately pinpoint sharks 30 percent of the time -- not very helpful for swimmers worried about stepping into the water. Australia, however, is about to get a more reliable way of spotting these undersea predators. As of September, Little Ripper drones will monitor some Australian beaches for signs of sharks, and pass along their imagery to an AI system that can identify sharks in real time with 90 percent accuracy. Humans will still run the software (someone has to verify the results), but this highly automated system could be quick and reliable enough to save lives.

You can confuse self-driving cars by altering street signs

While car makers and regulators are mostly worried about the possibility of self-driving car hacks, University of Washington researchers are concerned about a more practical threat: defacing street signs. They've learned that it's relatively easy to throw off an autonomous vehicle's image recognition system by strategically using stickers to alter street signs. If attackers know how a car classifies the objects it sees and have photos of the signs they're targeting, they can generate stickers that trick the car into believing a sign means something else. For instance, "love" and "hate" stickers placed on a stop sign made a computer vision algorithm believe it was really a speed limit notice.
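
Why can a few stickers flip a classifier's decision? A minimal Python sketch, assuming a toy linear "stop vs. speed limit" classifier, makes the mechanism visible. Note that this swaps in a simpler technique than the researchers': their attack targeted deep networks with perturbations designed to survive real-world viewing conditions, whereas this uses a single gradient-sign (FGSM-style) step. The weights, feature values and sticker mask are all hypothetical.

```python
def sign(x):
    """Return 1, -1 or 0 depending on the sign of x."""
    return (x > 0) - (x < 0)


# Hypothetical toy classifier: a positive score means "stop sign".
WEIGHTS = [0.9, -0.4, 0.7, -0.2, 0.5, 0.3]
BIAS = -0.5


def classify(features):
    score = sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS
    return "stop" if score > 0 else "speed_limit"


def sticker_attack(features, sticker_mask, eps):
    """Nudge only sticker-covered features against the 'stop' direction.

    For a linear score, the gradient with respect to the input is WEIGHTS,
    so stepping each covered feature by -eps * sign(w) gives the largest
    score drop allowed by a per-feature budget of eps. Values are clipped
    to [0, 1] like pixel intensities.
    """
    adversarial = []
    for x, w, covered in zip(features, WEIGHTS, sticker_mask):
        if covered:
            x = min(1.0, max(0.0, x - eps * sign(w)))
        adversarial.append(x)
    return adversarial


if __name__ == "__main__":
    sign_image = [0.8, 0.2, 0.6, 0.5, 0.4, 0.3]
    stickers = [1, 0, 1, 0, 1, 0]  # only half the "pixels" are covered
    attacked = sticker_attack(sign_image, stickers, eps=0.4)
    print(classify(sign_image), "->", classify(attacked))
```

The key ingredient is knowledge of the model (here, the sign of each weight): it tells the attacker which changes to make in the small region a sticker can cover.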

Police body cams will soon use AI to find missing people

Motorola Solutions -- not to be confused with smartphone maker Motorola -- is adding machine learning to its surveillance equipment used by law enforcement personnel. Officers in the police department of Waukegan, Illinois, north of Chicago, are already suiting up with the company's Si500 body cams. But those same cameras could soon pack AI that helps officers identify objects and missing people. A prototype device is in the works with Neurala, a deep learning startup that recently integrated its software with drones to track poachers in Africa.

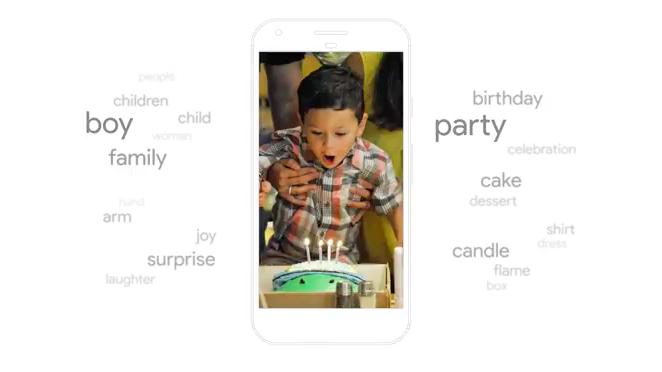

Google wants to speed up image recognition in mobile apps

Cloud-based AI is so last year, because now the major push from companies like chip-designer ARM, Facebook and Apple is to fit deep learning onto your smartphone. Google wants to bring deep learning to more developers, so it has unveiled a mobile AI vision model called MobileNets. The idea is to enable low-powered image recognition that's already trained, so that developers can add imaging features without using slow, data-hungry and potentially intrusive cloud computing.
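
Much of MobileNets' efficiency comes from depthwise separable convolutions, which split a standard convolution into a per-channel spatial filter followed by a 1x1 "pointwise" convolution that mixes channels. The Python sketch below compares multiply-add counts for the two approaches using the cost formulas from the MobileNets paper; the layer sizes are hypothetical, chosen only to illustrate the arithmetic.

```python
def standard_conv_cost(dk, m, n, df):
    """Multiply-adds for a dk x dk convolution with m input channels,
    n output channels and a df x df output feature map."""
    return dk * dk * m * n * df * df


def depthwise_separable_cost(dk, m, n, df):
    """Depthwise dk x dk filter per input channel, then a 1x1 pointwise
    convolution mapping m channels to n channels."""
    depthwise = dk * dk * m * df * df
    pointwise = m * n * df * df
    return depthwise + pointwise


if __name__ == "__main__":
    # Hypothetical layer: 3x3 kernel, 256 -> 256 channels, 14x14 feature map.
    std = standard_conv_cost(3, 256, 256, 14)
    sep = depthwise_separable_cost(3, 256, 256, 14)
    # The cost ratio simplifies to 1/n + 1/dk**2.
    print(std, sep, round(sep / std, 3))
```

With 3x3 kernels the separable version needs roughly one-ninth of the computation, which is a large part of why such models can run on a phone rather than in the cloud.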

Zepp phone apps use AI to study your basketball shots

You may know Zepp for sports tracking sensors you can slap on your baseball bat or soccer ball, but its latest tracking involves little more than your phone and a good view of the action. Its game recording and training apps (Android, iOS) are adding a dash of AI technology (namely, computer vision) to analyze your baseball swings, golf swings and basketball shots. If your three-pointer throwing needs work, you just need to point your phone's camera at the court and start capturing. You can share the videos and performance data with others, too, in case you need to prove your skills to recruiters.

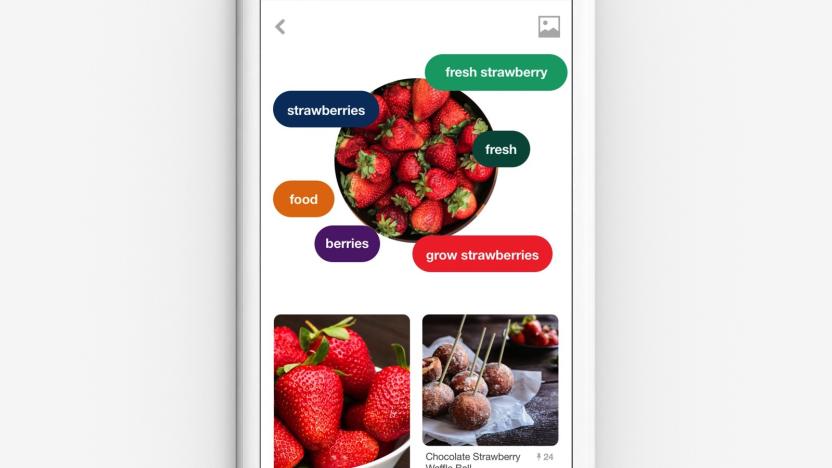

Pinterest Lens finds recipes based on your weekend brunch pics

Pinterest announced its image recognition tool back in February, but the company has already added a number of improvements since then. Today, the company is revealing the latest addition to Lens: full dish recognition. This means that when you snap a pic of your plate with the Pinterest app, the software will find full recipes for complete dishes rather than just options based on single ingredients. This update to Lens isn't all the company is doing for aspiring cooks though.

Google Lens resurfaces questions about AI and human identity

Today at the company's annual developer conference, Google CEO Sundar Pichai uttered a phrase that will no doubt be repeated in corporate boardrooms across the world for the foreseeable future: "AI first." It wasn't the first we've heard of the formerly "mobile-first" company's focus on artificial intelligence, but Google I/O 2017 marked the first time we saw many of the tools that will back up that new catchphrase.