Researchers develop method for real-time speech animation

Audio can be incorporated automatically without time-consuming manual animation.

Researchers at the University of East Anglia, Caltech, Carnegie Mellon University and Disney have created a way to animate speech in real-time. With their method, rather than having skilled animators manually match an animated character's mouth to recorded speech, new dialogue can be incorporated automatically in much less time with a lot less effort.

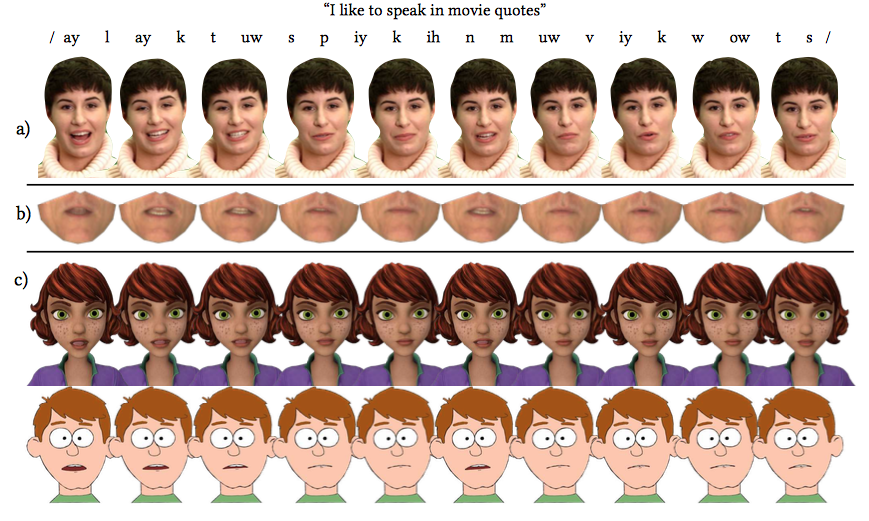

To do this, the researchers recorded over eight hours of audio and video of a speaker reciting more than 2500 different sentences. The speaker's face was tracked as they spoke, which was used to create a reference face for an animation model. Off-the-shelf speech recognition software was then used to transcribe the speech sounds. All of this information was subsequently used to train a neural network to animate a reference face, frame-by-frame, based on phonemes -- or individual distinct bits of sound -- pulled from new audio. That reference face was then superimposed on and matched to computer generated characters in real-time.

Training the AI with the reference video and audio takes only a couple of hours and this method lets you use speech from any speaker with any accent and even in different languages. It also accommodates singing. "Realistic speech animation is essential for effective character animation. Done badly, it can be distracting and lead to a box office flop," said lead researcher Sarah Taylor in a statement. "Doing it well however is both time consuming and costly as it has to be manually produced by a skilled animator. Our goal is to automatically generate production-quality animated speech for any style of character, given only audio speech as an input."

This new method was recently published in ACM Transactions on Graphics and presented at SIGGRAPH 2017. You can check out a video of the method at work through the study's supplementary material download here.