neuralnetwork

Latest

Astronomers use AI to reduce analysis time from months to seconds

Gravitational lensing is when the image of a distant object in space -- like a galaxy, for example -- is distorted and multiplied by the gravity of a massive object, such as a galaxy cluster, lying in front of the smaller, faraway object. It's a useful phenomenon that has helped scientists discover exoplanets, understand galaxy evolution, spot a super bright galaxy, detect black holes and prove Einstein right. But analyzing images affected by gravitational lensing takes a really long time, requiring researchers to compare real images with simulated ones. Just one lensing effect can take weeks or months to analyze.

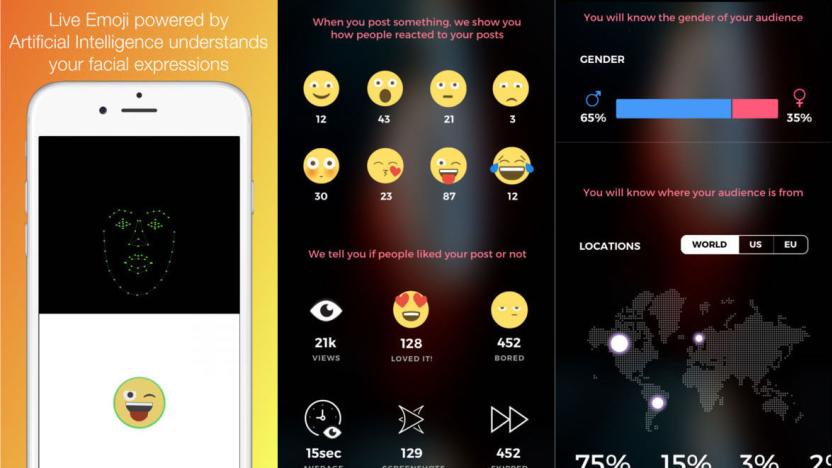

Polygram is a new social network powered by facial recognition

There's a new social network in town and it's packed with some pretty smart and savvy features. Polygram's main contribution to the hard-to-break-into social media world is its ability to detect facial expressions, allowing users to respond to messages with an emoji based on their actual expression. And rather than just tallying likes or a selection of reactions that viewers have to choose between and click, Polygram allows users to see the face-based emotional response of those who viewed their post.

Disney Research taught AI how to judge short stories

Disney researchers have been coming up with some striking new technology lately, including a method for real-time speech animation, shared augmented reality and some creepy face-projection tech for live performances. Now, researchers at Disney and the University of Massachusetts Boston have been working on neural networks that can evaluate short stories. While these AIs don't (yet) analyze a story like a professional literary critic, the software tries to predict which stories will be most popular. "Our neural networks had some success in predicting the popularity of stories," said Disney Research scientist Boyang "Albert" Li in a statement. "You can't yet use them to pick out winners for your local writing competition, but they can be used to guide future research."

Researchers use AI to monitor hospital staff hygiene

Hospital-acquired infections are a pesky problem, and around one in 25 hospital patients has at least one healthcare-associated illness at any given time. To combat this issue, a research team based at Stanford University turned to depth cameras and computer vision to observe activity on hospital wards -- a system that could be used to track hygienic practices of hospital staff and visitors in order to spot behaviors that might contribute to the spread of infection. The work is being presented at the Machine Learning in Healthcare Conference later this week.

An AI ‘nose’ can remember different scents

Russian researchers are using deep learning neural networks to sniff out potential scent-based threats. The technique is a bit dense (as anything with neural nets tends to be), but the gist is that the electronic "nose" can remember new smells and recognize them after the fact.

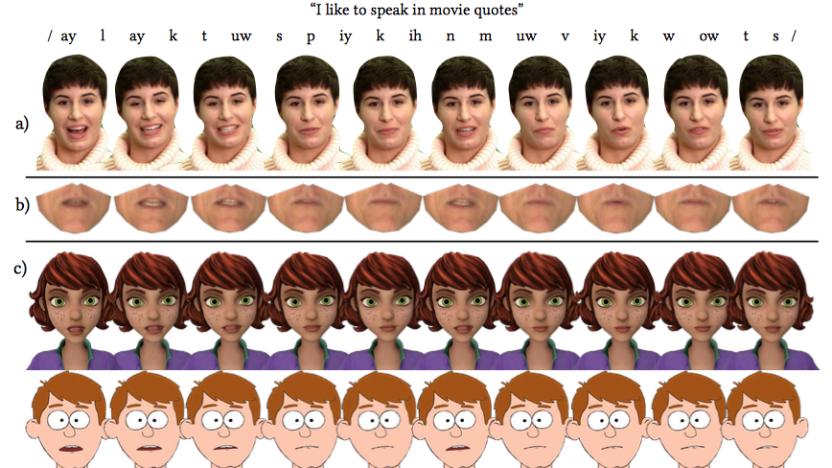

Researchers develop method for real-time speech animation

Researchers at the University of East Anglia, Caltech, Carnegie Mellon University and Disney have created a way to animate speech in real-time. With their method, rather than having skilled animators manually match an animated character's mouth to recorded speech, new dialogue can be incorporated automatically in much less time with a lot less effort.

Your next craft beer might be named by a neural network

Researcher Janelle Shane has used AI to come up with names for paint colors, metal bands and guinea pigs in the past. She's even used it to generate wonderfully weird pickup lines. Now, she's turned her AI naming capabilities towards beer.

Facebook translations are now entirely powered by AI

Facebook has been working on changing how it translates text in posts and comments and today it announced that its transition is complete. It means that translations should be quite a bit more accurate going forward.

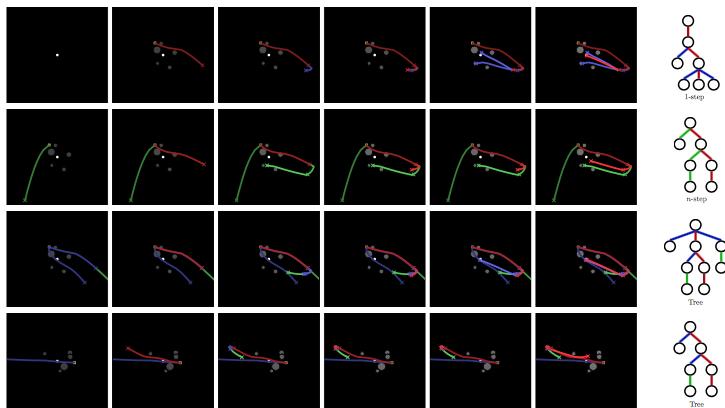

DeepMind researchers create AI with an ‘imagination’

Being able to reason through potential future events is something humans are pretty good at doing, but that kind of ability is a real challenge when it comes to training AI. Taking those reasoning skills and using them to create a plan is even more difficult, but the Google DeepMind team has begun to tackle this problem. In a recent blog post, researchers describe new approaches they've developed for introducing "imagination-based planning" to AI.

MIT's AI knows what's in your cookies just by looking at them

Imagine an app that can help you figure out how to replicate what you're eating in a restaurant and help track your calorie intake just by taking a picture of your plate. A team of MIT CSAIL researchers has developed an artificial intelligence system that has the potential to evolve into that kind of application. They call the AI Pic2Recipe, because it can predict the ingredients and recipe used to make a dish from a single snapshot. If that sounds familiar, it's probably because of "See Food," the fictional app that made an appearance in HBO's Silicon Valley, and a new-ish Pinterest feature that recognizes the most prominent ingredients in a picture of food.

High-tech solutions top the list in the fight against eye disease

"The eyes are the window to the soul," the adage goes, but these days our eyes could be better compared to our ethernet connection to the world. According to a 2006 study conducted by the University of Pennsylvania, the human retina is capable of transmitting 10 million bits of information per second. But as potent as our visual capabilities are, there's a whole lot that can go wrong with the human eye. Cataracts, glaucoma and age-related macular degeneration (AMD) are three of the leading causes of blindness the world over. Though we may not have robotic ocular prosthetics just yet, a number of recent ophthalmological advancements will help keep the blinds over those windows from being lowered.

Researchers make a surprisingly smooth artificial video of Obama

Translating audio into realistic-looking video of a person speaking is quite a challenge. Often, the resulting video just looks off -- a problem known as the uncanny valley, in which human replicas that appear almost but not quite real come off as eerie or creepy. However, researchers at the University of Washington have made some serious headway in overcoming this issue and they did it using audio and video of Barack Obama.

Scientists made an AI that can read minds

Whether it's using AI to help organize a Lego collection or relying on an algorithm to protect our cities, deep learning neural networks seemingly become more impressive and complex each day. Now, however, some scientists are pushing the capabilities of these algorithms to a whole new level -- they're trying to use them to read minds.

Artistic AI paints portraits of people who aren't really there

Mike Tyka paints the portraits of people who don't exist. The subjects of his ephemeral artwork are not born from any brush. Rather, they are sculpted -- roughly -- from the digital imagination of his computer's neural network.

Sorting Lego sucks, so here’s an AI that does it for you

Neural networks are currently being tasked with everything from adding animations to video games to reproducing images taken from MRI scans. Training the AI, which needs to be fed vast amounts of data, can be a slog and even then it may not produce completely accurate results. But when it comes to recognizing and classifying images and objects, the AI can cut out a lot of leg work, as Jacques Mattheij found out when he built his own neural network for the novel task of sorting through his massive Lego collection.

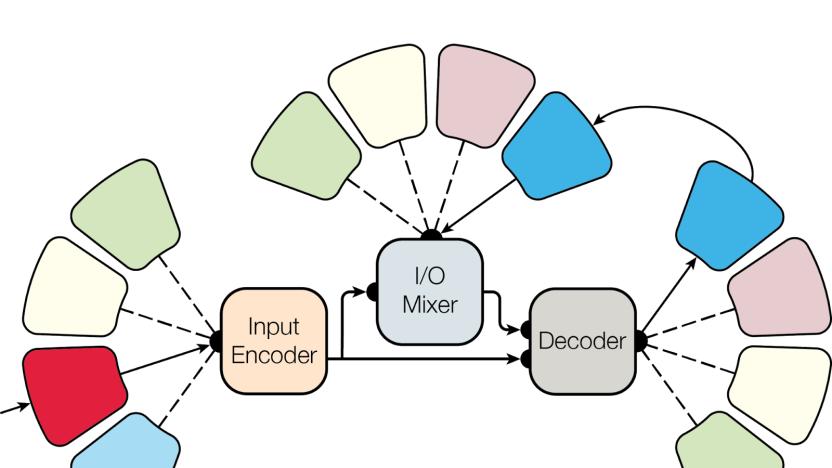

Google's neural network is a multi-tasking pro

Neural networks have been trained to complete a number of different tasks including generating pickup lines, adding animation to video games, and guiding robots to grab objects. But for the most part, these systems are limited to doing one task really well. Trying to train a neural network to do an additional task usually makes it much worse at its first.

Baidu’s text-to-speech system mimics a variety of accents ‘perfectly’

Chinese tech giant Baidu's text-to-speech system, Deep Voice, is making a lot of progress toward sounding more human. The latest news about the tech is a set of audio samples showcasing its ability to accurately portray differences in regional accents. The company says that the new version, aptly named Deep Voice 2, has been able to "learn from hundreds of unique voices from less than a half an hour of data per speaker, while achieving high audio quality." That's compared to the 20 hours of training it took to get similar results from the previous iteration, for a single voice, further pushing its efficiency past Google's WaveNet in a few months' time.

Robot uses machine-learning to grab objects on the first try

Training robots how to grasp various objects without dropping them usually requires a lot of practice. But a new robot, designed by researchers at UC Berkeley and Siemens and described in an upcoming paper, can learn how to grip new objects just by studying a database of 3D shapes.

UC Berkeley researchers teach computers to be curious

When you played through Super Mario Bros. or Doom for the very first time, chances are you didn't try to speedrun the entire game but instead started exploring -- this despite not really knowing what to expect around the next corner. It's that same sense of curiosity, the desire to screw around in a digital landscape just to see what happens, that a team of researchers at UC Berkeley has imparted to its computer algorithm. And it could drastically advance the field of artificial intelligence.

'Gray Pubic' is proof even AI can't get paint names right

When the robots take over your job, remember this: you can try naming paints for a living. Research scientist and neural network enthusiast Janelle Shane experimented with the idea of using AI to name new paints. After all, we keep coming up with new shades, and professional paint naming doesn't exactly sound lucrative. As Shane learned, though, it's not easy training a neural network to conjure up names that are both creative and appropriate.