Engadget at 15: A look at how much tech has changed

Here are 15 things you couldn't do 15 years ago, when Engadget was born.

A lot has changed since Engadget was born, both in the gadgets we use and what we do with them on a regular basis. When the site started in 2004, fitness trackers, voice assistants and electric cars were the stuff of fiction. Now most of these are commonplace, so much so that we put our trust in them on a daily basis. To celebrate Engadget's 15th birthday, here are 15 things that didn't exist 15 years ago.

Hold the world in your hand

In the past, mobile phones could do mostly two things: make calls and send (and receive) text messages. If you had a fancier model, you could also play a little game. Features were added over the years, including music playback and photography. Smartphones like the BlackBerry and the Palm Treo that combined the functionality of PDAs and phones eventually came about, but they were mostly designed for business users.

The iPhone, which debuted in 2007, changed the landscape. It popularized the notion of an all-touchscreen phone (even though similar handsets like the LG Prada preceded it), did away with the concept of a stylus and made it a lot easier to download and install apps. Importantly, the iPhone was also a catalyst for smartphones being a tool for everyday consumers instead of just executives with an expense account.

Since then, the smartphone has become a near-ubiquitous computing platform. For many, it's the sole means of communicating with the world. It's capable of connecting you with the sum total of human knowledge as well as letting you create, work and play. These tiny, handheld pieces of tech are TVs, HiFis and game consoles, even running zeitgeisty games like Fortnite.

Thanks to NFC payments, you can buy stuff without taking out your wallet, and thanks to map apps, you can use your phone to get around the city. With social media, it's possible to start a revolution from your phone, show the world your genius (or madness) and connect to everyone around you.

Capture everything

The best camera, so the saying goes, is the one you have with you. As smartphones have become ubiquitous, so too has access to easy-to-use cameras. It wasn't long before these tiny smartphone lenses were being used in place of other, low-cost point-and-shoots. As the phones got more popular and more expensive, the cameras got better, to the point where they became the choice of many professionals worldwide.

Smartphone cameras may not be as good as DSLRs, but they are more than enough for the casual photographer. The year 2015 was a watershed, as Flickr revealed that the most popular camera maker on its site was Apple. Since then, low- and mid-range camera sales have plummeted as casual and amateur photographers place their trust in their phones. Pretty much wherever we go, we can capture what's going on in stunning detail and clarity.

Most phones have two sets of cameras: one on the back, for photography, and one up front, for pictures of your face. There have always been selfies, of course; it's just that a front-facing camera made them a lot easier. The selfie is so ingrained these days that it's even a proper word in a proper dictionary. Of course, this isn't without its side effects either, including body dysmorphia and the dreaded dick pic, not to mention that anyone can take your picture, whether you consent to it or not.

Effortlessly track your movements

It's odd to think that the first devices in the quantified-self movement weren't worn on the wrist. The first products in this category were Nike's sneaker-worn running sensor for the iPod and Fitbit's belt-worn pedometers. It wasn't until 2012 that a number of projects, including the first Nike+ FuelBand and Fitbit Flex, tried to conquer our wrists.

Soon after, Pebble started showing off its vision for a smartwatch that could engage with your smartphone. The original device could show notifications from your emails or text messages and could run basic apps. And yet it would be a matter of months between Pebble's arrival and Samsung showing off the first in its Galaxy Gear range of smartwatches. In 2015, Apple would join the party with its own timepiece too.

Since 2013, smartwatches have gotten smaller, slightly better looking and a whole lot more popular. This year, one in six American adults wears these devices, which are now tiny, wrist-worn computers. As well as connecting with your smartphone and tracking your movement, some can make calls without a phone, and some can even save your life.

Maps on your phone

In the olden days of yore, people used to use paper maps to find their way in unfamiliar territory. Then somewhere along the way, GPS came along and you could buy a handy GPS navigation unit for your car. There were even handheld models you could use for hiking or geocaching. And then the internet arrived, and lo did the navigation angels sing, as MapQuest and other online maps guided the lost and adrift to their destinations -- as long as you could remember to print out the directions beforehand anyway.

It wasn't until online maps -- be they Google Maps or Apple Maps -- arrived on the phone around 2008 that our lives truly changed for the better. No longer did you have to get a dedicated GPS device or pay for updated maps every year or so. Plus, these map apps not only told you where to go and how to get there but also gave you real-time traffic information, public transit directions (and how much the trip would cost) and even recommendations for nearby restaurants and shops. Google Maps even has Street View, which lets you see panoramic images of what your destination looks like. On top of that, these online maps work in nearly every country you visit, which is a boon for travelers everywhere.

Step into another world

Virtual reality has ridden the hype train more than once, and every time it's ended unpleasantly. Anyone who remembers Virtuality or the Forte VFX 1 will know how poor commercial VR has been. In 2013, VR's renaissance began, as Oculus VR began shipping its first development kits to initial Kickstarter backers.

Those initial headsets quickly developed a passionate fan base as people started to believe that this time, VR had arrived. A bandwagon formed, and other companies began producing their own VR platforms. Facebook paid $2 billion to own Oculus, which, it believed at the time, was the next great step in computing.

Others joined in, including Sony, HTC/Valve and Microsoft, all with their own spins on a VR headset that hooked up to a PC or console. Samsung and Google took a different angle, using smartphones and crude visors to make lightweight, and affordable, options. Now people had a whole host of ways to step into the virtual world and experience something new.

And then there's the advent of augmented reality, where people can use head-mounted goggles, or their smartphone, to project digital information onto the real world. It's in its infancy today, but give it another five years and we may all be living in a quasi-virtual space. Or that hype train will have nose-dived off a bridge and we'll all be waiting for the next big thing to roll around.

See, listen and play everything in seconds

The internet has made a lot of things shorter, like the distance you need to travel to rent a new movie or buy a new CD. Before, you couldn't do either of those with the push of a button. But now we don't even need to buy these things, because streaming services exist. Where once we'd be limited by the amount of money we had, now we can pay a monthly fee to have "everything."

Even the high-price world of games has its own Netflix-esque version in the form of services like Xbox Game Pass. For $10 per month, you can get access to more than 100 titles, ranging from older games to brand-new releases. Similarly, Spotify enables you to play upward of 30 million songs for a $9.99 monthly fee.

This business model has been the most successful in the video market, where Netflix, Amazon Prime and Hulu dominate. A small, monthly fee brings you thousands of hours of content, both movies and TV shows from a variety of studios. These outfits are now so big and so powerful that they're winning Oscars and making billions in profits every year.

Connect to everyone

Social media existed before Engadget was born -- Friendster started in 2002 and MySpace in 2003 -- but it wasn't until the past decade that it swallowed the world. Facebook started out as a colleges-only platform in 2004 and opened to everyone else in 2006. Since then, the site has become a major source of news and (mis)information as well as a central hub to our lives. It became a place to keep in touch with family and friends, learn of important events, and, of course, get reminders of your friends' birthdays.

Twitter, which launched a few months before Facebook opened its doors to the public, fulfilled a similar desire to be informed. The platform's river of short, easily shareable messages became a way of keeping tabs on what was going on in a much more immediate way. Twitter also allowed politicians and celebrities to control their own media message, and fans could interact with their idols directly too. More than that, Facebook and Twitter were also instrumental in helping people make new connections with like-minded folks, build communities and even meet potential romantic interests.

The power of social media became known to all during the Arab Spring, when protestors used the platforms to rally against oppressive regimes in the Middle East. Social media platforms were an alternative to state-funded media and proved useful as a way for citizens to spread information to one another. We believed at the time that it was a force for good, helping everyone embrace democracy and transparency.

In later years, however, we saw social media's ugly side, as studies suggested that these services were contributing to depression, dysmorphia and loneliness. Users are often fooled into thinking that everyone else's lives are better or cooler than their own, especially on photo-centric networks like Instagram and Snapchat. It's also easy to bully and harass vulnerable people, with sometimes tragic consequences.

There are also the downsides of what's being described as surveillance capitalism, as sharing our innermost thoughts comes with a price -- specifically that what we tell our friends, our interests and our photos can be monetized and packaged for the highest bidder. What we gained in connections around the world, we lost in individual privacy.

And as we've learned in the past few years, the systems were also easily gamed by those who wanted to wreck our democracy. Social media has gone from a way to reach out to friends across the world to a way of spreading disinformation and sowing discord. Its aims may not be bad, but more effort needs to be made by those in charge to keep people safer.

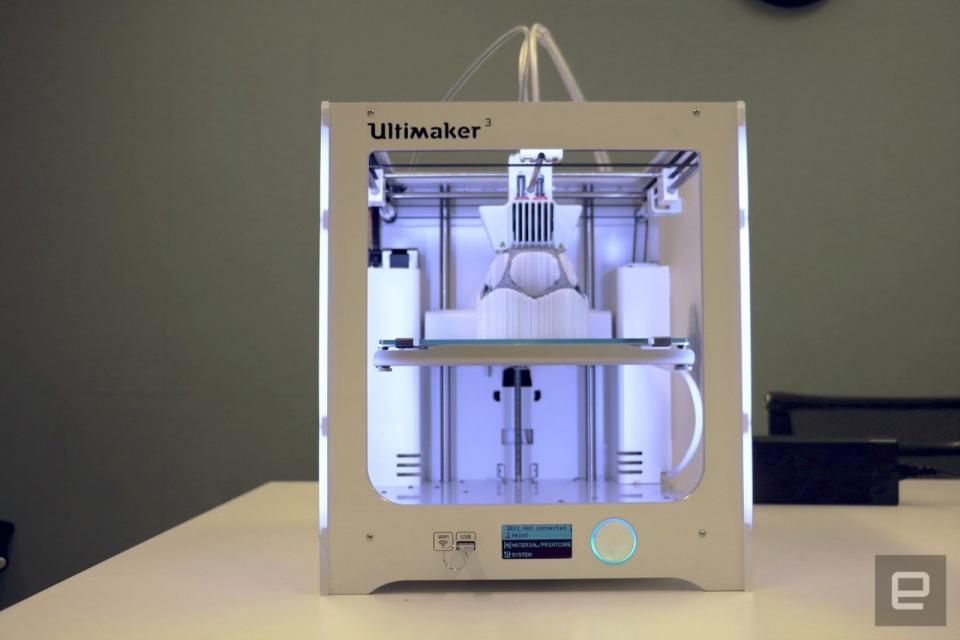

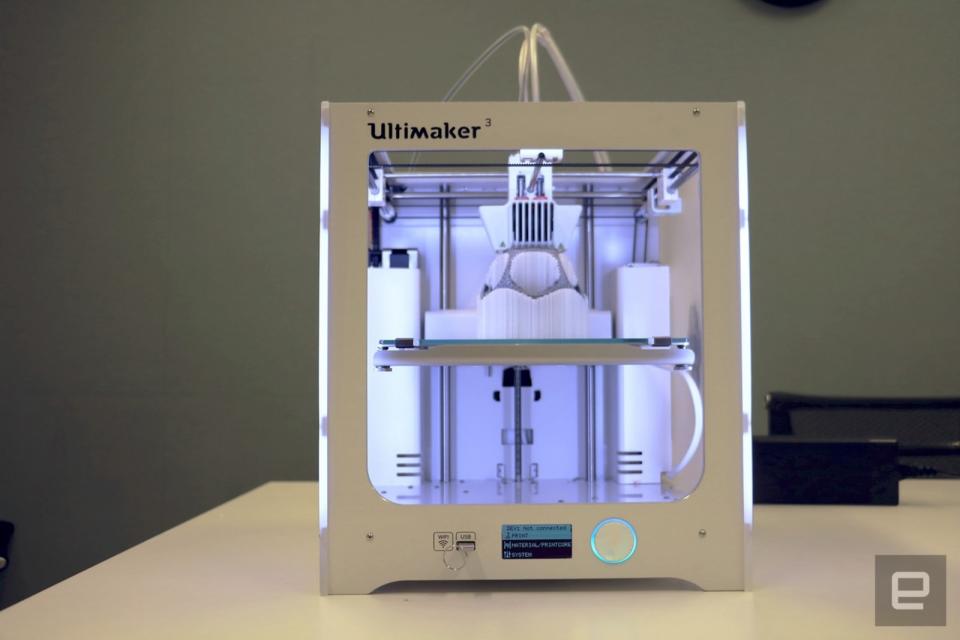

Create anything (as long as it is small and plastic)

It wasn't the first 3D printer or the first company to sell its wares, but MakerBot's arrival still feels like a watershed moment. In 2009, the company began selling relatively affordable devices to people to create their own CNC-based, rapid-prototyping machines. And it wouldn't be long before a huge number of companies, from Formlabs (2011) to 3Doodler (2012), started building and selling their own versions.

More than anything else, it was the promise of what home 3D printing could become that was so enticing: a tiny factory in your own home that could concoct whatever you needed, whenever you needed it. There was a reason that MakerBot called its flagship device the Replicator in homage to Star Trek: The Next Generation.

But while 3D printing hasn't yet fulfilled its potential, it has become a vital tool for creators and hackers. The technology is used to help surgeons practice for tricky operations on models of the patient. It's used to help lower the cost of dentures for prisoners, and it even helps companies create customized products, like Gillette's branded razors.

We're not at the point where everybody needs a 3D printer, but it's certainly a technology that will continue to quietly evolve. At least until we get real replicators.

Lean back

Way back when, we had two options for computing: You could use a desktop, or you could use a laptop. Tablet PCs, the likes of which existed before the iPad, were mostly used in industrial or educational settings, with limited appeal and a high cost. In 2010, that changed with the advent of Apple's iPad, which was designed for everyone and at a price of just $499 was relatively affordable.

Samsung's Galaxy Tab, Amazon's Kindle Fire and a whole host of others soon followed, all along similar lines. These devices were designed for media consumption; that big screen meant videos, comic books and magazines looked better than ever. And they were far cheaper than laptops, making them great ways to get kids and the elderly online.

These days, the tablet has gone from a tinker toy to a post-PC device that people can try to use instead of a PC. Starting in 2012, even Microsoft got in on the action, selling its Surface devices to people who want to get work done on the go. Now even regular Windows PCs without touchscreens are a rarity, showing that tablets have had a lasting influence in the industry.

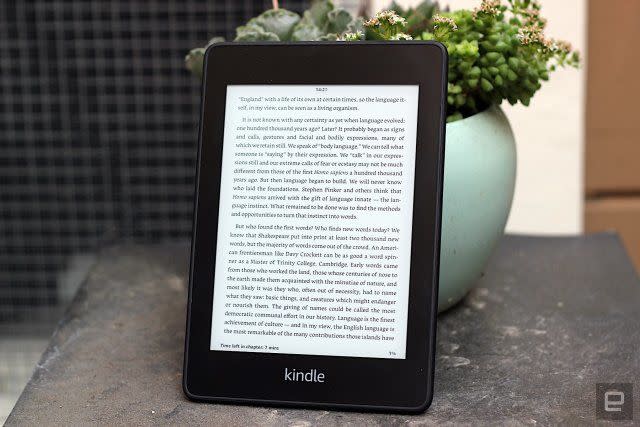

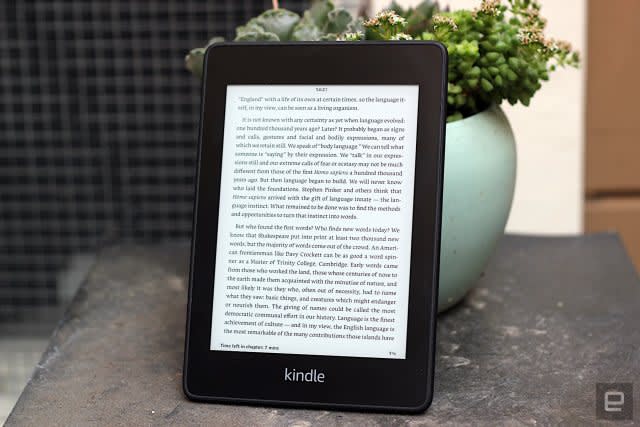

Take a thousand books on a plane

If you were ever tired of carrying around hefty tomes in your backpack, then you might've been one of those who were happy when e-readers arrived. One of the first e-readers to come on the market was the Sony Librie in 2004, which was also one of a few to incorporate electronic paper, a digital ink and display technology that had the look of the real thing and was gentler on the eyes than your smartphone or tablet.

Sony succeeded the Librie with the Reader line of e-book devices, and other rival e-readers emerged over the next few years, such as Kobo, Barnes & Noble's Nook and, of course, the Amazon Kindle, which debuted in 2007. The Kindle is without question the king of the e-readers right now, thanks to how easy it is to buy and download digital books straight from the web to the device, as long as you have an Amazon account.

Speak, and it shall be done

It's always been possible to have a home that's smarter than most; you just needed a series of remote controls to make it work. That began to change when Philips launched Hue, a smart bulb that could be controlled wirelessly. In 2015, Amazon launched Alexa with the Echo, a voice assistant and smart-speaker combo. It's possible to speak to compatible systems in your home and have them do as you command.

Now you can buy a number of smart products, from Nest's smart thermostat to Ring's video doorbell. Google didn't wait long to get its own platform, named Assistant, into a variety of products, including Google Home smart speakers. Smaller, cheaper devices and integrations with third parties would follow, and now it's hard to find a product that doesn't work with one or the other.

Those on iPhones or rocking Microsoft and Samsung products had their own voice assistants too. Siri (2011), Cortana (2014) and Bixby (2017) have their own reasons for existing, although they haven't had the mainstream success that Amazon's and Google's products have enjoyed. In fact, Samsung's offering is so disliked that the company had to allow people to remap its mobile-hardware trigger to something else.

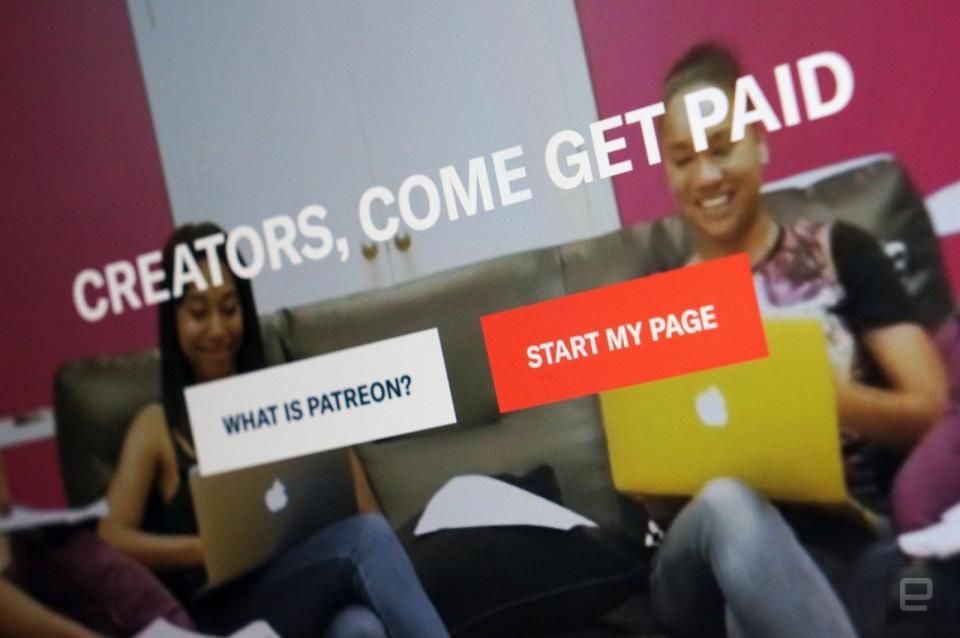

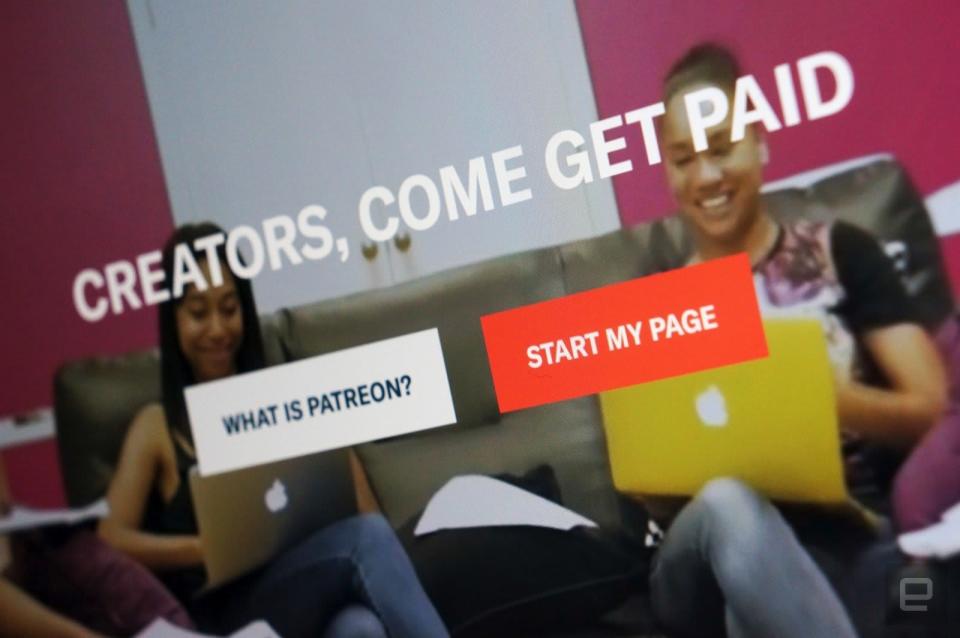

Ask the world for patronage or support

It used to be that if you wanted to start a business or embark on an expensive creative project, you needed to get a loan from a bank or have access to vast sums of wealth. These days, though, that's not necessarily the case, and it's thanks in part to the rise of online crowdfunding and digital patronage. Crowdfunding as an idea isn't new, of course, but it took sites like Kickstarter to make it as easy as clicking a button. If it weren't for the advent of Kickstarter in 2009, innovations like the Pebble smartwatch or the first Oculus headset might never have made it to consumer hands.

Crowdfunding has had its share of controversies too; the Ouya gaming console was a commercial failure, Zach Braff was criticized for seeking funding for a movie project despite his Hollywood stardom and there have been numerous projects that turned out to be shady scams. Plus, while crowdfunding was initially a tool used by small business owners and individuals to fund their passion projects, it has since evolved into a tactic by larger entities to drum up PR and early consumer interest. Kickstarter and its ilk are still helping out the former with smaller, quirkier projects, but the field is much more crowded than it was before.

Beyond crowdfunding, there are creators who have decided to go the digital-patronage route instead, with Patreon being the main enabler. Of course, Patreon appears to be designed for content creators who are churning out work on a regular basis -- like podcasters or artists -- and not quite for those who are making only one project at a time. Nevertheless, it's through this method that many creators and artists are actually making a sustainable income, which is at the heart of the original crowdfunding credo.

Crowdfunding has also become a popular way for people to meet their medical bills in countries without universal health care. Around a third of all GoFundMe projects are to pay for life-saving treatment when insurance can't, or won't, cover the cost.

Share without effort

The sharing economy is one where individuals can sell goods or services for a fee, handled by an online marketplace. One of the most obvious examples of this is in ride sharing, with apps like Uber and Lyft, which debuted in 2010 and 2012, respectively. Airbnb, which launched in 2008, enables people to do the same, letting them offer their own property (or a part of it) as a hotel room.

And being able to summon a cab from your phone and have it drive to your location is pretty amazing. With automated service and payment, it's a lot easier than fumbling for cash on a drunken night out. Not to mention that staying in someone's apartment is way more interesting than crashing in a generic hotel room when you're traveling.

These apps allowed pretty much anyone to become a cab driver with their own car, or a hotelier with their own home. The rides and stays were often cheaper than with an established business, too, and plenty of people loved sticking it to bad taxi services and pricey hotels. On the upside, there were no costs associated with extensive training or background checks. On the downside, there was no extensive training or background checks.

Uber, Lyft and Airbnb, among others, were happy, as they didn't have to maintain the gear that was being rented. But the lack of regulation meant that users had little recourse when things went wrong, and workers weren't treated as employees. Drivers could reject pickups based on race or disability, as could Airbnb renters -- both of which violate federal law. And ride-share companies are also, essentially, circumventing labor laws and not paying benefits to their drivers.

Travel with electricity

According to Nicholas Klein, first they ignore you, then they laugh at you, then they fight you, then you win. In the past two decades, electric vehicles of all sizes have gone from the first category to the third, and possibly even the fourth. The first vehicles may have been powered by batteries, but the automotive industry's received wisdom was that gas was the only fuel that would work. Electric cars were little more than a punchline, something that only Ed Begley Jr. and a handful of other hippies would ever care about.

The year Engadget was founded, however, Tesla Motors began working on its Roadster, an electric sports car. In the following 15 years, the company has gone from knocking out a modified Lotus Elise with an electric drivetrain to the Models S, X and 3. The company made electric vehicles sexy and inspired the automotive industry to build its own EVs.

From those humble beginnings, we now have a whole raft of electric vehicles to choose from. The Chevy Bolt, Nissan Leaf and the plug-in Prius Prime are all here, in part, thanks to Tesla. Sure, government incentives and dieselgate helped push us in this direction, but without a pioneer like Tesla, driving around in a car that you plug in rather than refuel would still be a niche.

Be more flexible

Technology is, unfortunately, still not as flexible as we need it to be. That's likely to change (literally) now that we're a couple of months away from the launch of the first commercially available folding smartphone. By the end of the year, we're also expecting to see the first rollable TVs arrive (for the select few who can afford them). But the benefits of using a flexible display are obvious and are only likely to get better in the future.

A smaller smartphone that folds out to a tablet when required could lighten the load in our pockets and make us more productive on the go. Flexible displays may redefine how we organize our homes if we no longer need to have unsightly TVs cluttering up the space. That's not to mention that we can redesign things like cars and cockpits to have location- and function-specific jobs.

The initial wave of devices might be a little clunky, but it points to a future in which we can all wave goodbye to rigidity. And with it come plenty of avenues for new ways to interact and engage with the future.

Written by Nicole Lee and Daniel Cooper

[Image credits: Chris Velazco, Evan Rodgers, Cherlynn Low, Brian Oh, Edgar Alvarez, Nathan Ingraham, Roberto Baldwin (Engadget); ViewApart via Getty (Social Media), NurPhoto via Getty (Google Maps), Tero Vesalainen via Getty (Streaming)]